Fine-Tuning Platform Upgrades: Larger Models and New Features

Model customization is a versatile tool for many kinds of AI developers. For instance, you can make the strongest open LLMs even better on business-critical tasks by fine-tuning them on domain-specific data. You can also cut both inference cost and latency by training smaller models that remain equally capable on your target tasks.

Our goal with the Together Fine-Tuning Platform is to streamline model training for AI developers, helping them quickly build the best models for their applications with convenient and affordable tools. This release brings a new package of improvements that expands the scope of what you can train: native support for over a dozen recent LLMs, new DPO options, and better integration with the Hugging Face Hub.

In 2025, we have seen a great number of models with over 100B parameters released to the public. These models, such as DeepSeek-R1, Qwen3-235B, or Llama 4 Maverick, offer a dramatic jump in capabilities, sometimes rivaling even the strongest proprietary models on certain tasks. With fine-tuning, you can further refine the abilities of these models, steering them towards the precise behavior you need.

You can now train the latest large models on the Together Fine-Tuning Platform. By implementing recent training optimizations and carefully engineering our platform, we have made it possible to train models with hundreds of billions of parameters easily and at a low cost. We recently announced the general availability of fine-tuning for OpenAI's gpt-oss models on our platform, and we now support even more model families.

With recent progress on tasks such as long-document processing, reliable handling of long contexts is as important as ever. We have overhauled our training systems and found ways to increase the maximum supported context length for most of our models, at no additional cost to you. On average, you can expect the context length to grow by 2x to 4x.

Today, we are making the plethora of models on the Hugging Face Hub available for fine-tuning through Together AI. If a model has already been adapted for a relevant task, you can use it as the starting point for further training and improvement.
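To make the idea of starting from a Hub checkpoint more concrete, here is a minimal sketch of how such a job could be described before it is submitted. It does not call the Together API: the `HubFineTuneRequest` class, the `build_request` helper, and every field name (`training_file`, `hf_repo_id`, `hf_revision`, `n_epochs`) are hypothetical names chosen for illustration, so check the platform documentation for the actual parameters.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class HubFineTuneRequest:
    """Hypothetical description of a job that continues training a Hub checkpoint.

    All field names are illustrative assumptions, not the platform's real schema.
    """
    training_file: str                  # identifier of an already uploaded dataset
    hf_repo_id: str                     # Hub repository in "owner/name" form
    hf_revision: Optional[str] = None   # branch, tag, or commit; None means the default branch
    n_epochs: int = 1                   # number of passes over the training data


def build_request(training_file: str, hf_repo_id: str,
                  hf_revision: Optional[str] = None, n_epochs: int = 1) -> dict:
    """Validate the inputs and return a plain dict that could be serialized to JSON."""
    owner, sep, name = hf_repo_id.partition("/")
    # This sketch only accepts "owner/name" ids; legacy ids without an owner are rejected.
    if not (owner and sep and name) or "/" in name:
        raise ValueError(f"expected a Hub repo id like 'owner/name', got {hf_repo_id!r}")
    if n_epochs < 1:
        raise ValueError("n_epochs must be at least 1")
    request = HubFineTuneRequest(training_file, hf_repo_id, hf_revision, n_epochs)
    # Drop unset optional fields so the payload only carries explicit choices.
    return {key: value for key, value in asdict(request).items() if value is not None}


if __name__ == "__main__":
    # Placeholder identifiers, not real files or repositories.
    print(build_request("file-123", "my-org/my-adapted-model", n_epochs=3))
```

Pinning `hf_revision` to a specific commit is a design choice worth considering in a setup like this: it keeps the starting checkpoint fixed even if the Hub repository is updated later, which makes training runs easier to reproduce.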

Enhance Batch Inference API: New UI and Model Support

Together AI Achieves 90% Faster Training with NVIDIA Blackwell

Related articles

Models Without Labels: Training a Classifier with Minimal Data

The study shows how a minimal number of labels can aid in training a classifier.

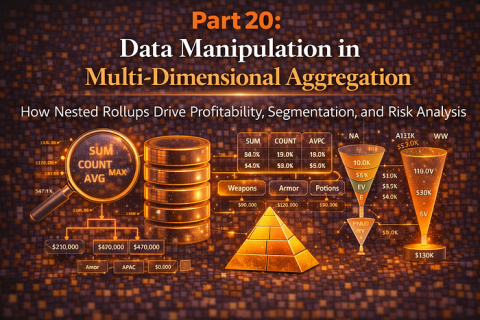

Advanced Data Aggregation Techniques for Business Analytics

Analysis of advanced data aggregation methods for business analytics and risk management.

A Practical Guide to Memory for Autonomous LLM Agents

Exploring the memory architecture for autonomous LLM agents and its impact on performance.