Announcing Replicate's remote MCP server for applications

Last month, we quietly published a local MCP server for Replicate’s HTTP API. Today, we’re introducing a hosted remote MCP server that you can use with apps like Claude Desktop, Claude Code, Cursor, and VS Code. It lets you explore and run all of Replicate’s HTTP APIs through a familiar chat-based, natural-language interface. To get started, head over to mcp.replicate.com.

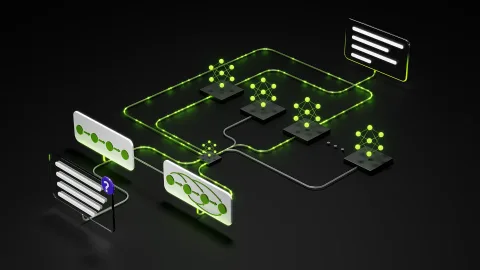

MCP stands for Model Context Protocol. It’s a standard developed at Anthropic for giving language models access to external tools, a capability commonly referred to as “tool use” or “function calling.” It makes language models far more capable, because they can draw on external tools and data sources rather than relying solely on their internal knowledge.
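To make “tool use” a little more concrete, here is a minimal sketch of the kind of message an MCP client sends when a model decides to call a tool. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. The tool name `search_models` and its arguments are hypothetical, chosen only for illustration; they are not taken from Replicate’s server.

```python
import json
from itertools import count

# Simple counter for JSON-RPC request ids.
_request_ids = count(1)


def make_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request for MCP's `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, for illustration only.
request = make_tool_call(
    "search_models",
    {"query": "video models that accept a starting frame"},
)
print(json.dumps(request, indent=2))
```

In practice the app you connect (Claude, Cursor, and so on) builds and sends these messages for you; the sketch only shows what travels over the wire.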

Once you’ve installed the server, you can ask questions in Claude or Cursor, such as:

- Find models: “Find popular video models on Replicate that allow a starting frame as input.”
- Compare models: “What are the differences between veo 3 and veo 3 fast on Replicate?”
- Run models: “Make a video of ‘a tortoise and a hare running in the Olympic 100m’ using veo 3 fast.”

Our official MCP server is available as a hosted service as well as a public npm package that you can run locally. We recommend the remote MCP server: it is the easiest option and suits most users. You simply add the hosted server URL to an app like Claude or Cursor. After adding the server, you’ll be directed to a web-based authentication flow where you can provide a Replicate API token for the server to use on your behalf.

The local MCP server can be run on your machine, requiring only a recent version of Node.js. To install and run the server locally, check out the documentation.

Some HTTP APIs can return very large JSON responses, and it’s easy to fill up a model’s context window with too much data. For instance, Replicate’s search API returns paginated lists of models with extensive metadata for each model.
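As a rough illustration of the problem, the sketch below trims a paginated response down to a few fields before it would be handed to a model. This is not a description of how Replicate’s server handles large responses; the `project_fields` helper, the response shape (`next` and `results` keys), and the sample data are all assumptions made up for the example.

```python
import json
from typing import Any


def project_fields(page: dict[str, Any], fields: tuple[str, ...]) -> dict[str, Any]:
    """Keep only the named fields of each result, plus the pagination cursor."""
    return {
        "next": page.get("next"),
        "results": [
            {name: item[name] for name in fields if name in item}
            for item in page.get("results", [])
        ],
    }


# Made-up sample page; the large schema stands in for "extensive metadata".
sample_page = {
    "next": "cursor-2",
    "results": [
        {
            "owner": "example",
            "name": "video-model",
            "description": "Generates video.",
            "run_count": 1200,
            "openapi_schema": {"padding": "x" * 5000},
        }
    ],
}

trimmed = project_fields(sample_page, ("owner", "name", "description"))
print(len(json.dumps(sample_page)), "characters before trimming")
print(len(json.dumps(trimmed)), "characters after trimming")
```

Dropping bulky fields the model does not need for the current question leaves more of the context window for the conversation itself.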

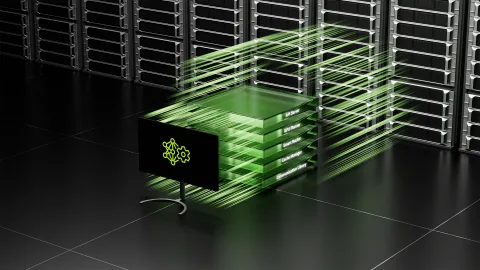

Our new hosted MCP server runs on Cloudflare Workers, making it easy to deploy and scale. We use Cloudflare’s OAuth Provider Framework for Workers to keep your Replicate API token secure. When you connect your AI tools to Replicate, you visit a web page where you enter your Replicate API token, which is then stored in Cloudflare’s KV storage.
