IBM Releases Granite 4.0: Fast, Low-Cost Small Language Models

IBM has launched Granite 4.0, its latest family of open-source small language models, designed for speed and low cost. The models use a hybrid architecture that needs less memory than conventional Transformer-only models, so they can run on standard consumer GPUs instead of expensive server hardware. They are well suited to document summarization, retrieval-augmented generation (RAG) systems, and AI agents.

The ibm-granite/granite-4.0-h-small model has 32 billion parameters and is now available on Replicate. You can start using Granite models immediately through Replicate's HTTP API. With cURL, for instance, you send a POST request that carries your API token in the Authorization header and the model inputs in a JSON body.

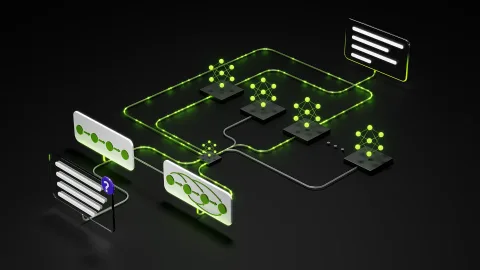
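For readers who prefer Python to cURL, the same request can be sketched with the standard library alone. This is a minimal sketch, not an official client: it assumes the model accepts a `prompt` input field (check the model page on Replicate for the actual input schema) and that your API token is stored in the `REPLICATE_API_TOKEN` environment variable. The helper names `build_request` and `run` are hypothetical.

```python
import json
import os
import urllib.request

# Replicate's HTTP endpoint for creating a prediction with a model by owner/name.
API_URL = "https://api.replicate.com/v1/models/ibm-granite/granite-4.0-h-small/predictions"


def build_request(prompt: str, token: str) -> urllib.request.Request:
    """Build (but do not send) the POST request for one prediction."""
    # Assumption: the model takes a "prompt" field inside "input".
    body = json.dumps({"input": {"prompt": prompt}}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
            # Ask the API to keep the connection open until the prediction finishes.
            "Prefer": "wait",
        },
    )


def run(prompt: str) -> dict:
    """Send the request and return the decoded JSON response."""
    request = build_request(prompt, os.environ["REPLICATE_API_TOKEN"])
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

Calling `run("Summarize this document: ...")` would return the prediction as a dictionary. Building the request separately from sending it makes it easy to inspect the headers and body before spending any API credit.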

Granite models owe their performance to a hybrid design that combines the linear-scaling efficiency of Mamba-2 with the precision of Transformers. Mamba-2's compute cost grows linearly with sequence length, whereas self-attention grows quadratically, so Mamba-2 is more efficient on long inputs such as documents with hundreds of thousands of tokens. Transformer blocks complement this by better supporting tasks that depend on precise attention over the context, such as in-context learning from few-shot examples.
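To get a feel for why linear scaling matters on long inputs, the toy calculation below compares how the amount of work grows with input length. It is only an illustration under strong assumptions: it counts one unit of work per token pair for self-attention and one per token for a sequential scan, and ignores constant factors such as hidden size, state size, and hardware effects. The function names are hypothetical.

```python
def attention_pair_count(n_tokens: int) -> int:
    """Token-pair interactions in full self-attention: grows as n squared."""
    return n_tokens * n_tokens


def linear_scan_steps(n_tokens: int) -> int:
    """State updates in a sequential state-space scan: grows as n."""
    return n_tokens


for n in (1_000, 10_000, 100_000):
    # The gap between the two grows with the input length itself.
    ratio = attention_pair_count(n) // linear_scan_steps(n)
    print(f"{n:>7} tokens: {ratio:,}x more unit operations for full attention")
```

Under this simplified count, making the input ten times longer makes the scan ten times more expensive but full attention a hundred times more expensive.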

Some Granite 4.0 models also use a mixture of experts (MoE) routing strategy. This setup divides parts of the model into several "experts" and activates only a subset of them for each token, so only a fraction of the parameters is used at a time. For example, Granite 4.0 Small has 32 billion parameters, of which only 9 billion are active during inference.
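The routing idea can be illustrated with a small top-k gating sketch. This is a generic toy version of MoE routing, not IBM's implementation: the experts here are plain Python functions on a single number, the router scores are given by hand rather than learned, and all names (`route_top_k`, `moe_forward`) are hypothetical.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Turn raw router scores into probabilities (numerically stable)."""
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores: list[float], k: int) -> dict[int, float]:
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    total = sum(probs[i] for i in chosen)
    return {i: probs[i] / total for i in chosen}


def moe_forward(x: float, experts, router_scores: list[float], k: int = 2) -> float:
    """Run only the selected experts and mix their outputs by router weight."""
    weights = route_top_k(router_scores, k)
    return sum(w * experts[i](x) for i, w in weights.items())


# Four toy experts; only two of them are evaluated per input.
experts = [lambda x: x, lambda x: 2 * x, lambda x: 3 * x, lambda x: 4 * x]
output = moe_forward(1.0, experts, router_scores=[0.0, 2.0, 1.0, -1.0], k=2)
```

The experts that are not selected are never called, which is where the savings come from. With the figures quoted above, 9 billion active out of 32 billion total means roughly 28% of the parameters take part in each forward pass.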

Granite models are designed for practical applications, not just demonstrations. They are lightweight and efficient, making them suitable for summarizing long documents, building systems that extract answers from large datasets, and deploying models on local devices or edge hardware where cloud access is limited.

Granite models are open source and released under the Apache 2.0 license, which permits both commercial and non-commercial use as long as you keep the license and attribution notices. You can also modify the models as you see fit and release those changes under your own terms. This openness makes Granite a practical choice for companies with compliance, security, or customization requirements.

For more details, check out IBM's documentation on deployment, fine-tuning, and integration patterns. IBM has also developed a LangChain integration for Replicate to simplify working with Granite models.
