Optimize Performance with FlashAttention-4

FlashAttention-4 is a new algorithm and kernel co-design that aims to maximize overlap between matrix multiplications and the other resources that bottleneck attention. Modern accelerators such as Blackwell GPUs continue a trend of asymmetric hardware scaling: tensor core throughput grows much faster than other resources, such as shared memory bandwidth and the special function units (SFUs) that compute transcendental operations.

This scaling asymmetry has significant implications for optimizing complex kernels like attention on the Blackwell architecture. At its core, attention consists of two GEMMs with a softmax in between, plus substantial data movement, synchronization, and layout transformations. A naive view might suggest that GEMM speed alone determines kernel performance. In fact, the main bottleneck in the forward pass is the special function units that compute the softmax exponentials, and in the backward pass it is shared memory traffic.
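The structure described above can be sketched in a few lines of plain Python. This is a minimal, unfused reference for a single head, not the FlashAttention-4 kernel; the helper names `matmul`, `softmax_rows`, and `attention` are hypothetical and chosen only for illustration. It shows where the two GEMMs sit and where the exponentials are evaluated.

```python
import math

def matmul(a, b):
    """Plain-Python matrix product of two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax_rows(scores):
    """Row-wise softmax, subtracting the row max for numerical stability."""
    out = []
    for row in scores:
        m = max(row)
        # On a GPU these exponentials are the work done by the special function units.
        e = [math.exp(x - m) for x in row]
        total = sum(e)
        out.append([x / total for x in e])
    return out

def attention(q, k, v):
    """Unfused single-head attention: softmax(q k^T / sqrt(d)) v."""
    scale = 1.0 / math.sqrt(len(q[0]))
    k_t = [list(col) for col in zip(*k)]                            # transpose k
    scores = [[x * scale for x in row] for row in matmul(q, k_t)]   # GEMM 1
    probs = softmax_rows(scores)                                    # softmax in between
    return matmul(probs, v)                                         # GEMM 2

q = [[1.0, 0.0], [0.0, 1.0]]
k = [[1.0, 0.0], [0.0, 1.0]]
v = [[1.0, 2.0], [3.0, 4.0]]
out = attention(q, k, v)
```

Each output row is a weighted average of the rows of `v`, with weights given by the softmax. A fused kernel computes the same result tile by tile without materializing the full score matrix.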

FlashAttention-4 achieves up to 1605 TFLOPs/s on the B200 with BF16, making it 1.3 times faster than cuDNN version 9.13 and 2.7 times faster than Triton. Our main algorithmic and kernel co-design ideas include new software pipelines for maximum overlap, software emulation of the exponential function, and storing intermediate results in tensor memory to relieve shared memory traffic.
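To make "software emulation of the exponential function" concrete, here is a minimal sketch of one common approach: rewrite e^x as 2^(x·log2 e), split the exponent into an integer part and a small fractional part, approximate 2^f with a polynomial, and apply the integer part as a power-of-two scale. The text above does not give the kernel's actual polynomial or coefficients, so the degree-5 Taylor coefficients and the name `exp_emulated` below are assumptions for illustration only.

```python
import math

LOG2_E = 1.4426950408889634  # log2(e)
LN_2 = 0.6931471805599453    # ln(2)

# Taylor coefficients of 2**f = exp(f * ln 2), highest degree first for Horner's rule.
# Assumption: a production kernel would likely use tuned coefficients instead.
_COEFFS = [LN_2 ** k / math.factorial(k) for k in range(5, -1, -1)]

def exp_emulated(x):
    """Approximate exp(x) using only multiplies, adds, and a power-of-two scale."""
    t = x * LOG2_E            # exp(x) == 2**t
    n = round(t)              # integer part of the exponent
    f = t - n                 # fractional part, in [-0.5, 0.5]
    p = 0.0
    for c in _COEFFS:         # Horner evaluation of the polynomial for 2**f
        p = p * f + c
    return math.ldexp(p, n)   # p * 2**n, an exponent adjustment rather than a multiply

approx = exp_emulated(-1.5)
exact = math.exp(-1.5)
```

The point of the design is that the polynomial uses ordinary multiply-add arithmetic, so the exponentials no longer compete for the special function units. In a softmax the inputs are non-positive after subtracting the row maximum, which keeps the result in (0, 1].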

On Blackwell, each of the 148 SMs has 256 KB of tensor memory, providing synchronous storage for intermediate data. Because the fully asynchronous fifth-generation tensor cores write their results to tensor memory rather than registers, they ease register pressure and make larger tiles and deeper pipelines practical. This also makes warp specialization more viable: some warps move tiles while others issue matrix multiplications.

FlashAttention-4 also introduces new scheduling that reduces load imbalance, along with a new programming approach that makes more effective use of Blackwell's resources.

Z.ai Launches GLM-5V-Turbo: A New Multimodal Vision Coding Model

How AI is Changing Research: More Papers, Less Quality

Похожие статьи

Exploring p-hacking: How statistics can deceive you

p-hacking: how data manipulation can distort scientific results.

MIT Researchers Use AI to Identify Atomic Defects in Materials

MIT has developed an AI model for accurate measurement of atomic defects in materials.

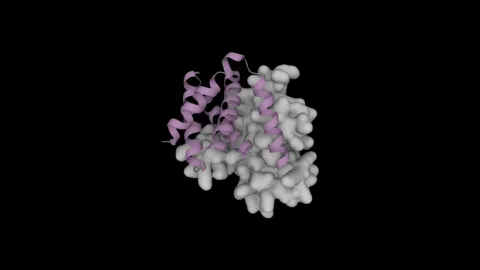

Design Protein Binders with NVIDIA's Proteina-Complexa

NVIDIA introduces Proteina-Complexa for designing high-affinity protein binders and enzymes.