Understanding the Inversion Error in Safe AGI

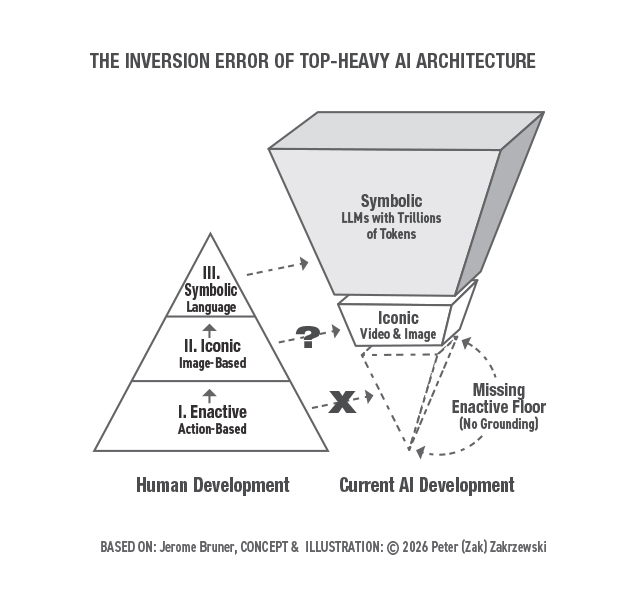

This article explores the Inversion Error, a failure mode that arises in artificial intelligence systems such as Google Gemini. It examines how a lack of physical experience and interaction with reality undermines understanding and logical reasoning. Despite their achievements, AI systems still struggle with causal understanding and logical deduction, which points to the need for a closer analysis of their architecture.

Research by the Google DeepMind team shows that high scores on benchmarks such as Massive Multitask Language Understanding (MMLU) do not amount to true understanding. The models demonstrate fluency without grounding, which leads to the Inversion Error the article describes.

While building a physically grounded model, Gemini Robotics 1.5, researchers ran into task generalization problems, which the article takes as confirmation of a structural gap in the system's architecture. 'Embodied Thinking,' which lets a model generate language-based reasoning before it acts, has been tried as a remedy, yet the Inversion Error remains a pressing issue.
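The think-before-acting pattern can be illustrated with a minimal sketch. This is not Gemini Robotics code: `Step`, `think_then_act`, `toy_reason`, and `toy_choose_action` are hypothetical names, and the two toy functions merely stand in for a model's reasoning and action outputs.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    thought: str  # natural-language reasoning produced before acting
    action: str   # the command selected after the reasoning


def think_then_act(
    observation: str,
    reason: Callable[[str], str],
    choose_action: Callable[[str, str], str],
) -> Step:
    """Produce a language-based thought first, then pick an action conditioned on it."""
    thought = reason(observation)
    action = choose_action(observation, thought)
    return Step(thought=thought, action=action)


# Hypothetical stand-ins for a model's reasoning and action heads (assumptions).
def toy_reason(observation: str) -> str:
    return f"I see {observation}; the cup must be grasped before it can be moved."


def toy_choose_action(observation: str, thought: str) -> str:
    # The action is chosen from the text of the thought, not from the world itself.
    return "grasp(cup)" if "grasped" in thought else "noop"


step = think_then_act("a cup on the table", toy_reason, toy_choose_action)
print(step.thought)
print(step.action)
```

In this sketch the thought is still only text: nothing in the loop checks it against the physical world, which is the kind of gap the article points to.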

The article argues that solving this problem requires a structural intervention, not merely scaling up or applying symbolic reasoning to physical actions. The Inversion Error arises from building the peak of synthetic cognition without the base it depends on.

According to psychologist Jerome Bruner's theory, human cognitive development progresses through three stages: enactive (action-based), iconic (image-based), and symbolic (language-based). Each stage builds on the one before it, so removing the base leaves a system that cannot adequately verify its outputs.
