Create Consistent Characters with New Models

Until recently, the best way to generate images of a consistent character was to train a LoRA: you built a dataset of images of the character and then trained a FLUX LoRA on them. Before that, you might have used a ComfyUI workflow that combined SDXL, ControlNets, IPAdapters, and some non-commercial face landmark models. Today things are much simpler. Several state-of-the-art image models can now do this accurately from a single reference image.

As of July 2025, four models on Replicate can create realistic, accurate outputs from a single reference. In order of release, they are OpenAI's gpt-image-1, Runway's Gen-4 Image, Black Forest Labs' FLUX.1 Kontext, and ByteDance's SeedEdit 3. Since this post was written, two more models have been released: Ideogram's Character and Runway's Gen-4 Image Turbo. FLUX.1 Kontext comes in three flavors, Pro, Max, and Dev; Dev is less powerful but more controllable, and it can be fine-tuned.

To help with this post, I put together a small Replicate model that makes it easy to compare outputs: it runs FLUX.1 Kontext, SeedEdit 3, gpt-image-1, and Runway's Gen-4 in parallel. Price and speed matter too. gpt-image-1 is the slowest and most expensive of these models, while Kontext Dev is the cheapest and fastest, but the savings come with trade-offs in quality, which we explore below.
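The comparison model's own code isn't shown in this post, but the idea of fanning one request out to several models at once and timing each call can be sketched in Python. This is a minimal sketch, not the author's implementation: `compare_models` and the `run_fn` runner are hypothetical names, and the runner is injected so the sketch works with a stub or with a real client.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict, Tuple

# Hypothetical runner type: takes a model reference and an input dict,
# returns that model's output.
Runner = Callable[[str, Dict[str, Any]], Any]


def compare_models(run_fn: Runner, jobs: Dict[str, Dict[str, Any]]) -> Dict[str, Tuple[Any, float]]:
    """Run every model in `jobs` concurrently; return {model: (output, seconds)}."""

    def timed(model: str) -> Tuple[Any, float]:
        start = time.perf_counter()
        output = run_fn(model, jobs[model])
        return output, time.perf_counter() - start

    # Remote predictions are I/O-bound, so one thread per model is enough
    # to overlap the waiting time.
    with ThreadPoolExecutor(max_workers=max(1, len(jobs))) as pool:
        futures = {model: pool.submit(timed, model) for model in jobs}
        return {model: fut.result() for model, fut in futures.items()}


if __name__ == "__main__":
    # Stub runner so the sketch runs offline.
    def fake_run(model: str, inputs: Dict[str, Any]) -> str:
        return f"{model} <- {inputs['prompt']}"

    results = compare_models(fake_run, {
        "model-a": {"prompt": "a portrait"},
        "model-b": {"prompt": "a portrait"},
    })
    for model, (output, seconds) in results.items():
        print(model, output, f"{seconds:.3f}s")
```

With the official `replicate` Python client installed and an API token configured, you could pass `lambda model, inputs: replicate.run(model, input=inputs)` as the runner. Timing each call separately gives a rough view of the speed differences mentioned above, though cold starts and queueing will add noise.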

To compare how well each model preserves a character's identity, we run gpt-image-1 with its quality and fidelity settings on high, and we use FLUX.1 Kontext Pro as the best compromise between quality and speed. In the examples that test photographic accuracy, Gen-4 stands out for both composition and character accuracy.
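As a rough illustration of these settings, here is one way to build a per-model input payload for a single prompt and reference image. The model references are the Replicate listings as I understand them, and the input field names (`input_image`, `image`, `input_images`, `input_fidelity`, `reference_images`) are assumptions. Check each model's API schema on Replicate before relying on them. `build_inputs` is a hypothetical helper.

```python
from typing import Any, Dict


def build_inputs(prompt: str, reference_url: str) -> Dict[str, Dict[str, Any]]:
    """Hypothetical helper: one input dict per model for the same prompt and reference.

    ASSUMPTION: field names are best guesses, not verified against each model's schema.
    """
    return {
        # Kontext Pro: the quality/speed compromise used in this comparison.
        "black-forest-labs/flux-kontext-pro": {
            "prompt": prompt,
            "input_image": reference_url,
        },
        "bytedance/seededit-3.0": {
            "prompt": prompt,
            "image": reference_url,
        },
        # gpt-image-1 with quality and fidelity set to high.
        # ASSUMPTION: the listing may also require your own OpenAI API key.
        "openai/gpt-image-1": {
            "prompt": prompt,
            "input_images": [reference_url],
            "quality": "high",
            "input_fidelity": "high",
        },
        "runwayml/gen4-image": {
            "prompt": prompt,
            "reference_images": [reference_url],
        },
    }


if __name__ == "__main__":
    for model, payload in build_inputs("the same woman, now at a cafe", "https://example.com/ref.jpg").items():
        print(model, payload)
```

Each dict is shaped to be passed as the `input=` argument of `replicate.run` for its model. Keeping all payloads in one place makes it easy to change a shared prompt while leaving each model's settings intact.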

For scene tweaks, which keep most of the original composition and change only a small part, all of the models do well. More demanding requests, such as changing a character's appearance, give more varied results. When asked to make a character clean-shaven, only SeedEdit 3 and gpt-image-1 removed the facial hair, and gpt-image-1 did it by producing a completely different person.

In conclusion, Kontext Pro is versatile and can produce excellent results, but it often introduces enough artifacts around the face to make the image unusable. gpt-image-1 consistently adds its own distinctive features, and its output may not always reach the quality you want.

Create Music with Lyria 3, Our Newest Generation Model

Explore the capabilities of Seedream 5.0 for image creation

Похожие статьи

Anthropic faces data leak issues amid rising AI prominence

Anthropic faced data leaks revealing key aspects of its technologies.

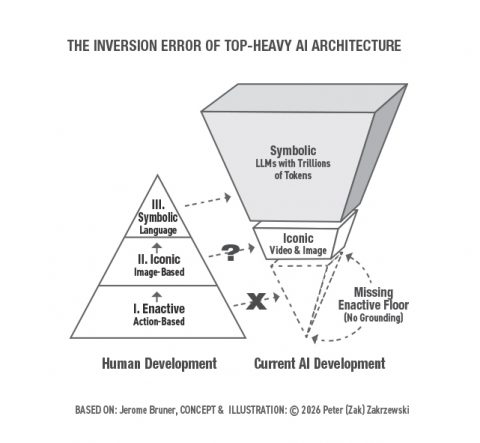

Understanding the Inversion Error in Safe AGI

Exploring the Inversion Error in AI and the need for physical experience for safe AGI.

How a Model 10,000× Smaller Can Outsmart ChatGPT

A model 10,000 times smaller than ChatGPT can outsmart it by reasoning.