Deploying Disaggregated LLM Inference Workloads on Kubernetes

Explore disaggregated LLM deployment on Kubernetes for resource optimization.

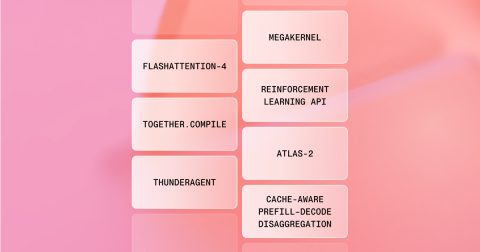
