Amazon Bedrock simplifies customization of Nova models for businesses

Amazon Bedrock offers straightforward ways to customize Nova models to meet specific business needs. As companies scale their AI deployments, they require models that reflect their unique knowledge and workflows. This may involve maintaining a consistent brand voice in customer communications, managing complex industry-specific workflows, or accurately classifying intents in airline reservation systems.

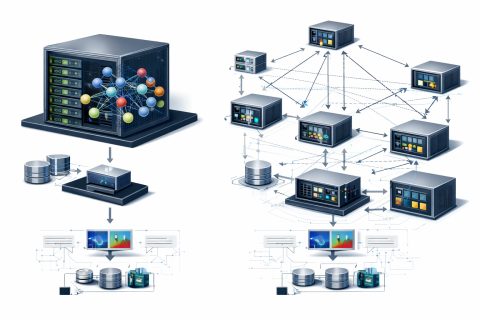

Techniques such as prompt engineering and Retrieval-Augmented Generation (RAG) provide models with additional context to enhance task performance, but they do not instill native understanding into the model. Amazon Bedrock supports three customization approaches for Nova models: supervised fine-tuning (SFT), which trains the model on labeled examples; reinforcement fine-tuning (RFT), which uses a reward function to guide learning; and model distillation, which transfers knowledge from a larger teacher model to a smaller, faster student model.

Each of these methods embeds new knowledge directly into the model weights, resulting in faster inference, lower token costs, and higher accuracy on critical business tasks. Amazon Bedrock automatically manages the training process, requiring only that you upload your data to Amazon S3 and initiate the job through the AWS Management Console, CLI, or API. Deep machine learning expertise is not necessary.
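As a rough sketch of the API route mentioned above, the snippet below assembles the request for a fine-tuning job and hands it to boto3's `create_model_customization_job`. The helper names (`build_fine_tuning_request`, `submit_job`), the default values, and the hyperparameter keys (`epochCount`, `learningRate`) are illustrative assumptions, not taken from the article; check the current Bedrock documentation for the base model identifier and the hyperparameters your Nova model accepts.

```python
def build_fine_tuning_request(job_name, model_name, role_arn, base_model_id,
                              train_s3_uri, output_s3_uri,
                              epochs=2, learning_rate=1e-5):
    """Assemble keyword arguments for a Bedrock model customization job.

    Hypothetical helper: the hyperparameter keys and defaults are assumptions.
    """
    # Both the training data and the output location must live in Amazon S3.
    for uri in (train_s3_uri, output_s3_uri):
        if not uri.startswith("s3://"):
            raise ValueError(f"expected an s3:// URI, got {uri!r}")

    return {
        "jobName": job_name,
        "customModelName": model_name,
        "roleArn": role_arn,                # IAM role Bedrock assumes to read/write S3
        "baseModelIdentifier": base_model_id,
        "customizationType": "FINE_TUNING",  # supervised fine-tuning
        "trainingDataConfig": {"s3Uri": train_s3_uri},
        "outputDataConfig": {"s3Uri": output_s3_uri},
        # Bedrock expects hyperparameter values as strings.
        "hyperParameters": {
            "epochCount": str(epochs),
            "learningRate": str(learning_rate),
        },
    }


def submit_job(request, region="us-east-1"):
    """Submit the request; needs boto3 and AWS credentials, so it is not run here."""
    import boto3  # imported lazily so the builder above works without boto3 installed

    client = boto3.client("bedrock", region_name=region)
    return client.create_model_customization_job(**request)["jobArn"]
```

Keeping the request builder separate from the network call makes it possible to validate the configuration locally before any training job starts and incurs cost.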

Customized Nova models support on-demand invocation, so you pay only for the calls you make rather than for more expensive provisioned capacity. This article walks through a complete implementation of model fine-tuning in Amazon Bedrock using Nova models, demonstrating each step with an intent classifier example that improves performance on a domain-specific task.

You will learn to prepare high-quality training data that drives meaningful model improvements, configure hyperparameters so the model learns without overfitting, and deploy your fine-tuned model for better accuracy and lower latency. We will also show you how to evaluate your results using training metrics and loss curves. By contrast, context-based techniques such as prompt engineering and RAG allow immediate information updates without training, but they consume context-window tokens on every invocation, which raises cumulative cost and latency over time.
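To make the data-preparation step concrete, here is a minimal sketch that turns labeled (utterance, intent) pairs into JSON Lines records for supervised fine-tuning. The conversation layout (`schemaVersion`, `system`, `messages`) follows the Bedrock conversation schema as we understand it for Nova models and should be verified against the current documentation; the system prompt, function names, and intent labels are hypothetical.

```python
import json

# Hypothetical system prompt for the intent classifier example.
SYSTEM_PROMPT = "Classify the customer's message into exactly one intent label."


def to_training_record(utterance, intent):
    """Wrap one labeled example as a single-turn conversation record."""
    return {
        "schemaVersion": "bedrock-conversation-2024",  # assumed schema version string
        "system": [{"text": SYSTEM_PROMPT}],
        "messages": [
            {"role": "user", "content": [{"text": utterance}]},
            {"role": "assistant", "content": [{"text": intent}]},
        ],
    }


def to_jsonl(examples):
    """Serialize (utterance, intent) pairs, one JSON object per line.

    Examples with an empty utterance or label are skipped, since they
    would add noise rather than signal to the training set.
    """
    lines = []
    for utterance, intent in examples:
        utterance, intent = utterance.strip(), intent.strip()
        if not utterance or not intent:
            continue
        record = to_training_record(utterance, intent)
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines) + "\n" if lines else ""


if __name__ == "__main__":
    sample = [
        ("I need to move my flight to Friday", "change_flight"),
        ("Can I bring a second carry-on?", "baggage_policy"),
    ]
    print(to_jsonl(sample), end="")
```

The resulting file can be uploaded to Amazon S3 and referenced as the training data location when the fine-tuning job is created.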
