Optimizing GPU Usage for Language Models and Reducing Costs

Recent research indicates that the prefill and decode stages of large language model (LLM) inference have very different resource requirements. During prefill, the model reads the entire input in parallel and fills the key-value (KV) cache, using up to 92% of the GPU's compute capacity. About ten milliseconds later, during decode, utilization drops to 28%, so most of the hardware sits idle.

In a recent real-time inference project at a large enterprise running 64 H100 GPUs, only about 20 of them were doing meaningful work for 90% of the request processing time. This creates a problem for finance teams: the GPU bill looks like that of a training run, even though the cluster is only serving inference.
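The arithmetic behind that example can be sketched in a few lines. The GPU counts come from the project described above; the hourly price is a hypothetical placeholder, not a quoted rate, and the function names are illustrative.

```python
def idle_gpu_share(total_gpus: int, busy_gpus: int) -> float:
    """Fraction of the fleet that is paid for but not doing useful work."""
    if total_gpus <= 0 or not 0 <= busy_gpus <= total_gpus:
        raise ValueError("expected 0 <= busy_gpus <= total_gpus and total_gpus > 0")
    return (total_gpus - busy_gpus) / total_gpus


def idle_spend_per_hour(total_gpus: int, busy_gpus: int, price_per_gpu_hour: float) -> float:
    """Hourly spend attributable to GPUs that are effectively idle."""
    return (total_gpus - busy_gpus) * price_per_gpu_hour


# 64 H100s, about 20 effectively busy (figures from the example above).
print(idle_gpu_share(64, 20))  # 0.6875

# ASSUMPTION: 3.0 USD per GPU-hour is a placeholder price for illustration only.
print(idle_spend_per_hour(64, 20, price_per_gpu_hour=3.0))  # 132.0
```

Under these figures, roughly two thirds of the fleet is effectively idle for most of the request processing time. Replace the placeholder price with your own rate to estimate what that idle capacity costs.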

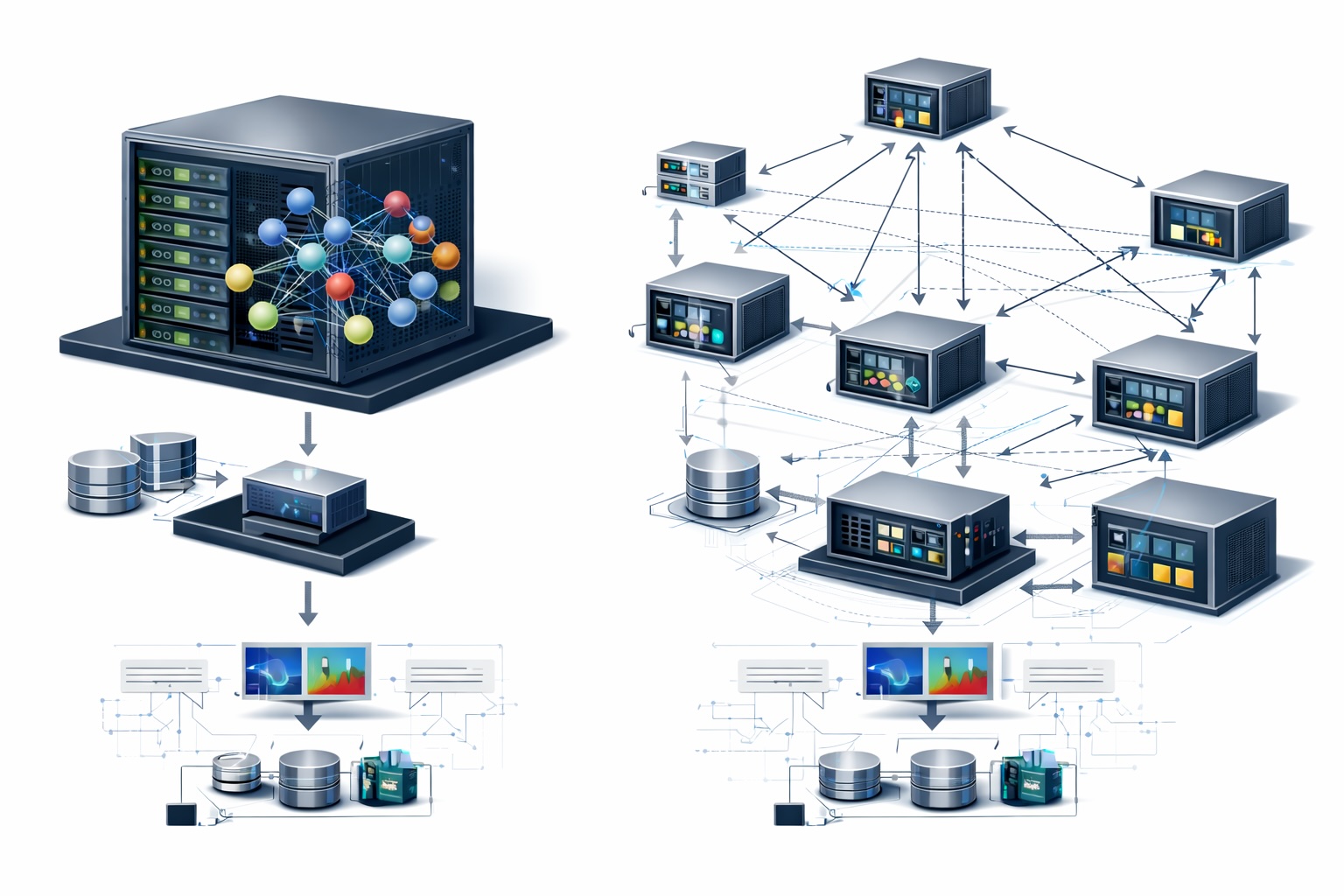

Separating the prefill and decode phases onto different hardware resources can significantly reduce costs. The approach was proposed in the DistServe paper from UC San Diego, and companies such as Meta and LinkedIn have already implemented it successfully. Matching the hardware to each phase avoids overprovisioning and improves overall efficiency.

The standard practice of running both phases on the same GPU pool is inefficient. A GPU sized for prefill is overpowered for decode, while a GPU sized for decode cannot keep up with prefill demand. The result is paying for the worst case in both directions.
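To make the alternative concrete, here is a minimal sketch of a router that keeps two separate worker pools and hands each request from a prefill worker to a decode worker. It is an illustration only, not the DistServe implementation: the class names, the least-loaded policy, and the pool sizes are all assumptions, and the actual KV cache transfer between workers is left as a comment.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Request:
    request_id: str
    prompt_tokens: int
    max_new_tokens: int


@dataclass
class Worker:
    name: str
    queue: deque = field(default_factory=deque)


class DisaggregatedRouter:
    """Hypothetical router with independent prefill and decode pools."""

    def __init__(self, prefill_workers: int, decode_workers: int) -> None:
        self.prefill = [Worker(f"prefill-{i}") for i in range(prefill_workers)]
        self.decode = [Worker(f"decode-{i}") for i in range(decode_workers)]
        self._by_name = {w.name: w for w in self.prefill + self.decode}

    @staticmethod
    def _least_loaded(pool: list) -> Worker:
        # Ties go to the first worker in the pool.
        return min(pool, key=lambda w: len(w.queue))

    def submit(self, request: Request) -> str:
        """Queue a new request on the least-loaded prefill worker."""
        worker = self._least_loaded(self.prefill)
        worker.queue.append(request)
        return worker.name

    def finish_prefill(self, prefill_worker: str) -> str:
        """Move the oldest request on a prefill worker to the decode pool."""
        source = self._by_name[prefill_worker]
        if not source.queue:
            raise LookupError(f"{prefill_worker} has no pending request")
        request = source.queue.popleft()
        target = self._least_loaded(self.decode)
        # A real system would transfer the request's KV cache here.
        target.queue.append(request)
        return target.name


router = DisaggregatedRouter(prefill_workers=2, decode_workers=4)
first = router.submit(Request("req-1", prompt_tokens=2048, max_new_tokens=256))
print(first, "->", router.finish_prefill(first))  # prefill-0 -> decode-0
```

Because the two pools are separate objects, each one can be scaled on its own load signal instead of both being sized for the combined worst case.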

According to a technical analysis by InfoQ, prefill reaches 90-95% utilization, while decode drops to 20-40%. This supports the case for splitting the workload across hardware matched to each stage, which can yield significant savings.
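One way to see what these figures mean for a shared pool is to compute a time-weighted average of the two utilization levels. The sketch below uses the 92% and 28% figures quoted earlier; the share of time spent in prefill is an assumption chosen for illustration, not a measured value.

```python
def blended_utilization(prefill_util: float, decode_util: float, prefill_time_share: float) -> float:
    """Time-weighted average utilization of a GPU that runs both phases."""
    for value in (prefill_util, decode_util, prefill_time_share):
        if not 0.0 <= value <= 1.0:
            raise ValueError("all arguments must be fractions between 0 and 1")
    decode_time_share = 1.0 - prefill_time_share
    return prefill_time_share * prefill_util + decode_time_share * decode_util


# ASSUMPTION: the GPU spends 10% of its time in prefill and 90% in decode.
average = blended_utilization(prefill_util=0.92, decode_util=0.28, prefill_time_share=0.10)
print(f"{average:.1%}")  # 34.4%
```

With decode dominating the timeline, the blended figure stays close to the decode number, which is why a shared pool looks underused even though prefill briefly saturates it.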
