Rede Mater Dei de Saúde implements AI agents for revenue optimization

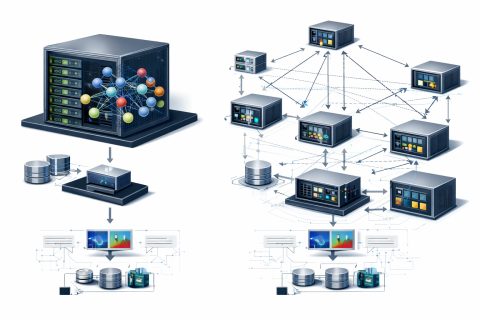

The growing adoption of multi-agent AI systems is transforming critical operations in healthcare. In large hospital networks, where thousands of decisions directly impact cash flow, service delivery times, and the risk of claim denials, the ability to monitor and govern AI agents has become essential for operational sustainability. Rede Mater Dei de Saúde is implementing a suite of 12 AI agents using Amazon Bedrock AgentCore.

With 45 years of history, Rede Mater Dei is one of Brazil's most respected healthcare institutions, operating in cities such as Belo Horizonte, Betim, and Nova Lima. The organization combines technology, advanced analytical intelligence, and high-complexity care to deliver patient-centered outcomes and operational excellence.

In 2024, claim denials in Brazil reached alarming levels, posing a significant challenge for institutions like Rede Mater Dei. Operational processes were typically handled by hundreds of staff and were fragmented, relying on unstructured, dispersed data. The resulting inconsistencies and rework hurt the revenue cycle.

With support from A3Data and AWS, Rede Mater Dei launched a transformation program aimed at reducing the causes of denials and accelerating data analysis. This program features a complete suite of 12 AI agents designed to cover the entire hospital revenue cycle, operating autonomously and making decisions in a coordinated and auditable manner.

Among the first agents deployed are the Contracts Agent, which centralizes complex contractual rules, and the Authorization Agent, which automates requests and interactions with health insurers. All agents run on Amazon Bedrock AgentCore, which provides a secure environment for deploying and scaling AI agents.
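To make the division of labor concrete, here is a minimal sketch of how a contracts agent and an authorization agent might coordinate while leaving an audit trail. It is plain Python and does not use the Amazon Bedrock AgentCore API. Every class, field, and decision label below (`ContractRule`, `AuditLog`, `escalate_to_staff`, and so on) is a hypothetical name chosen for illustration, not a detail of Rede Mater Dei's implementation.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ContractRule:
    """Hypothetical contractual rule for one insurer and procedure."""
    insurer: str
    procedure_code: str
    requires_authorization: bool


@dataclass
class AuditLog:
    """Shared, append-only record of every agent decision."""
    entries: list = field(default_factory=list)

    def record(self, agent: str, decision: str, detail: str) -> None:
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "decision": decision,
            "detail": detail,
        })


class ContractsAgent:
    """Centralizes contractual rules so other agents query one source."""

    def __init__(self, rules, audit: AuditLog):
        self._rules = {(r.insurer, r.procedure_code): r for r in rules}
        self._audit = audit

    def lookup(self, insurer: str, procedure_code: str):
        rule = self._rules.get((insurer, procedure_code))
        outcome = "rule_found" if rule else "rule_missing"
        self._audit.record("contracts", outcome, f"{insurer}/{procedure_code}")
        return rule


class AuthorizationAgent:
    """Decides the next authorization step based on the contract rule."""

    def __init__(self, contracts: ContractsAgent, audit: AuditLog):
        self._contracts = contracts
        self._audit = audit

    def handle(self, insurer: str, procedure_code: str) -> str:
        rule = self._contracts.lookup(insurer, procedure_code)
        if rule is None:
            decision = "escalate_to_staff"  # no rule on file: a human decides
        elif rule.requires_authorization:
            decision = "submit_authorization_request"
        else:
            decision = "no_authorization_needed"
        self._audit.record("authorization", decision, f"{insurer}/{procedure_code}")
        return decision
```

In this sketch, each call to `AuthorizationAgent.handle` writes two audit entries, one per agent involved, so a reviewer can trace which rule lookup led to which decision. Routing unknown cases to staff rather than guessing is a design choice of the sketch, not something the article specifies.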

The partnership with A3Data and AWS also produced a governance structure for the agents, which forms the backbone of the initiative. The project is a pioneering effort in Latin America, testing a large-scale AI solution for high-impact healthcare applications.
