Artificial Intelligence Learns to See in 3D and Understand Space

Artificial intelligence (AI) has made significant strides in understanding and interpreting images, but it still struggles with comprehending three-dimensional space. Current models, such as foundation models, can estimate depth and segment objects in images, yet they fail to fully grasp 3D space. The missing element is geometric fusion—a layer that bridges 2D AI predictions into coherent 3D semantic understanding.

AI can classify photographs, segment objects in street scenes, and generate photorealistic images of non-existent rooms. However, when it comes to physical space, such as determining which object is on which shelf, AI encounters difficulties. The models that dominate computer vision benchmarks operate in flatland and lack an innate understanding of the 3D world.

The gap between pixel-level intelligence and spatial understanding is a significant bottleneck for applying AI in the real world, including robots navigating warehouses and autonomous vehicles. This article explores the three layers of AI that are currently converging to achieve spatial understanding from ordinary photographs.

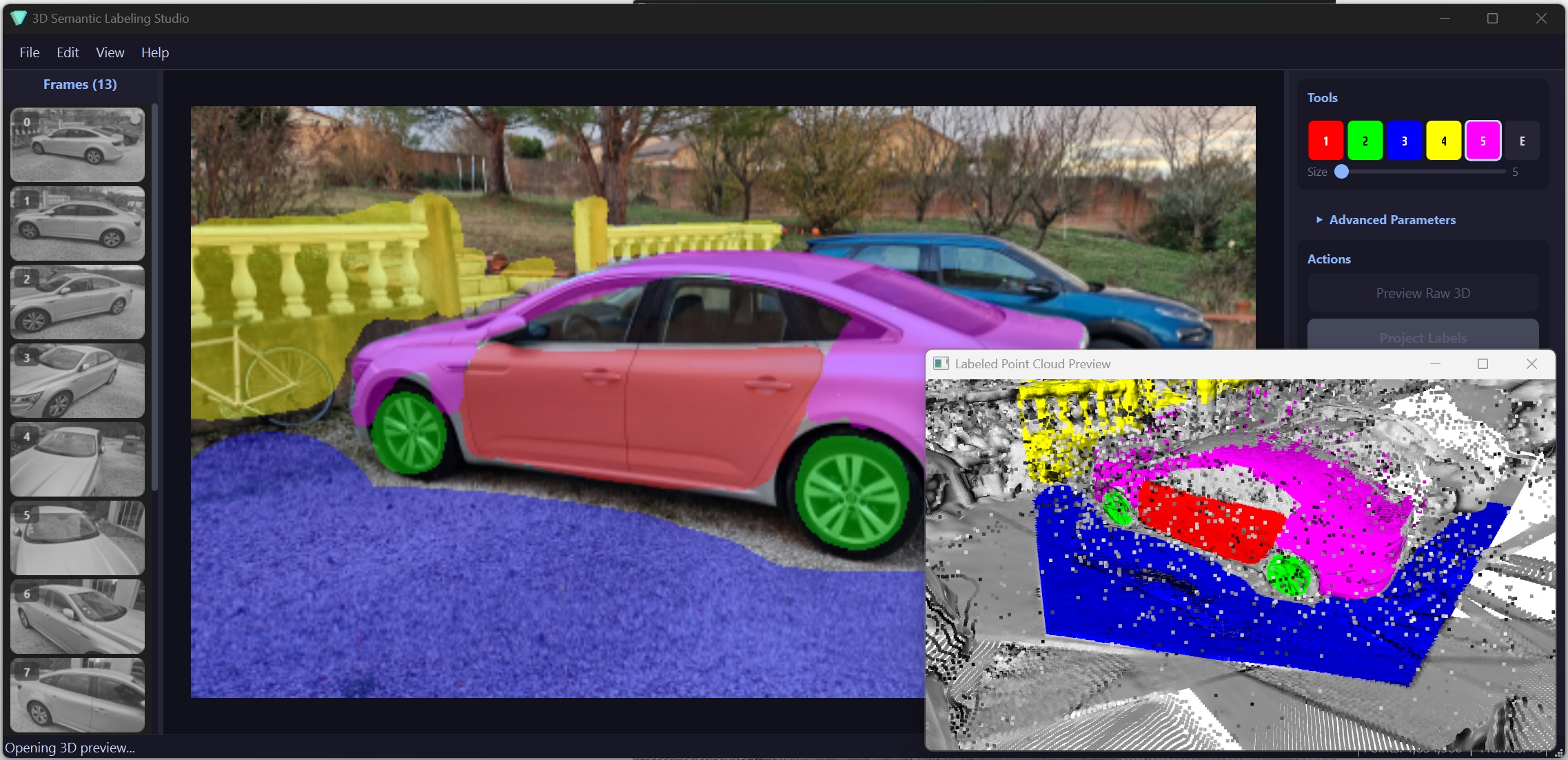

The process of annotating 3D data remains a complex challenge, even though reconstructing 3D geometry from photographs is already a solved problem. Models like Depth-Anything allow for the generation of dense 3D point clouds from a single video, but without semantic information, these data remain useless. To execute queries such as 'show me only the walls' or 'measure the floor area,' semantic labeling for each point is necessary.

Traditional methods require LiDAR scanners and manual annotation, making the process costly. Automated segmentation networks like PointNet++ can simplify the task, but they require labeled data, which is expensive and difficult to produce. Thus, despite the strengths of geometric reconstruction and semantic prediction, there is no universal way to connect them.

The question is not whether AI can understand 3D space, but how to bridge 2D predictions with 3D geometry. In the coming years, it is expected that three independent research threads will merge into a single powerful system for automatic spatial understanding.

Companies like Apple are building AI agents with limits

Overview of Voice Cloning Capabilities on Voxtral without an Encoder

Related articles

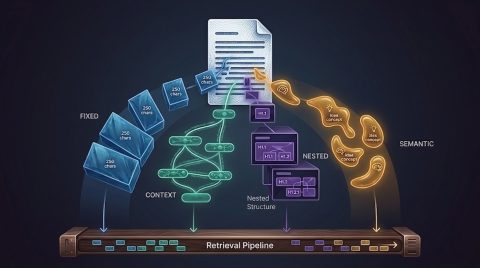

Error in RAG: How Incorrect Data Chunking Affects Outcomes

Incorrect data chunking can lead to system errors, reducing user trust.

Google launches new AI Mode for side-by-side web searching

Google has introduced a new AI Mode for side-by-side web searching.

Anthropic releases Claude Opus 4.7, narrowly retaking lead in LLM

Anthropic has released Claude Opus 4.7, surpassing competitors on key metrics.