Implement Self-Healing Neural Networks in PyTorch

Neural networks deployed in production can lose accuracy when the data they see shifts away from the data they were trained on. This phenomenon, known as model drift, can lead to significant accuracy loss. This article discusses a self-healing neural network implementation in PyTorch that detects drift, injects weight updates, and recovers performance without retraining or downtime.

Imagine your fraud detection model has been in production for two months, and its accuracy has dropped from 92.9% to 44.6% because transaction patterns have shifted. Restoring accuracy normally means collecting fresh labels and retraining, and the degraded model keeps serving predictions in the meantime. The self-healing neural network addresses this with its ReflexiveLayer adapter, which updates a small part of the model in real time.

The adapter is updated in the background, so inference is never halted. It relies on symbolic rules for weak supervision and on a model registry for safe rollback, and it achieves an accuracy recovery of up to 27.8% without altering the backbone weights. This matters most in applications like fraud detection, where every decision counts.
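
The rule-based supervision and registry rollback described above can be sketched in plain Python. This is an illustrative sketch only, not the article's implementation: the rule thresholds, the transaction field names, and the `ModelRegistry` and `accept_or_rollback` names are all assumptions, and the score is assumed to be agreement with rule-derived labels on a recent window.

```python
import copy
from typing import Any, Dict, List, Optional


def rule_label(tx: Dict[str, Any]) -> Optional[int]:
    """Hypothetical symbolic rule: 1 = fraud, 0 = legitimate, None = abstain."""
    if tx["amount"] > 5000 and tx["country"] != tx["home_country"]:
        return 1
    if tx["amount"] < 20:
        return 0
    return None  # the rule has no opinion, so no weak label is produced


class ModelRegistry:
    """Hypothetical registry that stores snapshots of adapter state with a score."""

    def __init__(self) -> None:
        self._versions: List[Dict[str, Any]] = []

    def commit(self, state: Dict[str, Any], score: float) -> int:
        # Deep-copy so later in-place updates cannot corrupt the snapshot.
        self._versions.append({"state": copy.deepcopy(state), "score": score})
        return len(self._versions) - 1

    def best(self) -> Dict[str, Any]:
        return max(self._versions, key=lambda v: v["score"])


def accept_or_rollback(registry, candidate_state, candidate_score, min_gain=0.0):
    """Keep the candidate only if it beats the best stored version.

    Returns (state_to_serve, accepted). On rejection, the best stored
    snapshot is returned so the caller can restore it.
    """
    best = registry.best()
    if candidate_score > best["score"] + min_gain:
        registry.commit(candidate_state, candidate_score)
        return candidate_state, True
    return copy.deepcopy(best["state"]), False
```

With a PyTorch model, the stored state would typically be the adapter's `state_dict()`, restored with `load_state_dict()` on rollback.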

Standard approaches to model drift, such as retraining on fresh labeled data or using ensembles, assume that time and resources are available, which is not always the case. The self-healing architecture was therefore designed around these constraints: it has to work with no labeled data and no time to retrain.

A key design choice is isolating adaptation within a single component, allowing the backbone model to remain unchanged. This prevents catastrophic forgetting, where neural networks lose previously learned behaviors when updated with new data. Consequently, the adapter can make corrections without affecting the primary model.
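
To make the isolation idea concrete, here is a minimal PyTorch sketch. The source names the `ReflexiveLayer` but does not describe its internals, so the residual bottleneck design, the layer sizes, the `SelfHealingNet` wrapper, the `heal_step` helper, and the choice to freeze the classification head as well are all assumptions made for illustration.

```python
import torch
import torch.nn as nn


class ReflexiveLayer(nn.Module):
    """Hypothetical residual adapter: output = x + up(relu(down(x)))."""

    def __init__(self, dim: int, hidden: int = 16):
        super().__init__()
        self.down = nn.Linear(dim, hidden)
        self.up = nn.Linear(hidden, dim)
        # Zero-init the output projection so the adapter starts as an identity map
        # and cannot change predictions before the first healing step.
        nn.init.zeros_(self.up.weight)
        nn.init.zeros_(self.up.bias)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return x + self.up(torch.relu(self.down(x)))


class SelfHealingNet(nn.Module):
    def __init__(self, in_dim: int = 8, feat_dim: int = 32, n_classes: int = 2):
        super().__init__()
        self.backbone = nn.Sequential(nn.Linear(in_dim, feat_dim), nn.ReLU())
        self.adapter = ReflexiveLayer(feat_dim)
        self.head = nn.Linear(feat_dim, n_classes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.head(self.adapter(self.backbone(x)))

    def freeze_all_but_adapter(self) -> None:
        # Only adapter parameters receive gradients; backbone and head stay fixed.
        for p in self.parameters():
            p.requires_grad = False
        for p in self.adapter.parameters():
            p.requires_grad = True


def heal_step(model: SelfHealingNet, x, pseudo_labels, lr: float = 1e-2) -> float:
    """One gradient step on the adapter only, using weak (pseudo) labels."""
    model.freeze_all_but_adapter()
    opt = torch.optim.SGD(model.adapter.parameters(), lr=lr)
    loss = nn.functional.cross_entropy(model(x), pseudo_labels)
    opt.zero_grad()
    loss.backward()
    opt.step()
    return loss.item()
```

Because the optimizer only ever sees `model.adapter.parameters()`, discarding or resetting the adapter restores the original model's behavior exactly.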

Two independent signals control how often self-healing is triggered. Gating the process this way avoids unnecessary computation and keeps performance degradation from accumulating. The result is a practical way to maintain model performance as the data changes.
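
The article does not say which two signals are used, so the following sketch picks two plausible ones purely as assumptions: a drop in mean prediction confidence relative to a baseline, and the rate at which predictions disagree with the symbolic rules. Requiring both signals at once, the cooldown, and every threshold are likewise hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HealingGate:
    """Hypothetical gate deciding when a healing step may run."""

    baseline_confidence: float
    max_confidence_drop: float = 0.10    # assumed threshold for signal 1
    max_rule_disagreement: float = 0.25  # assumed threshold for signal 2
    cooldown_batches: int = 5            # batches to skip after each heal
    _since_last_heal: int = 10**9        # large value so the first heal is allowed

    def should_heal(self, mean_confidence: float, rule_disagreement: float) -> bool:
        self._since_last_heal += 1
        # Signal 1: the model has become less confident than it was at deployment.
        drifted = (self.baseline_confidence - mean_confidence) > self.max_confidence_drop
        # Signal 2: predictions contradict the symbolic rules too often.
        disagrees = rule_disagreement > self.max_rule_disagreement
        cooled_down = self._since_last_heal > self.cooldown_batches
        if drifted and disagrees and cooled_down:
            self._since_last_heal = 0
            return True
        return False
```

Requiring both signals makes the gate conservative; triggering on either one would react faster at the cost of more healing steps.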

Become an AI Engineer Fast: Skills, Projects, Salary

Hershey Implements AI to Optimize Supply Chain Operations

Related articles

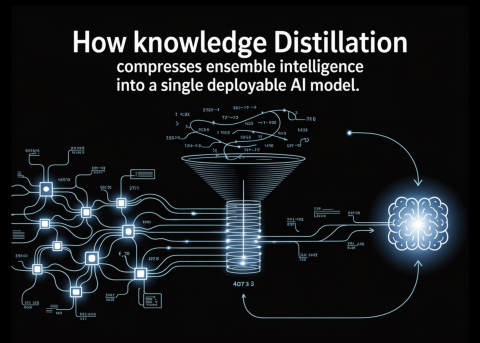

How Knowledge Distillation Compresses Ensemble Intelligence into a Single Deployable AI Model

Knowledge distillation allows creating compact AI models inheriting ensemble performance.

NVIDIA introduces AITune: a toolkit for optimizing PyTorch model inference

NVIDIA has introduced AITune, a tool for automating the optimization of PyTorch models.

OSGym: a new framework for managing 1,000+ OS replicas

OSGym is a new framework for training AI agents operating with OS, developed by researchers from MIT and other universities.