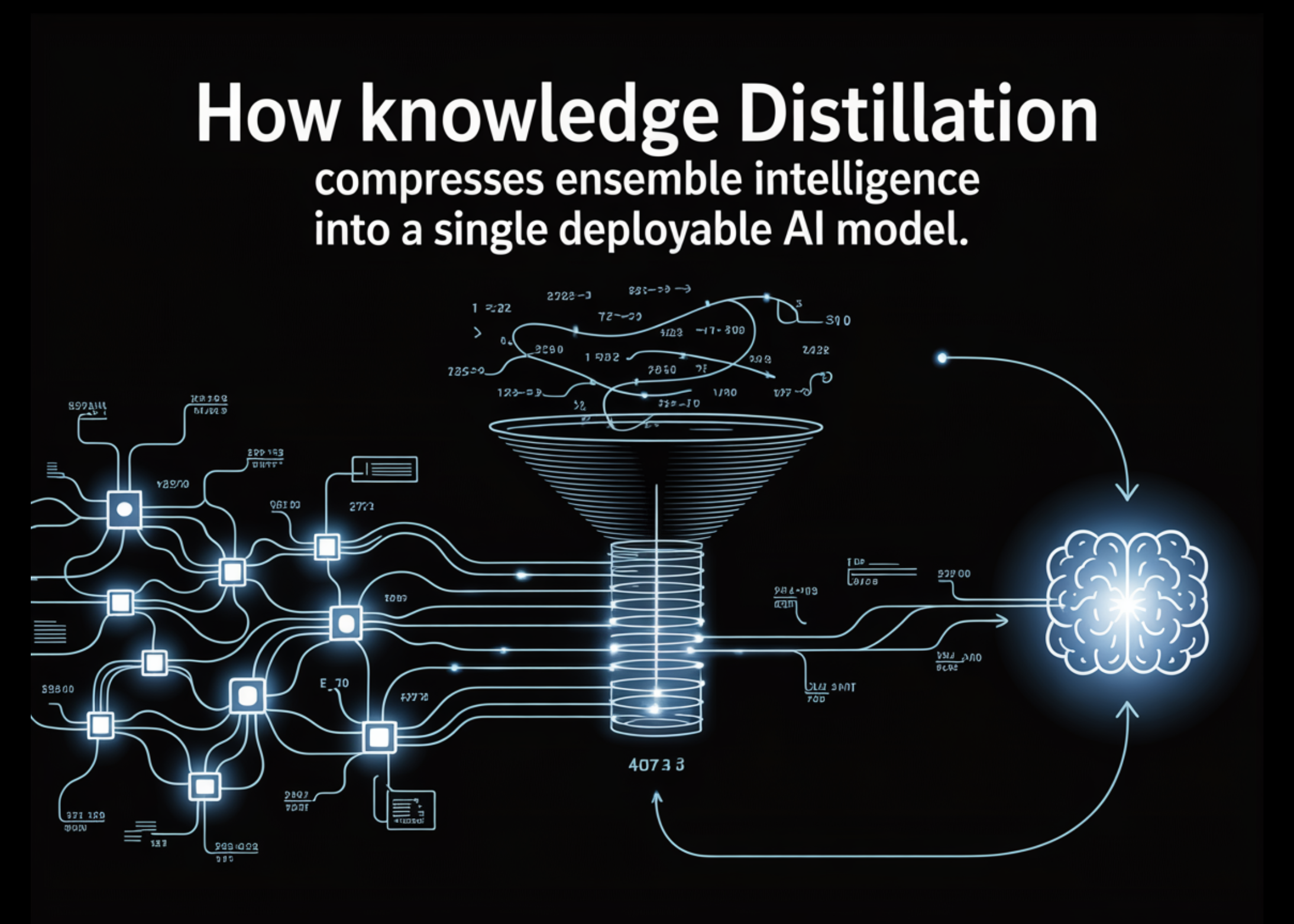

How Knowledge Distillation Compresses Ensemble Intelligence into a Single Deployable AI Model

Complex prediction problems often lead to ensembles, because combining multiple models improves accuracy by reducing variance and capturing diverse patterns. In production, however, running many models for every prediction is often impractical because of latency constraints and operational complexity. Rather than discarding the ensemble, knowledge distillation keeps it as a teacher and trains a smaller student model on the ensemble’s soft probability outputs. The student can inherit much of the ensemble’s performance while staying lightweight and fast enough to deploy.
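As a minimal sketch of how an ensemble can act as a single teacher, the NumPy code below averages the class probabilities of several members into one soft-target distribution. It assumes the members are combined by simple probability averaging (the article does not state its combination rule), and the member logits are random placeholders rather than trained models. `softmax` and `ensemble_probs` are hypothetical helper names.

```python
import numpy as np

def softmax(logits: np.ndarray) -> np.ndarray:
    """Row-wise softmax, shifted by the row max for numerical stability."""
    z = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def ensemble_probs(member_logits: list) -> np.ndarray:
    """Average the class probabilities of all ensemble members (soft voting).

    member_logits: one (n_samples, n_classes) array per member.
    Returns an (n_samples, n_classes) array whose rows sum to 1.
    """
    probs = np.stack([softmax(l) for l in member_logits])  # (n_members, n, c)
    return probs.mean(axis=0)

rng = np.random.default_rng(0)
# 12 placeholder "members", each producing logits for 4 samples and 2 classes
members = [rng.normal(size=(4, 2)) for _ in range(12)]
teacher_probs = ensemble_probs(members)
print(teacher_probs.shape)                        # (4, 2)
print(np.allclose(teacher_probs.sum(axis=1), 1))  # True
```

Averaging probabilities is one common choice; averaging logits before a single softmax is another, and the two generally produce slightly different teacher distributions.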

In this article, we build this pipeline from scratch: we train a 12-model teacher ensemble, generate soft targets with temperature scaling, and distill the ensemble into a student that recovers 53.8% of the ensemble’s accuracy edge with 160× compression.

Knowledge distillation is a model compression technique in which a large, pre-trained “teacher” model transfers its learned behavior to a smaller “student” model. Instead of training solely on ground-truth labels, the student learns to mimic the teacher’s predictions. It captures not just the teacher’s final answers but the richer information in its probability distributions, such as how much probability the teacher assigns to the incorrect class, which signals how ambiguous a sample is.
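Temperature-scaled soft targets and a distillation objective can be sketched in NumPy as follows. This uses the widely used Hinton-style formulation: soften teacher and student outputs with a temperature T, match them with a KL divergence scaled by T², and mix in ordinary cross-entropy on the hard labels. The helper names `softmax_t` and `distillation_loss` and the defaults `T=4.0` and `alpha=0.5` are illustrative assumptions, not the article’s settings, and the sketch assumes the binary task is modeled with two output logits.

```python
import numpy as np

def softmax_t(logits, T=1.0):
    """Temperature-scaled softmax over the last axis.

    Dividing logits by T > 1 flattens the distribution, exposing how much
    probability goes to non-argmax classes.
    """
    z = np.asarray(logits, dtype=float) / T
    z = z - z.max(axis=-1, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def distillation_loss(student_logits, soft_targets, labels, T=4.0, alpha=0.5):
    """Hinton-style KD loss (sketch with hypothetical defaults).

    soft_targets: teacher probabilities already computed at temperature T.
    alpha weights the soft term; (1 - alpha) weights hard-label cross-entropy.
    The T**2 factor keeps the soft term's gradient scale comparable across T.
    """
    eps = 1e-12
    p_s_T = softmax_t(student_logits, T)
    # KL(teacher || student) per sample, averaged over the batch
    kl = np.sum(soft_targets * (np.log(soft_targets + eps) - np.log(p_s_T + eps)),
                axis=-1).mean()
    # Ordinary cross-entropy against the ground-truth labels at T = 1
    p_s = softmax_t(student_logits, 1.0)
    ce = -np.log(p_s[np.arange(len(labels)), labels] + eps).mean()
    return alpha * T**2 * kl + (1.0 - alpha) * ce

teacher_logits = np.array([[2.0, 0.5], [0.2, 1.8]])
labels = np.array([0, 1])
print(softmax_t(teacher_logits, T=1.0))  # sharper distribution
print(softmax_t(teacher_logits, T=4.0))  # flatter distribution
soft = softmax_t(teacher_logits, T=4.0)  # soft targets, computed once
print(distillation_loss(np.zeros((2, 2)), soft, labels))
```

For an ensemble teacher, `soft_targets` would be the averaged member probabilities at the same temperature T, generated once before the student is trained.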

This approach enables the student to approximate the performance of complex models while remaining significantly smaller and faster. Originating from early work on compressing large ensemble models into single networks, knowledge distillation is now widely used across domains like NLP, speech, and computer vision, and has become especially important in scaling down massive generative AI models into efficient, deployable systems.

To demonstrate the process, we create a synthetic dataset for a binary classification task: 5,000 samples with 20 features, some informative and some redundant. The dataset is split into training and test sets so that performance is evaluated on unseen data. StandardScaler then standardizes each feature to zero mean and unit variance, which helps neural networks train more efficiently.
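A minimal scikit-learn sketch of this data preparation, assuming the dataset comes from `make_classification`. The article does not give the number of informative or redundant features, the split ratio, or the seed, so `n_informative=10`, `n_redundant=5`, `test_size=0.2`, and `random_state=42` are placeholder choices.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Placeholder feature mix; the article only says "some" are informative/redundant.
X, y = make_classification(
    n_samples=5000,
    n_features=20,
    n_informative=10,
    n_redundant=5,
    n_classes=2,
    random_state=42,
)

# Hold out an assumed 20% as unseen test data; stratify to keep class balance.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# Fit the scaler on the training split only, then apply it to both splits.
scaler = StandardScaler()
X_train = scaler.fit_transform(X_train)
X_test = scaler.transform(X_test)

print(X_train.shape, X_test.shape)  # (4000, 20) (1000, 20)
```

Fitting StandardScaler on the training split only and reusing its statistics for the test split keeps test-set information out of training.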

We define two neural network architectures: a TeacherModel and a StudentModel. The teacher architecture is used for each large model in the ensemble; it is deep and wide, which makes it highly expressive but computationally expensive at inference time. The student is a smaller, more efficient network with fewer layers and parameters. Its goal is not to match the teacher’s complexity but to learn the teacher’s behavior through distillation.
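To make the size gap concrete, here is a framework-free NumPy sketch of two fully connected networks with ReLU hidden layers. It is not the article’s TeacherModel or StudentModel: the layer widths are hypothetical, chosen only to contrast a deep, wide teacher with a small student on the same 20-feature, two-logit task, and `init_mlp`, `forward`, and `count_params` are illustrative helpers.

```python
import numpy as np

def init_mlp(layer_sizes, rng):
    """Return (W, b) pairs for a fully connected net, using He-style init."""
    return [
        (rng.normal(0.0, np.sqrt(2.0 / fan_in), size=(fan_in, fan_out)),
         np.zeros(fan_out))
        for fan_in, fan_out in zip(layer_sizes[:-1], layer_sizes[1:])
    ]

def forward(params, x):
    """ReLU on hidden layers, linear output layer producing logits."""
    for i, (W, b) in enumerate(params):
        x = x @ W + b
        if i < len(params) - 1:
            x = np.maximum(x, 0.0)
    return x

def count_params(params):
    """Total number of weights and biases."""
    return sum(W.size + b.size for W, b in params)

rng = np.random.default_rng(0)
# Hypothetical widths: 20 input features, 2 output logits.
teacher = init_mlp([20, 256, 256, 128, 2], rng)  # deep and wide
student = init_mlp([20, 16, 2], rng)             # one small hidden layer

x = rng.normal(size=(8, 20))
print(forward(teacher, x).shape, forward(student, x).shape)  # (8, 2) (8, 2)
print(count_params(teacher), count_params(student))          # 104322 370
```

With these made-up widths a single teacher has 104,322 parameters and the student 370; the article’s 160× figure depends on its own architectures and ensemble size, which this sketch does not attempt to reproduce.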
