Alibaba Introduces VimRAG: a New Framework for Multimodal RAG

Retrieval-Augmented Generation (RAG) has become a standard technique for integrating large language models with external knowledge. However, when it comes to mixing text with images and videos, this approach starts to falter. Researchers at Alibaba's Tongyi Lab have introduced 'VimRAG', a framework specifically designed to address this issue.

Many modern RAG agents follow a Thought-Action-Observation cycle in which the agent appends its entire interaction history to a single, ever-growing context. For tasks involving videos or visually rich documents, this quickly becomes impractical: as reasoning steps accumulate, the share of the context taken up by critical observations shrinks toward zero. A common response is memory-based compression, in which the agent iteratively summarizes past observations into a compact state so that information density stays high.
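To make the density argument concrete, here is a minimal toy sketch contrasting the two strategies. It is not VimRAG code: tokens are plain strings, "relevance" is a hypothetical keyword set, and `make_summarizer` is an assumed stand-in for a learned summarizer that simply keeps relevant tokens up to a fixed budget.

```python
from typing import Callable, List, Set

Tokens = List[str]

def append_all(context: Tokens, step: Tokens) -> Tokens:
    """Thought-Action-Observation loop without memory: every step is appended to one growing context."""
    return context + step

def make_summarizer(keywords: Set[str], budget: int) -> Callable[[Tokens, Tokens], Tokens]:
    """Hypothetical stand-in summarizer: keep only relevant tokens, capped at the `budget` most recent."""
    def summarize(state: Tokens, step: Tokens) -> Tokens:
        merged = state + [t for t in step if t in keywords]
        # Guard budget <= 0 explicitly, because merged[-0:] would return the whole list.
        return merged[-budget:] if budget > 0 else []
    return summarize

def density(context: Tokens, keywords: Set[str]) -> float:
    """Fraction of context tokens that carry task-relevant information."""
    if not context:
        return 0.0
    return sum(t in keywords for t in context) / len(context)

# Toy episode: each step is a thought, a search action, and 50 mostly irrelevant "frame" tokens.
keywords = {"goal", "answer"}
steps = [
    ["think", "search"] + ["frame"] * 50 + ["goal"],
    ["think", "search"] + ["frame"] * 50,
    ["think", "search"] + ["frame"] * 50 + ["answer"],
]
summarize = make_summarizer(keywords, budget=8)
full: Tokens = []
state: Tokens = []
for step in steps:
    full = append_all(full, step)      # grows linearly with every step
    state = summarize(state, step)     # stays bounded by the budget
```

After three steps the appended context holds 158 tokens of which only 2 are relevant, while the compressed state holds just those 2. A real agent would use a model to write the summary; the keyword filter only stands in for that step.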

In a pilot study comparing memory strategies, graph-based memory substantially reduced redundant search actions. A second study of four memory strategies found that selectively retaining only the relevant visual tokens gave the best trade-off between information density and accuracy.
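Selective retention can be sketched as a top-k filter over visual tokens. The scoring rule below is an assumption for illustration: each visual token is represented by an embedding, and its relevance is the cosine similarity to a query embedding. The article does not say how VimRAG scores tokens.

```python
import math
from typing import List, Sequence

def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def retain_relevant_tokens(tokens: List[Sequence[float]], query: Sequence[float], k: int) -> List[int]:
    """Indices of the k tokens most similar to the query, returned in their original order."""
    ranked = sorted(range(len(tokens)), key=lambda i: cosine(tokens[i], query), reverse=True)
    # Re-sort the kept indices so spatial/temporal order of the visual tokens is preserved.
    return sorted(ranked[:max(k, 0)])

# Four toy 2-D "visual token" embeddings and a query pointing along the first axis.
tokens = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
query = [1.0, 0.0]
kept = retain_relevant_tokens(tokens, query, k=2)
```

Here `kept` is `[0, 2]`: the two tokens pointing roughly in the query's direction survive and the rest are dropped. Returning indices in original order is a design choice so downstream position information stays consistent.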

VimRAG's architecture has three components. The first, the Multimodal Memory Graph, models the reasoning process as a dynamic directed acyclic graph in which each node records its parent nodes, its sub-query, and its visual tokens. The second, Graph-Modulated Visual Memory Encoding, treats the assignment of visual tokens as a resource allocation problem. The third, Graph-Guided Policy Optimization, improves training efficiency by excluding steps that contribute irrelevant information from policy updates.
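The three components can be illustrated with a small toy sketch. Everything here is an assumption rather than VimRAG's published method: `MemoryNode`, `MemoryGraph`, the `relevance` score, the weighting rule "relevance × (1 + number of child nodes)" used to split a token budget, and the REINFORCE-style `masked_policy_loss` are all hypothetical stand-ins that only mirror the ideas described above.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class MemoryNode:
    node_id: int
    parents: List[int]        # earlier nodes this reasoning step builds on
    sub_query: str            # sub-question issued at this step
    visual_tokens: List[int]  # placeholder ids of retained visual tokens
    relevance: float = 0.0    # hypothetical score used for budgeting and masking

class MemoryGraph:
    """Toy DAG memory: nodes may only point to existing nodes, so cycles cannot form."""
    def __init__(self) -> None:
        self.nodes: Dict[int, MemoryNode] = {}

    def add(self, node: MemoryNode) -> None:
        if node.node_id in self.nodes:
            raise ValueError(f"node {node.node_id} already exists")
        for p in node.parents:
            if p not in self.nodes:
                raise ValueError(f"parent {p} must be added before node {node.node_id}")
        self.nodes[node.node_id] = node

    def children_count(self) -> Dict[int, int]:
        counts = {nid: 0 for nid in self.nodes}
        for node in self.nodes.values():
            for p in node.parents:
                counts[p] += 1
        return counts

    def allocate_budget(self, total_tokens: int) -> Dict[int, int]:
        """Split a visual-token budget across nodes, weighting by relevance * (1 + #children)."""
        children = self.children_count()
        weights = {nid: n.relevance * (1 + children[nid]) for nid, n in self.nodes.items()}
        total_w = sum(weights.values())
        if total_w == 0:
            return {nid: 0 for nid in self.nodes}
        raw = {nid: total_tokens * w / total_w for nid, w in weights.items()}
        alloc = {nid: int(r) for nid, r in raw.items()}
        # Largest-remainder rounding so the allocations sum exactly to total_tokens.
        leftover = total_tokens - sum(alloc.values())
        for nid in sorted(raw, key=lambda i: raw[i] - alloc[i], reverse=True)[:leftover]:
            alloc[nid] += 1
        return alloc

def masked_policy_loss(log_probs: List[float], step_relevant: List[bool], advantage: float) -> float:
    """REINFORCE-style loss that skips steps flagged as irrelevant (a simplification)."""
    kept = [lp for lp, keep in zip(log_probs, step_relevant) if keep]
    if not kept:
        return 0.0
    return -advantage * sum(kept) / len(kept)

g = MemoryGraph()
g.add(MemoryNode(0, [], "Who appears in the opening scene?", [1, 2, 3], relevance=1.0))
g.add(MemoryNode(1, [0], "What is that person holding?", [4, 5], relevance=1.0))
g.add(MemoryNode(2, [0], "What is the weather like?", [6], relevance=0.0))
budget = g.allocate_budget(100)  # {0: 75, 1: 25, 2: 0}
relevant = [n.relevance > 0 for n in g.nodes.values()]
loss = masked_policy_loss([-0.5, -1.0, -2.0], relevant, advantage=1.0)
```

In this toy run the root node, which two later steps build on, receives most of the budget, the irrelevant weather step receives none, and that same step is left out of the loss. The actual VimRAG encoding and optimization objectives are likely more involved.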

VimRAG was evaluated on nine benchmarks, including HotpotQA and SQuAD, and performed particularly well on complex multimodal understanding tasks. The architecture could improve how language models work with visual data and extend their use to more demanding scenarios.

Creating a Pose2Sim Pipeline for Markerless 3D Kinematics

How Knowledge Distillation Compresses Ensemble Intelligence into a Single Deployable AI Model

Related articles

Google adds AI features to Chrome for saving workflows

Google adds a new Skills feature to Chrome for saving AI prompts.

Google launches Gemini's Personal Intelligence feature in India

Google launches Gemini's Personal Intelligence feature in India, allowing users to receive personalized answers.

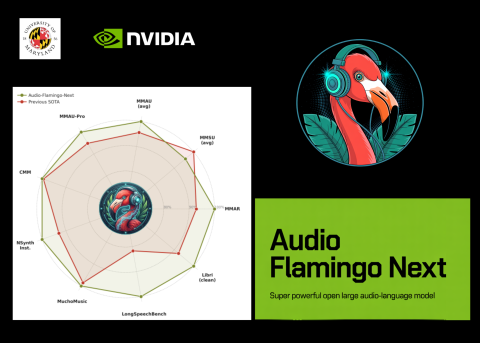

NVIDIA and University of Maryland Unveil Audio Flamingo Next

NVIDIA and University of Maryland unveiled Audio Flamingo Next, a powerful audio-language model for processing speech and sounds.