NVIDIA and University of Maryland Unveil Audio Flamingo Next

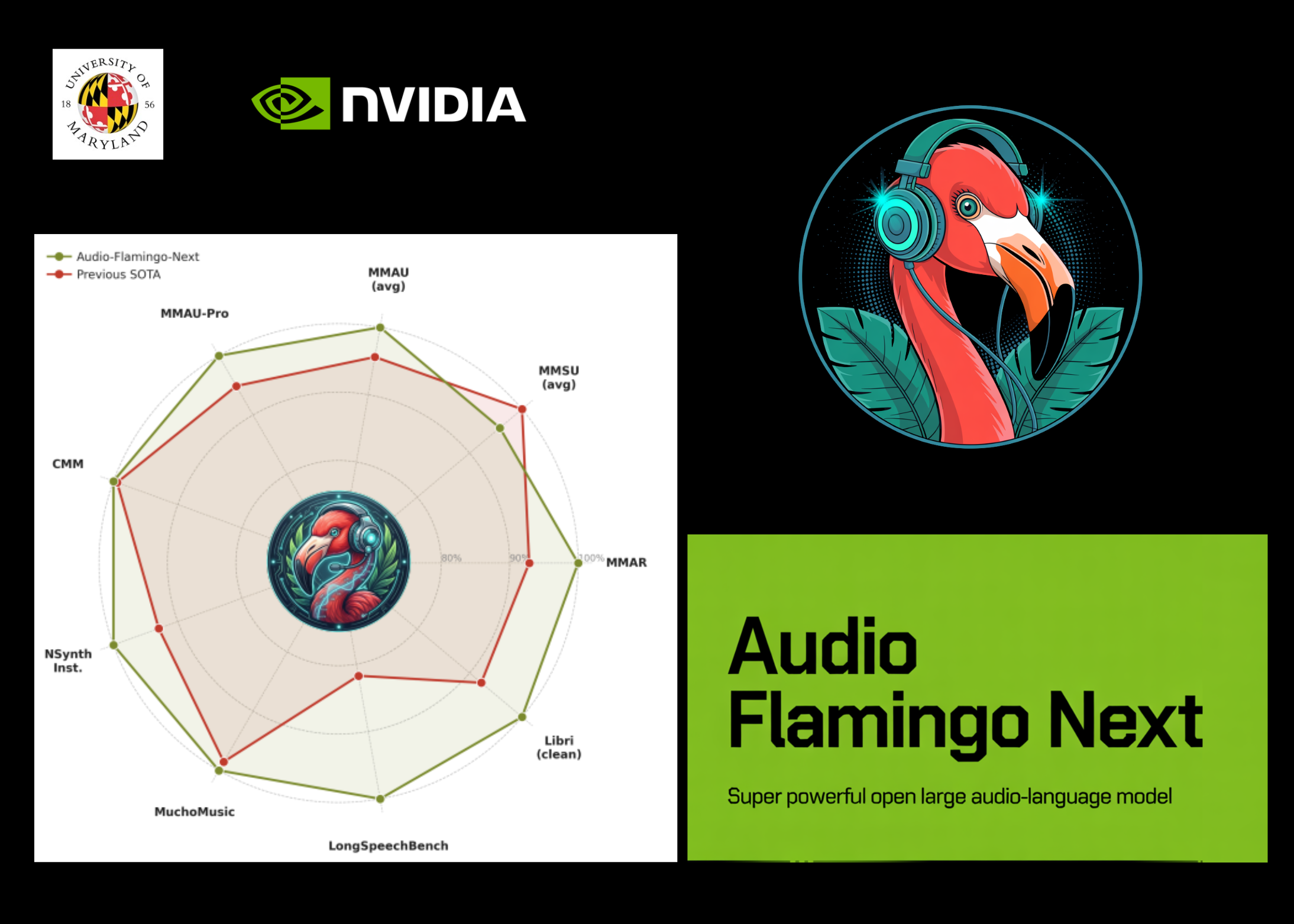

Understanding audio has always been the multimodal frontier that lags behind vision. While image-language models have rapidly scaled toward real-world deployment, building open models that robustly reason over speech, environmental sounds, and music — especially at length — has remained quite hard. NVIDIA and University of Maryland researchers have now taken a direct swing at that gap by releasing Audio Flamingo Next (AF-Next), the most capable model in the Audio Flamingo series and a fully open Large Audio-Language Model (LALM) trained on internet-scale audio data.

Audio Flamingo Next (AF-Next) comes in three specialized variants for different use cases: AF-Next-Instruct for general question answering, AF-Next-Think for advanced multi-step reasoning, and AF-Next-Captioner for detailed audio captioning. A Large Audio-Language Model (LALM) pairs an audio encoder with a decoder-only language model to enable question answering, captioning, transcription, and reasoning directly over audio inputs.

AF-Next is built around four main components: the AF-Whisper audio encoder, an audio adaptor, the LLM backbone, and a TTS module for voice-to-voice interaction. The AF-Whisper is a custom Whisper-based encoder further pre-trained on a larger and more diverse corpus, including multilingual speech and multi-talker ASR data, which processes audio input into a log mel-spectrogram.

A key aspect of AF-Next is the Temporal Audio Chain-of-Thought method, which allows the model to anchor each intermediate reasoning step to a timestamp in the audio, encouraging accurate evidence aggregation and reducing hallucination over long recordings. To train this capability, the research team created the AF-Think-Time dataset, consisting of question-answer-thinking-chain triplets curated from challenging audio sources.

The final training dataset comprises approximately 108 million samples and around 1 million hours of audio, drawn from both existing publicly released datasets and raw audio collected from the open internet. Training followed a four-stage curriculum, each with distinct data mixtures and context lengths, resulting in the release of models like AF-Next-Captioner, AF-Next-Instruct, and AF-Next-Think, tailored for various tasks and real-world applications.

Building a Multi-Agent Data Analysis System with Google ADK

Scotiabank launches AI framework to optimize operations

Related articles

Google launches native Gemini app for Mac

Google launches a native Gemini app for Mac, enabling users to get instant help.

Anthropic's redesigned Claude Code app and new business features

Anthropic has launched a redesigned Claude Code app and Routines feature, transforming the development approach.

Google Introduces Gemini 3.1 Flash TTS with Enhanced Speech and Control

Google announced Gemini 3.1 Flash TTS with enhanced speech and control.