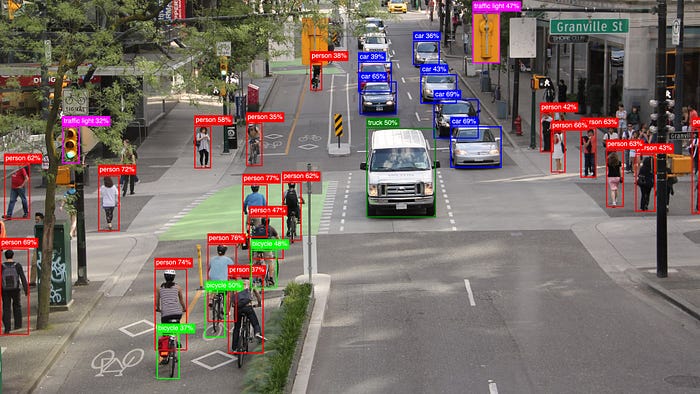

Exploring the Evolution of YOLO in Computer Vision

Why is everyone obsessed with YOLO? And I’m not talking about the 2012 mantra “You Only Live Once.” For years, computers struggled to “see” the world. Object detection, the task of finding and identifying objects in images, was slow and complex. Traditional models used a multi-step process: they scanned an image, proposed regions, and then classified those regions. This was accurate but painfully slow.

This article traces the evolution of the YOLO (You Only Look Once) object detection model from YOLOv1 through to the latest YOLO26. It covers key innovations, including real-time detection, better handling of small objects, and specialized modules aimed at improving performance across various applications, and shows how these advances can be applied in practical scenarios.
