Maximize AI Factory Efficiency to Boost Revenue

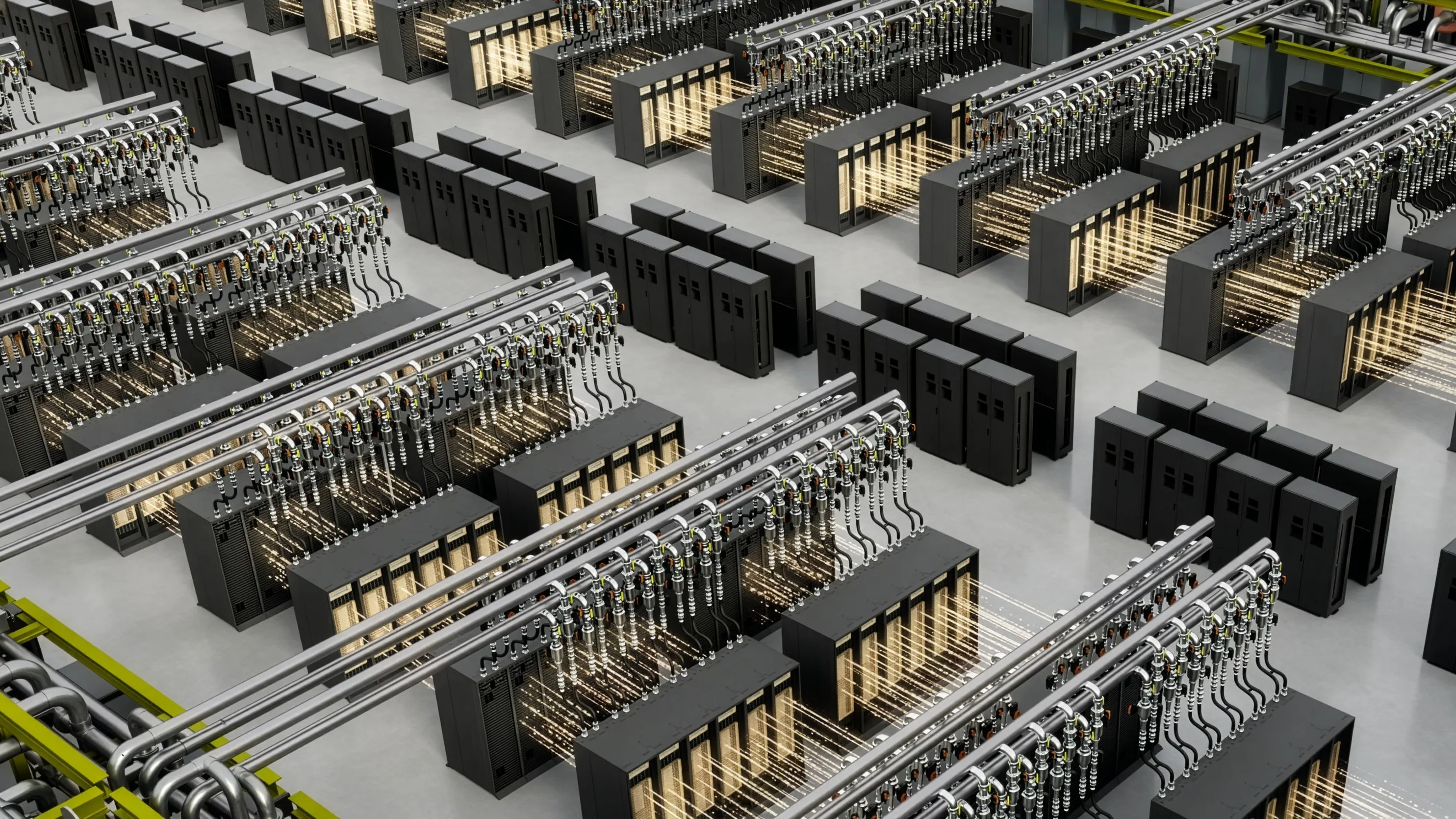

In the AI era, power is the ultimate constraint, and every AI factory operates within a hard power limit. This makes performance per watt, the rate at which power is converted into revenue-generating intelligence, the defining metric for modern AI infrastructure. AI data centers now operate as token factories tied directly to the energy ecosystem, where access to land, power, and infrastructure determines deployment, and efficiency determines output. Increasing revenue within a fixed power envelope therefore depends on maximizing intelligence per watt across AI infrastructure and across the five-layer AI cake ecosystem. This article walks through how NVIDIA architectures, systems, and AI factory software maximize performance per watt at every layer of the stack, and how those efficiency gains translate into higher token throughput and revenue per megawatt.
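The link between throughput per megawatt and revenue can be made concrete with a little arithmetic. The sketch below is a simplified illustration, not an NVIDIA model: the function name, the 100 MW envelope, the throughput figures, and the token price are all hypothetical values chosen for the example, and it assumes the price per token stays constant as efficiency improves.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def annual_token_revenue(power_mw, tokens_per_sec_per_mw,
                         usd_per_million_tokens, utilization=1.0):
    """Estimate yearly revenue for a factory with a fixed power envelope.

    All inputs are illustrative assumptions:
      power_mw               -- power available to the factory, in megawatts
      tokens_per_sec_per_mw  -- sustained token throughput per megawatt
      usd_per_million_tokens -- price charged per one million tokens
      utilization            -- fraction of time the factory serves tokens
    """
    tokens_per_year = (power_mw * tokens_per_sec_per_mw
                       * utilization * SECONDS_PER_YEAR)
    return tokens_per_year / 1_000_000 * usd_per_million_tokens


# Hypothetical 100 MW factory at a hypothetical $2 per million tokens.
baseline = annual_token_revenue(100, 1_000_000, 2.0)
improved = annual_token_revenue(100, 2_000_000, 2.0)  # 2x tokens per MW

print(f"baseline: ${baseline:,.0f} per year")
print(f"improved: ${improved:,.0f} per year")
print(f"revenue ratio: {improved / baseline:.1f}x")
```

Because the power envelope is fixed, revenue in this model scales linearly with tokens per second per megawatt. In practice, token prices tend to fall as efficiency rises, so the realized revenue gain may be smaller than the throughput gain.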

NVIDIA architectures and platforms are engineered to increase the amount of intelligence produced per watt with each generation. Across six architecture generations, NVIDIA has improved inference throughput per megawatt by 1,000,000x. To put this in perspective, if the average fuel efficiency of a car had improved as swiftly as chips over a similar time period, one gallon of gas would suffice for a trip to the moon and back.

NVIDIA Hopper introduced many architectural innovations that significantly increased energy efficiency over the prior generation. Key to these gains is the Hopper Transformer Engine, which combines fourth-generation Tensor Core technology with FP8 acceleration and software to dramatically increase performance per watt. NVIDIA Blackwell built on this foundation with improvements across high-bandwidth memory (HBM), the NVIDIA NVLink switch and fabric, and NVFP4-enabled Tensor Cores, further increasing throughput per watt.

Recent SemiAnalysis InferenceX data shows that NVIDIA software optimizations and NVIDIA Blackwell Ultra GB300 NVL72 systems deliver up to 50x higher throughput per megawatt and 35x lower token cost than Hopper for DeepSeek-R1. The NVIDIA Vera Rubin platform boosts efficiency further. Rubin GPUs, Vera CPUs, NVLink 6, and full-rack thermals are co-designed as a single AI factory platform. Notably, the NVIDIA Vera CPU delivers 2x the efficiency and 50% higher performance compared to traditional CPUs. This end-to-end approach enables up to 10x higher inference throughput per megawatt and about 10x lower token cost versus Blackwell for Kimi K2 (32K/8K).

These efficiency gains are evident in AI workloads and are also reflected in broader measures of compute performance. The HPC and supercomputing community uses the Green500 benchmark to measure high-precision (FP64) efficiency, and NVIDIA-accelerated systems lead the list, with nine of the top ten systems accelerated by NVIDIA technologies. Achieving efficiency gains of this size across architecture generations requires designing efficiency into every layer of the stack. NVIDIA approaches this as an extreme co-design problem, optimizing from chip design and manufacturing, through system-level innovations like liquid cooling, to AI factory orchestration.

Efficiency begins before silicon reaches the AI factory. NVIDIA is optimizing the manufacturing pipeline itself to deliver more energy-efficient chips, faster. For example, the NVIDIA cuLitho library for accelerated computational lithography re‑implements the core primitives of computational lithography on GPUs. It accelerates mask synthesis by up to 70x and allows a few hundred NVIDIA DGX-class systems to replace tens of thousands of CPU servers. In practice, this means moving from two-week photomask cycles to overnight runs, using about one-ninth the power and one-eighth the physical footprint, while enabling advanced techniques like inverse lithography and curvilinear masks.
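As a quick sanity check on the figures above, the "two weeks to overnight" claim follows directly from the quoted speedup. The snippet below is plain arithmetic, not a cuLitho API call. The helper name is hypothetical, and it assumes the full "up to 70x" speedup applies to the entire two-week cycle, which is a best case.

```python
def accelerated_cycle_hours(baseline_days, speedup):
    """Return the cycle time in hours after applying a uniform speedup.

    Assumes the speedup applies to the whole cycle (a best-case simplification).
    """
    return baseline_days * 24 / speedup


# Two-week photomask cycle with the quoted best-case 70x acceleration.
hours = accelerated_cycle_hours(baseline_days=14, speedup=70)
print(f"accelerated cycle: {hours:.1f} hours")
```

Under these assumptions the cycle shrinks from 336 hours to under five hours, which is consistent with an overnight run even if only part of the peak speedup is realized.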
