NVIDIA Accelerates Gemma 4 for Local Agentic AI

Open models are driving a new wave of on-device AI, extending innovation beyond the cloud to everyday devices. As these models advance, their value increasingly depends on access to local, real-time context that can turn meaningful insights into action. Designed for this shift, Google’s latest additions to the Gemma 4 family introduce a class of small, fast and omni-capable models built for efficient local execution across a wide range of devices.

Google and NVIDIA have collaborated to optimize Gemma 4 for NVIDIA GPUs, enabling efficient performance on systems ranging from data center deployments to NVIDIA RTX-powered PCs and workstations, the NVIDIA DGX Spark personal AI supercomputer and NVIDIA Jetson Orin Nano edge AI modules. The latest additions to the Gemma 4 family come in E2B, E4B, 26B and 31B variants, scaling from edge devices to high-performance GPUs.

This new generation of compact models handles a range of tasks, including complex problem-solving, code generation and debugging for developer workflows, and native structured tool use. The E2B and E4B models are built for ultra-efficient, low-latency inference at the edge and can run completely offline on many devices, including Jetson Orin Nano modules. All configurations were measured using Q4_K_M quantization.

The 26B and 31B models are designed for high-performance reasoning and developer-centric workflows, making them well suited for agentic AI. Optimized to deliver accessible, state-of-the-art reasoning, these models run efficiently on NVIDIA RTX GPUs and DGX Spark, powering development environments, coding assistants and agent-driven workflows.

As local agentic AI continues to gain momentum, applications like OpenClaw are enabling always-on AI assistants on RTX PCs, workstations, and DGX Spark. The latest Gemma 4 models are compatible with OpenClaw, allowing users to build capable local agents that draw context from personal files, applications, and workflows to automate tasks.

Microsoft Unveils Three New AI Models to Compete

Achieving Single-Digit Microsecond Latency for Financial Markets

Related articles

Google adds AI features to Chrome for saving workflows

Google adds a new Skills feature to Chrome for saving AI prompts.

Google launches Gemini's Personal Intelligence feature in India

Google launches Gemini's Personal Intelligence feature in India, allowing users to receive personalized answers.

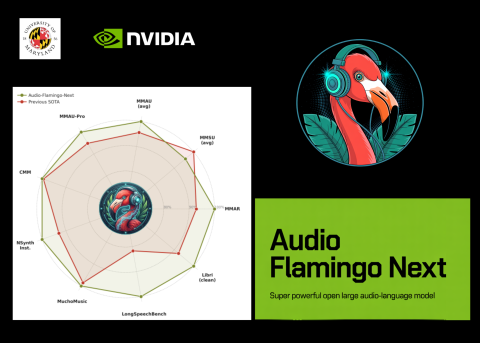

NVIDIA and University of Maryland Unveil Audio Flamingo Next

NVIDIA and University of Maryland unveiled Audio Flamingo Next, a powerful audio-language model for processing speech and sounds.