Optimizing Context for AI Agents: A Deep Dive

Optimizing context is a crucial task for AI agents because it directly affects their performance. Real-world failures often stem not from model capabilities but from how context is constructed, transmitted, and maintained. There is no one-time fix: the methods require ongoing exploration and adaptation. In my experience building multi-agent systems, performance depends much more on how precisely the context is shaped than on how much of it there is.

Context engineering is the art of providing the right information and tools for task completion. Effective context engineering means finding the smallest set of high-signal tokens that maximizes the probability of the desired outcome. In practice, this comes down to four steps: offloading information to external systems, retrieving data dynamically, isolating context, and reducing history while keeping the information needed for future actions.
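To make these steps concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: `ContextBuilder` and its methods are hypothetical names, the "external system" is a plain dictionary, retrieval is naive keyword matching, and history reduction is simple truncation, which a real agent would likely replace with summarization so that needed information survives.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBuilder:
    """Hypothetical helper illustrating the steps above; not a real library API."""

    store: dict[str, str] = field(default_factory=dict)  # stand-in for an external system
    history: list[str] = field(default_factory=list)     # running log of agent steps

    def offload(self, key: str, payload: str) -> str:
        """Offload: keep bulky output outside the prompt, return a short reference."""
        self.store[key] = payload
        return f"[stored:{key}]"

    def retrieve(self, query: str, limit: int = 2) -> list[str]:
        """Retrieve: pull in only stored entries that share a word with the query."""
        words = set(query.lower().split())
        hits = [text for text in self.store.values()
                if words & set(text.lower().split())]
        return hits[:limit]

    def reduce(self, keep_last: int = 3) -> list[str]:
        """Reduce: keep only the most recent steps (naive truncation)."""
        return self.history[-keep_last:]

    def build(self, task: str) -> str:
        """Isolate: the prompt holds only this task, relevant data, and recent history."""
        parts = [f"Task: {task}"]
        parts += [f"Data: {hit}" for hit in self.retrieve(task)]
        parts += [f"History: {step}" for step in self.reduce()]
        return "\n".join(parts)


builder = ContextBuilder()
ref = builder.offload("logs", "deploy failed on node7 with timeout")
builder.offload("notes", "quarterly budget spreadsheet")
builder.history.extend(["step1", "step2", "step3", "step4"])
prompt = builder.build("investigate deploy timeout")
```

In this example the prompt includes the deployment log, because it shares words with the task, but leaves out the unrelated budget note and the oldest history entry. The point is the shape of the pipeline rather than the specific heuristics.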

One common failure mode is context pollution, where excessive or conflicting information distracts the model. Another is context rot, where a model's performance degrades as the context window fills, even while it stays within the window's limit. Rot occurs because the model has a limited ability to retain information and to allocate attention across tokens, so quality declines as more tokens compete for that attention.

Context compaction addresses context rot: the model summarizes the contents of its context window and starts a new window seeded with that summary. This is particularly useful for long-running tasks. The difficulty lies in deciding which information should remain. It is essential to preserve the facts that will influence future actions; otherwise, the summary is useless to the agent.
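A rough sketch of compaction might look like the following. The function name `compact`, the `pinned` list of facts, and the character-count budget are all assumptions made for illustration; in a real agent `summarize` would be a model call and the budget would be measured in tokens. Carrying pinned facts over verbatim is one possible way to make sure action-relevant details survive the summary, not the only one.

```python
from typing import Callable


def compact(
    messages: list[str],
    pinned: list[str],
    summarize: Callable[[list[str]], str],
    max_chars: int,
) -> list[str]:
    """Return a fresh context once the (character-based) budget is exceeded.

    `pinned` holds facts that must influence future actions; they are copied
    verbatim so they cannot be lost in the summary.
    """
    if sum(len(m) for m in messages) <= max_chars:
        return messages  # still within budget, nothing to do

    summary = summarize(messages)
    return [f"Summary of earlier work: {summary}"] + [f"Fact: {f}" for f in pinned]


def fake_summarize(msgs: list[str]) -> str:
    """Stand-in for a model call, used only to keep the example runnable."""
    return f"{len(msgs)} messages condensed"


long_history = ["a" * 50, "b" * 50, "c" * 50]
new_window = compact(long_history, ["API key rotates daily"], fake_summarize, max_chars=100)
```

Here the 150 characters of history exceed the budget of 100, so the new window contains only the summary line and the pinned fact.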

A model is not the same as an agent. A critical part of an agent is how context is assembled and maintained, including the rules that govern which information is preserved between steps. Many so-called 'model failures' are actually failures of the surrounding system, which either could not retain the necessary state or repeated work because it had no record of previous errors.
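As an illustration of state that survives between steps, the sketch below keeps a record of completed and failed actions outside the model and renders it back into the context. `AgentState` and its methods are hypothetical names, and treating each action as a plain string key is a simplifying assumption.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    """Hypothetical per-task state kept by the system, not by the model."""

    completed: dict[str, str] = field(default_factory=dict)  # action -> result
    failures: dict[str, str] = field(default_factory=dict)   # action -> error

    def record(self, action: str, ok: bool, detail: str) -> None:
        """Store the outcome of a step so later steps can see it."""
        (self.completed if ok else self.failures)[action] = detail

    def should_attempt(self, action: str) -> bool:
        """Skip work that is already done or has already failed unchanged."""
        return action not in self.completed and action not in self.failures

    def to_context(self) -> str:
        """Render the state as lines to include in the next prompt."""
        lines = [f"DONE {a}: {r}" for a, r in self.completed.items()]
        lines += [f"FAILED {a}: {e} (do not retry unchanged)"
                  for a, e in self.failures.items()]
        return "\n".join(lines)


state = AgentState()
state.record("fetch_report", ok=False, detail="HTTP 404")
state.record("parse_config", ok=True, detail="12 keys")
```

With this record in place, `state.should_attempt("fetch_report")` returns `False`, so the agent does not repeat a step that already failed, and `state.to_context()` gives the model an explicit account of what happened earlier.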

Boomi identifies data activation as key to successful AI deployment in 2026

AI Investments: Private Wealth Bypasses Venture Capital Firms

Related articles

Google adds AI features to Chrome for saving workflows

Google adds a new Skills feature to Chrome for saving AI prompts.

Google launches Gemini's Personal Intelligence feature in India

Google launches Gemini's Personal Intelligence feature in India, allowing users to receive personalized answers.

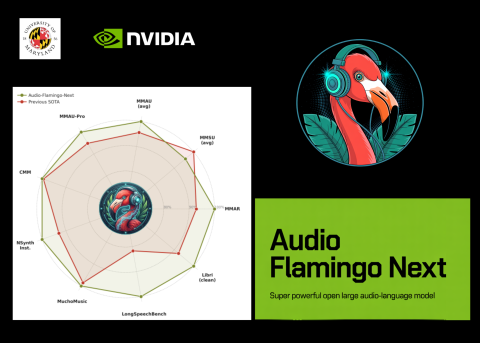

NVIDIA and University of Maryland Unveil Audio Flamingo Next

NVIDIA and University of Maryland unveiled Audio Flamingo Next, a powerful audio-language model for processing speech and sounds.