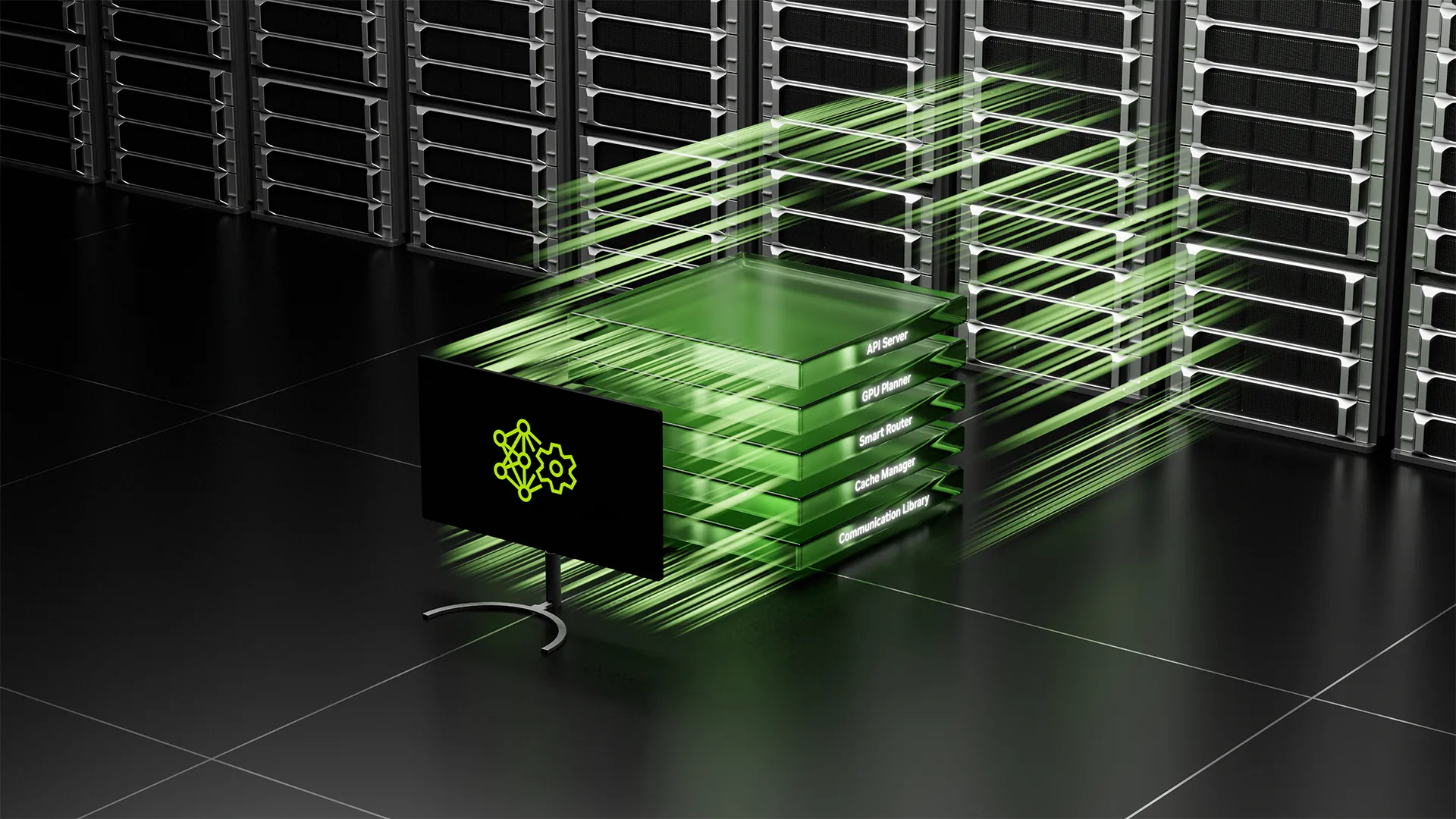

Deploying Disaggregated LLM Inference Workloads on Kubernetes

As large language model (LLM) inference workloads grow in complexity, traditional monolithic serving processes start to hit their limits. The prefill and decode stages have fundamentally different compute profiles, yet traditional deployments force them to run on the same hardware, leading to underutilized GPUs and inflexible scaling. Disaggregated serving addresses this by splitting the inference pipeline into distinct stages such as prefill, decode, and routing, each running as an independent service that can be resourced and scaled on its own terms.

This article explores how disaggregated inference is deployed on Kubernetes, examines various ecosystem solutions and their execution on a cluster, and evaluates what they provide out of the box. Before diving into Kubernetes manifests, it's helpful to understand the two inference deployment modes for LLMs: in aggregated serving, a single process (or tightly coupled group of processes) handles the entire inference lifecycle from input to output. Disaggregated serving separates the pipeline into distinct stages that operate as independent services.

In a traditional aggregated setup, a single model server (or coordinated group of servers in a parallel configuration) handles the full request lifecycle. A user prompt comes in, the server tokenizes it, runs prefill to build context, generates output tokens autoregressively (decodes), and returns the response. Everything happens in a single process or tightly coupled pod group. This is conceptually simple and works well for many use cases, but it leads to alternating between two fundamentally different workloads on the hardware.

Disaggregated architectures separate these stages into distinct services: prefill workers process the input prompt, which is compute-heavy. You want to maximize your GPUs for high throughput and can parallelize aggressively. Decode workers generate output tokens one at a time, which is memory-bandwidth-bound due to the autoregressive nature of LLMs. The router manages incoming requests, handles Key-Value (KV) cache routing between prefill and decode stages, and balances the load of requests across your workers.

Why disaggregate? Three reasons stand out: different resource and optimization profiles per stage, independent scaling, and better GPU utilization. By disaggregating, you can match GPU resources, model sharding techniques, and batch sizes to each stage's needs rather than compromise on a single approach. Independent scaling allows you to respond to actual demand, while separating stages lets each saturate its target resource more effectively.

Deploying a multi-pod inference workload (either model-parallel aggregated models or disaggregated models) is only half the story. How the scheduler places pods across the cluster directly impacts performance. Understanding how to orchestrate it becomes key to achieving high multi-pod inference performance on Kubernetes. Three scheduling capabilities matter most: gang scheduling, hierarchical gang scheduling, and topology-aware placement. These three capabilities determine how an AI scheduler, such as KAI Scheduler, places pods based on the application's scheduling constraints.

Build a Zero-Trust Architecture for Confidential AI Factories

Build Deep Agents for Enterprise Search with NVIDIA AI-Q

Related articles

Google launches Gemini's Personal Intelligence feature in India

Google launches Gemini's Personal Intelligence feature in India, allowing users to receive personalized answers.

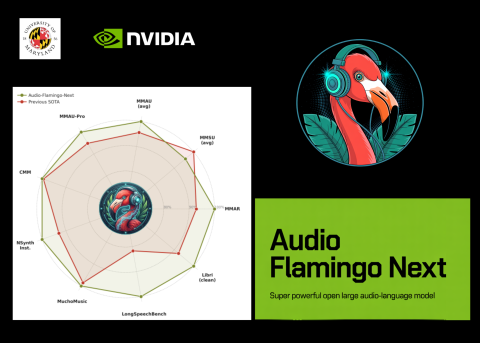

NVIDIA and University of Maryland Unveil Audio Flamingo Next

NVIDIA and University of Maryland unveiled Audio Flamingo Next, a powerful audio-language model for processing speech and sounds.

Users report performance degradation of Anthropic's Claude models

Users report performance degradation of Claude models by Anthropic, sparking discussions about product quality.