Creating a Tiny Computer Inside a Transformer for Program Execution

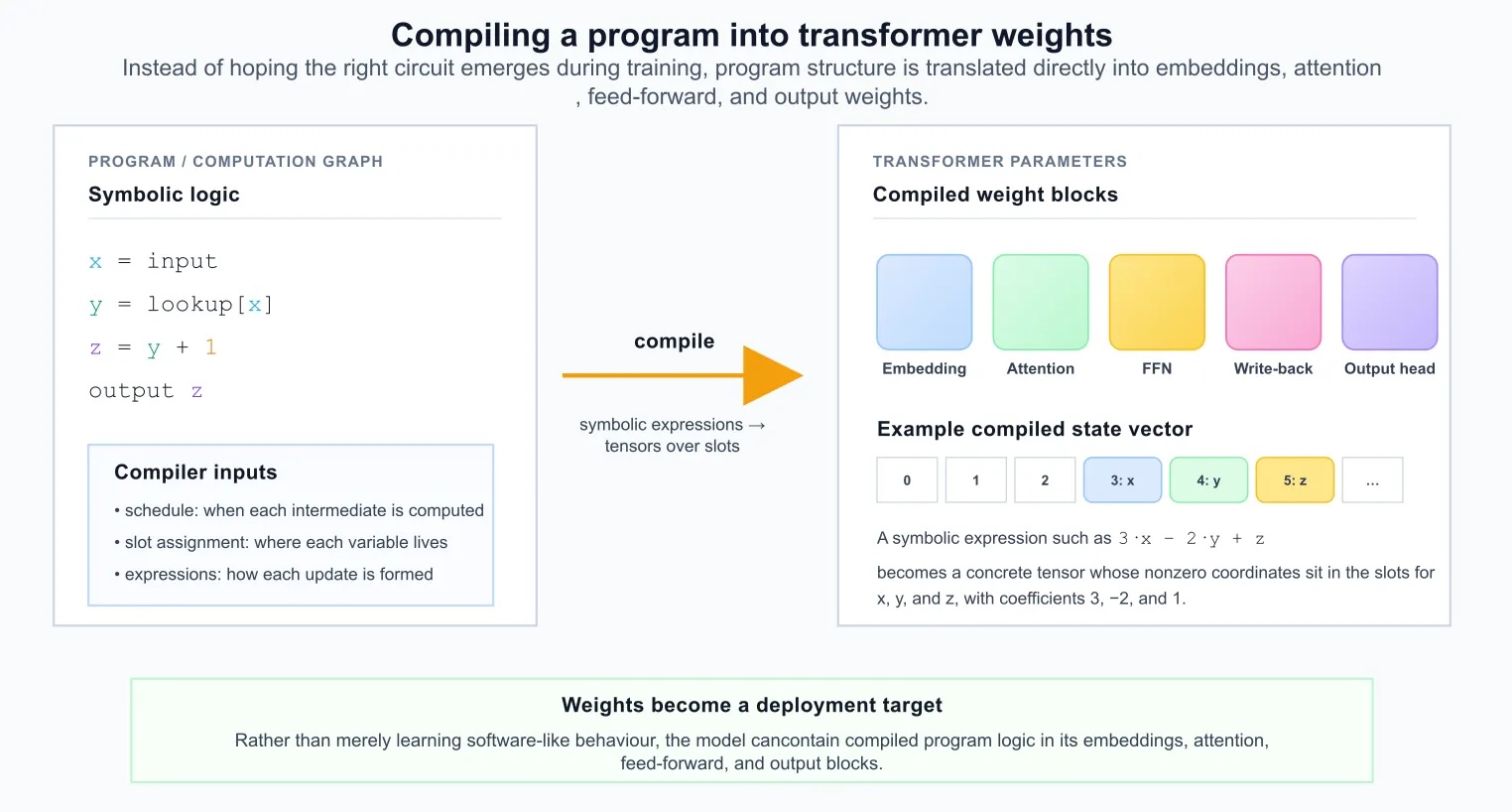

A recent project shows how to build a tiny computer inside a transformer by compiling a simple program directly into the model's weights. Rather than learning the weights from data, the approach constructs them analytically so that the model executes a computation graph directly.

In this approach, the transformer is treated as a programmable machine: a schedule specifies which intermediate quantities are computed at each step. Hidden dimensions are assigned to variables, much like registers in a tiny computer, and attention is wired to perform lookup and routing operations. The result resembles a small compiled computer built from attention blocks and linear projections.
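As a rough illustration of how attention can be wired to act as a lookup, here is a minimal sketch in plain Python. It is not the project's actual construction: the function `attention_lookup`, the `sharpness` parameter, and the table values are all hypothetical. The idea is that one-hot keys and a strongly scaled one-hot query make the softmax weights collapse onto a single position, so the attention output is almost exactly one table entry.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a list of floats.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def one_hot(i, n):
    return [1.0 if j == i else 0.0 for j in range(n)]

def attention_lookup(query_index, table, sharpness=50.0):
    # Hypothetical hand-set weights: keys are one-hot position codes,
    # and the query is the one-hot code of the wanted position, scaled up
    # so that softmax puts nearly all weight on the matching key.
    n = len(table)
    query = [sharpness * x for x in one_hot(query_index, n)]
    keys = [one_hot(i, n) for i in range(n)]
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = softmax(scores)
    # Values are the table entries; the output is their weighted sum.
    return sum(w * v for w, v in zip(weights, table))

table = [7.0, 3.0, 9.0, 4.0]        # illustrative data only
result = attention_lookup(2, table)  # approximately table[2]
```

The lookup is only approximately exact: a larger `sharpness` pushes the softmax closer to a hard selection, which is one plausible way a hand-built model could get deterministic behavior out of a soft mechanism.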

A key aspect of this work is an internal deterministic mode that lets the model perform precise computations without leaving its execution loop. In one regime the model behaves like a flexible language system; in the other, it functions as a compiled machine that reliably executes a fixed computation graph.

Compared with Percepta's work, which also focuses on executing programs inside transformers, this approach produces a more specialized structure. Instead of embedding an interpreter in the weights, it compiles the target program directly into them. That makes the model less general, but simpler and more transparent as a way to understand deterministic computation.

The author illustrates the approach with a small program that combines lookup operations, local computations, and output. At each step, the program updates its state and emits a result.
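To make the lookup, compute, and emit cycle concrete, the sketch below runs a toy program over a small register vector. It is an assumed example, not the author's program: the register names (`ptr`, `acc`, `tmp`, `one`), the matrix `W`, and the table values are invented for illustration. The local computation is a fixed, hand-written linear map, and the lookup is a plain list index standing in for an attention-based lookup.

```python
# Hypothetical register layout: each variable gets one slot in the state vector.
REGISTERS = {"ptr": 0, "acc": 1, "tmp": 2, "one": 3}

# Hand-written (not trained) linear update, one row per output register:
#   ptr <- ptr + one    advance the read pointer
#   acc <- acc + tmp    accumulate the value that was looked up
#   tmp <- 0            clear the scratch register
#   one <- one          keep the constant
W = [
    [1.0, 0.0, 0.0, 1.0],
    [0.0, 1.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]

def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def run(table, steps):
    state = [0.0, 0.0, 0.0, 1.0]  # ptr=0, acc=0, tmp=0, one=1
    outputs = []
    for _ in range(steps):
        # Lookup: write table[ptr] into tmp (stand-in for an attention lookup).
        state[REGISTERS["tmp"]] = table[int(state[REGISTERS["ptr"]])]
        # Local computation: apply the fixed linear map to all registers.
        state = matvec(W, state)
        # Output: emit the accumulator after this step.
        outputs.append(state[REGISTERS["acc"]])
    return outputs

outputs = run([7.0, 3.0, 9.0, 4.0], steps=4)  # running sums of the table
```

Because every update is a fixed matrix and the schedule is a fixed loop, the same inputs always produce the same outputs, which is the deterministic behavior the compiled mode is meant to provide.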
