Building a Universal Long-Term Memory Layer for AI Agents

In this tutorial, we build a universal long-term memory layer for AI agents using Mem0, OpenAI models, and ChromaDB. We design a system that extracts structured memories from natural conversations, stores them semantically, retrieves them through semantic search, and integrates them directly into personalized agent responses. Rather than relying on simple chat history, we implement persistent, user-scoped memory with full CRUD control, semantic search, multi-user isolation, and custom configuration.

The end goal is a production-ready memory-augmented agent architecture that demonstrates how modern AI systems can reason with contextual continuity rather than operate statelessly. We begin by installing all required dependencies and securely configuring our OpenAI API key, then initialize the Mem0 Memory instance along with the OpenAI client and Rich console utilities.
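The setup step might look roughly like the sketch below. The package list in the comment, the helper names `ensure_api_key` and `build_clients`, and the choice to read the key from the `OPENAI_API_KEY` environment variable are assumptions made for illustration, not details confirmed by the tutorial.

```python
# Assumed install step (run once in a shell or notebook cell):
#   pip install mem0ai openai rich chromadb
import os
from getpass import getpass


def ensure_api_key(prompt=getpass):
    """Return the OpenAI API key, prompting only if it is not already set."""
    key = os.environ.get("OPENAI_API_KEY")
    if not key:
        # getpass hides the typed key so it never appears in notebook output.
        key = prompt("Enter your OpenAI API key: ")
        os.environ["OPENAI_API_KEY"] = key
    return key


def build_clients():
    """Create the Mem0 memory, the OpenAI client, and a Rich console.

    Imports are deferred so this module still loads before the
    third-party packages are installed.
    """
    from mem0 import Memory
    from openai import OpenAI
    from rich.console import Console

    ensure_api_key()
    return Memory(), OpenAI(), Console()
```

Calling `memory, client, console = build_clients()` once at the top of a notebook would then provide the three objects used in the rest of the walkthrough.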

We establish the foundation of our long-term memory system with the default configuration powered by ChromaDB and OpenAI embeddings. We simulate realistic multi-turn conversations and store them using Mem0’s automatic memory extraction pipeline, adding structured conversational data for a specific user and allowing the LLM to extract meaningful long-term facts.
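As a minimal sketch of this step, the block below spells out a configuration that names ChromaDB and OpenAI embeddings explicitly and passes a short conversation to Mem0. The user ID, collection name, storage path, model names, and conversation text are all made up for illustration, and `store_conversation` and `as_results` are hypothetical helpers. The shape of the value returned by `add` can differ between Mem0 versions, so the sketch normalizes it.

```python
USER_ID = "alice_demo"  # hypothetical user identifier

# Assumed configuration; collection name, path, and model names are illustrative.
MEM0_CONFIG = {
    "vector_store": {
        "provider": "chroma",
        "config": {"collection_name": "agent_memories", "path": "./chroma_db"},
    },
    "llm": {"provider": "openai", "config": {"model": "gpt-4o-mini"}},
    "embedder": {"provider": "openai", "config": {"model": "text-embedding-3-small"}},
}

# A made-up multi-turn conversation in the OpenAI chat message format.
CONVERSATION = [
    {"role": "user", "content": "Hi, I'm Alice. I work as a data engineer in Berlin."},
    {"role": "assistant", "content": "Nice to meet you, Alice! What are you working on?"},
    {"role": "user", "content": "Mostly Python pipelines. I'm vegetarian, by the way."},
]


def as_results(response):
    """Normalize Mem0 responses, which may be a list or a {'results': [...]} dict."""
    if isinstance(response, dict):
        return response.get("results", [])
    return list(response or [])


def store_conversation(memory, messages, user_id):
    """Send a conversation to Mem0 and return the extracted memory records."""
    return as_results(memory.add(messages, user_id=user_id))


def main():
    # Deferred import: needs mem0ai, chromadb, and a configured OpenAI API key.
    from mem0 import Memory

    memory = Memory.from_config(MEM0_CONFIG)
    for item in store_conversation(memory, CONVERSATION, USER_ID):
        print(item.get("event"), "->", item.get("memory"))
```

Running `main()` with a valid API key would print whatever facts the LLM chose to extract; the exact wording and number of memories is up to the model.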

We verify how many memories are created, confirming that semantic knowledge is successfully persisted. We perform semantic search queries to retrieve relevant memories using natural language, demonstrating how Mem0 ranks stored memories by similarity score and returns the most contextually aligned information.
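The verification and search steps could be sketched as follows, assuming a Mem0 version whose `get_all` and `search` methods accept a `user_id` keyword and return records with `memory` and `score` fields. The helper names and the display format are hypothetical. The sketch keeps hits in the order Mem0 returns them rather than re-sorting, since how a score should be interpreted can depend on the vector store.

```python
def as_results(response):
    """Normalize Mem0 responses, which may be a list or a {'results': [...]} dict."""
    if isinstance(response, dict):
        return response.get("results", [])
    return list(response or [])


def count_memories(memory, user_id):
    """Return how many memories are currently stored for one user."""
    return len(as_results(memory.get_all(user_id=user_id)))


def search_memories(memory, query, user_id, limit=3):
    """Return (score, text) pairs in the order Mem0 ranked them."""
    hits = as_results(memory.search(query, user_id=user_id, limit=limit))
    return [(hit.get("score"), hit.get("memory", "")) for hit in hits]


def format_hits(hits):
    """Render ranked hits as numbered lines, tolerating missing scores."""
    lines = []
    for rank, (score, text) in enumerate(hits, start=1):
        shown = "n/a" if score is None else f"{score:.3f}"
        lines.append(f"{rank}. [{shown}] {text}")
    return lines
```

A natural-language query such as `search_memories(memory, "What does the user eat?", "alice_demo")` would then return the memories Mem0 considers closest in meaning, not just those sharing keywords.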

We also perform CRUD operations by listing, updating, and validating stored memory entries. We then build a memory-aware chat in which the AI assistant draws on long-term memories about the user to provide context-aware, personalized responses.
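One way to picture the update step and the memory-aware chat is the sketch below. It assumes Mem0's `get_all`, `update`, `search`, and `add` methods and the OpenAI Chat Completions client; the helper names, the system prompt wording, the `gpt-4o-mini` model name, and the choice to write each exchange back into memory are illustrative assumptions rather than the tutorial's confirmed design.

```python
def as_results(response):
    """Normalize Mem0 responses, which may be a list or a {'results': [...]} dict."""
    if isinstance(response, dict):
        return response.get("results", [])
    return list(response or [])


def update_first_memory(memory, user_id, new_text):
    """Rewrite the first stored memory for a user; return its id, or None if empty."""
    entries = as_results(memory.get_all(user_id=user_id))
    if not entries:
        return None
    memory_id = entries[0]["id"]
    memory.update(memory_id=memory_id, data=new_text)
    return memory_id


def build_system_prompt(memories):
    """Fold retrieved memory texts into a system prompt."""
    if not memories:
        return "You are a helpful assistant."
    bullets = "\n".join(f"- {text}" for text in memories)
    return "You are a helpful assistant. Known facts about the user:\n" + bullets


def chat_with_memory(memory, client, user_id, user_message, model="gpt-4o-mini"):
    """Answer one message using memories relevant to it, then store the exchange."""
    hits = as_results(memory.search(user_message, user_id=user_id, limit=5))
    system_prompt = build_system_prompt([hit["memory"] for hit in hits])
    response = client.chat.completions.create(
        model=model,
        messages=[
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
    )
    reply = response.choices[0].message.content
    # Store the new exchange so later turns can build on it.
    memory.add(
        [
            {"role": "user", "content": user_message},
            {"role": "assistant", "content": reply},
        ],
        user_id=user_id,
    )
    return reply
```

Because every call is scoped by `user_id`, two users talking to the same assistant would each get prompts built only from their own memories.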

Meta Researchers Introduce Hyperagents for Self-Improving AI

Building Multi-Agent AI Systems with SmolAgents and Dynamic Orchestration

Related articles

OpenAI unveils GPT-Rosalind to accelerate life sciences research

OpenAI has introduced GPT-Rosalind, a model to accelerate life sciences research.

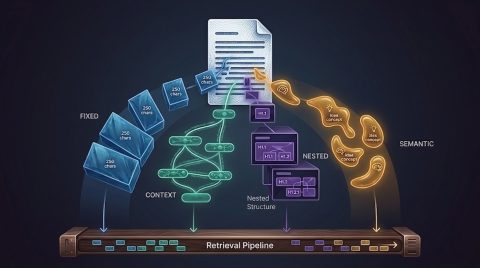

Error in RAG: How Incorrect Data Chunking Affects Outcomes

Incorrect data chunking can lead to system errors, reducing user trust.

Google launches new AI Mode for side-by-side web searching

Google has introduced a new AI Mode for side-by-side web searching.