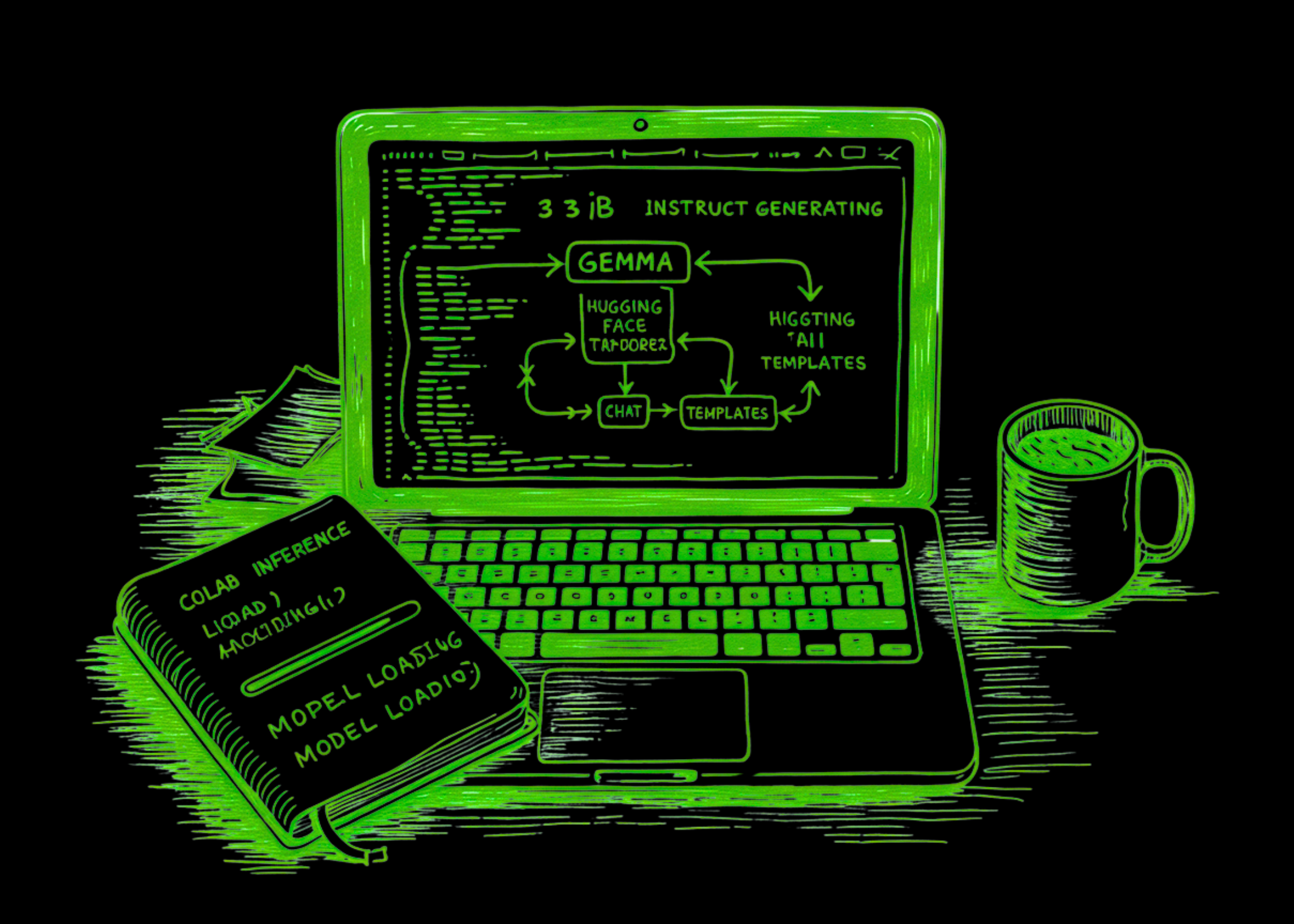

Build a Production-Ready Gemma 3 1B Instruct Workflow

This tutorial walks you through building and running a Colab workflow for Gemma 3 1B Instruct using Hugging Face Transformers and HF Tokens. We start by installing the required libraries, securely authenticating with our Hugging Face token, and loading the tokenizer and model onto the available device with the correct precision settings. From there, we create reusable generation utilities, format prompts in a chat-style structure, and test the model across multiple realistic tasks such as basic generation, structured JSON-style responses, prompt chaining, benchmarking, and deterministic summarization. This approach ensures we not only load Gemma but also interact with it meaningfully.

We set up the environment needed to run the tutorial smoothly in Google Colab. We install the required libraries, import all core dependencies, and securely authenticate with Hugging Face using our token. By the end of this part, we will have prepared the notebook to access the Gemma model and continue the workflow without manual setup issues.

Next, we configure the runtime by detecting whether we are using a GPU or a CPU and selecting the appropriate precision to load the model efficiently. We define the Gemma 3 1B Instruct model path and load both the tokenizer and the model from Hugging Face. At this stage, we complete the core model initialization, making the notebook ready to generate text.

We build reusable functions that format prompts into the expected chat structure and handle text generation from the model. This modular approach allows us to reuse the same function across different tasks in the notebook. After that, we run a first practical generation example to confirm that the model is working correctly and producing meaningful output.

We then push the model beyond simple prompting by testing structured output generation and prompt chaining. We ask Gemma to return a response in a defined JSON-like format and then use a follow-up instruction to transform an earlier response for a different audience. This helps us see how the model handles formatting constraints and multi-step refinement in a realistic workflow.

Transform AI with the Together AI Kernels Team

DeepL: 83% of Companies Lag in Adopting Language AI

Похожие статьи

Maximize AI Infrastructure Throughput with GPU Workload Consolidation

Optimize GPU usage in Kubernetes to enhance AI efficiency.

Accelerate Token Production in AI Factories with NVIDIA Mission Control

NVIDIA Mission Control 3.0 enhances token production in AI factories.

NVIDIA Sets New MLPerf Records with Co-Designed Solutions

NVIDIA sets new records in MLPerf, enhancing AI factory performance.