Introducing DSGym: A New Framework for Evaluating Data Science Agents

Current data science benchmarks suffer from incompatible evaluation interfaces, and many of their tasks can be solved without using the underlying data. We introduce DSGym, an integrated framework for evaluating and training data science agents in self-contained execution environments. Using DSGym, we trained a state-of-the-art open-source data science agent.

Data science serves as the computational engine of modern scientific discovery. However, evaluating and training LLM-based data science agents remains challenging, as existing benchmarks assess isolated skills in heterogeneous execution environments, complicating integration and fair comparisons. DSGym offers a unified platform that integrates diverse data science evaluation suites behind a single API with standardized abstractions for datasets, agents, and metrics.
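To make the idea of standardized abstractions for datasets, agents, and metrics concrete, here is a minimal sketch of an evaluation loop. It is not DSGym's actual API: the names `Agent`, `solve`, `exact_match`, `evaluate`, and `LookupAgent` are hypothetical and chosen only for illustration.

```python
from typing import Callable, Iterable, Protocol, Tuple


class Agent(Protocol):
    """Hypothetical agent interface: takes a query, returns an answer string."""

    def solve(self, query: str) -> str: ...


# A metric maps (prediction, reference) to a score in [0, 1].
Metric = Callable[[str, str], float]


def exact_match(prediction: str, reference: str) -> float:
    """Score 1.0 when the answers match after trimming and lowercasing."""
    return float(prediction.strip().lower() == reference.strip().lower())


def evaluate(agent: Agent, dataset: Iterable[Tuple[str, str]], metric: Metric) -> float:
    """Average the metric over all (query, reference) pairs in the dataset."""
    scores = [metric(agent.solve(query), reference) for query, reference in dataset]
    return sum(scores) / len(scores) if scores else 0.0


class LookupAgent:
    """Toy agent that answers from a fixed table (stands in for an LLM agent)."""

    def __init__(self, answers: dict) -> None:
        self.answers = answers

    def solve(self, query: str) -> str:
        return self.answers.get(query, "")


# Toy data, invented for this sketch only.
toy_dataset = [("What is 2 + 2?", "4"), ("Capital of France?", "Paris")]
agent = LookupAgent({"What is 2 + 2?": "4", "Capital of France?": "Lyon"})
score = evaluate(agent, toy_dataset, exact_match)
print(score)
```

Because the loop depends only on the three interfaces, any agent, dataset, or metric that follows them can be swapped in without changing `evaluate`, which is the kind of fair comparison a single API is meant to enable.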

DSGym not only unifies and refines existing benchmarks but also expands their scope with novel scientific analysis tasks (90 bioinformatics tasks from academic literature) and challenging end-to-end modeling competitions (92 Kaggle competitions). Additionally, DSGym provides trajectory generation and synthetic query pipelines for agent training, which we demonstrated by training a 4B model on 2,000 generated examples, achieving state-of-the-art performance among open-source models.

One of DSGym's main contributions is the abstraction of code execution complexity behind containers that can be allocated in real time for safe code execution. These containers come with pre-installed dependencies and data available for processing. DSGym provides a unified JSON interface for all benchmarks, where each task is expressed as data files, query prompts, evaluation metrics, and metadata. We strive to make the design modular and straightforward, making it easier for users to add new tasks, agent scaffolds, tools, and evaluation scripts.
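As an illustration of a task expressed as data files, a query prompt, an evaluation metric, and metadata, the sketch below loads and validates one JSON record. The field names (`data_files`, `query`, `metric`, `metadata`) and the sample values are assumptions made for this example; the actual DSGym schema may differ.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List

# Hypothetical key names; not taken from the DSGym codebase.
REQUIRED_KEYS = ("data_files", "query", "metric", "metadata")


@dataclass
class Task:
    data_files: List[str]          # paths available inside the container
    query: str                     # prompt given to the agent
    metric: str                    # name of the evaluation metric
    metadata: Dict[str, Any] = field(default_factory=dict)


def load_task(raw: str) -> Task:
    """Parse one JSON task record and reject records with missing keys."""
    record = json.loads(raw)
    missing = [key for key in REQUIRED_KEYS if key not in record]
    if missing:
        raise ValueError(f"task record is missing keys: {missing}")
    return Task(**{key: record[key] for key in REQUIRED_KEYS})


# Invented sample record, for illustration only.
example = json.dumps(
    {
        "data_files": ["data/train.csv"],
        "query": "What is the mean of column 'age'?",
        "metric": "exact_match",
        "metadata": {"track": "data_analysis"},
    }
)
task = load_task(example)
print(task.metric, task.metadata["track"])
```

Validating records at load time keeps a malformed task from failing later inside a container, where the error would be harder to trace back to the task definition.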

The tasks in DSGym fall into two primary tracks: Data Analysis (query answering via programmatic analysis) and Data Prediction (end-to-end ML pipeline development). In addition to integrating established benchmarks such as MLEBench and QRData, DSGym introduces original datasets that form two novel suites: DSBio and DSPredict. These suites cover a wide range of tasks, and DSGym's synthetic query generation makes it possible to use such tasks for training models as well as for evaluating them.

Our results show that training on this generated data is an effective way to improve model performance on data science tasks, even for small models. We also conducted an error analysis to understand why models fail on tasks; the patterns it revealed will inform further development of the framework.

Accelerate Attention with FlashAttention-3: New Capabilities and Performance

Together AI Enhances Fine-Tuning Service with Tool Support

Похожие статьи

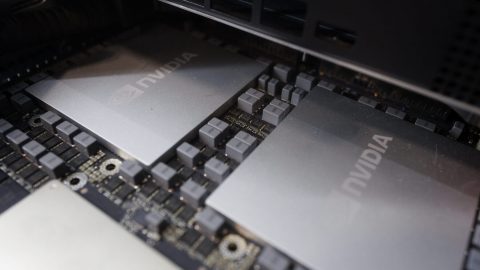

Maximize AI Infrastructure Throughput with GPU Workload Consolidation

Optimize GPU usage in Kubernetes to enhance AI efficiency.

Accelerate Token Production in AI Factories with NVIDIA Mission Control

NVIDIA Mission Control 3.0 enhances token production in AI factories.

NVIDIA Sets New MLPerf Records with Co-Designed Solutions

NVIDIA sets new records in MLPerf, enhancing AI factory performance.