Build a Model Optimization Pipeline with NVIDIA Model Optimizer

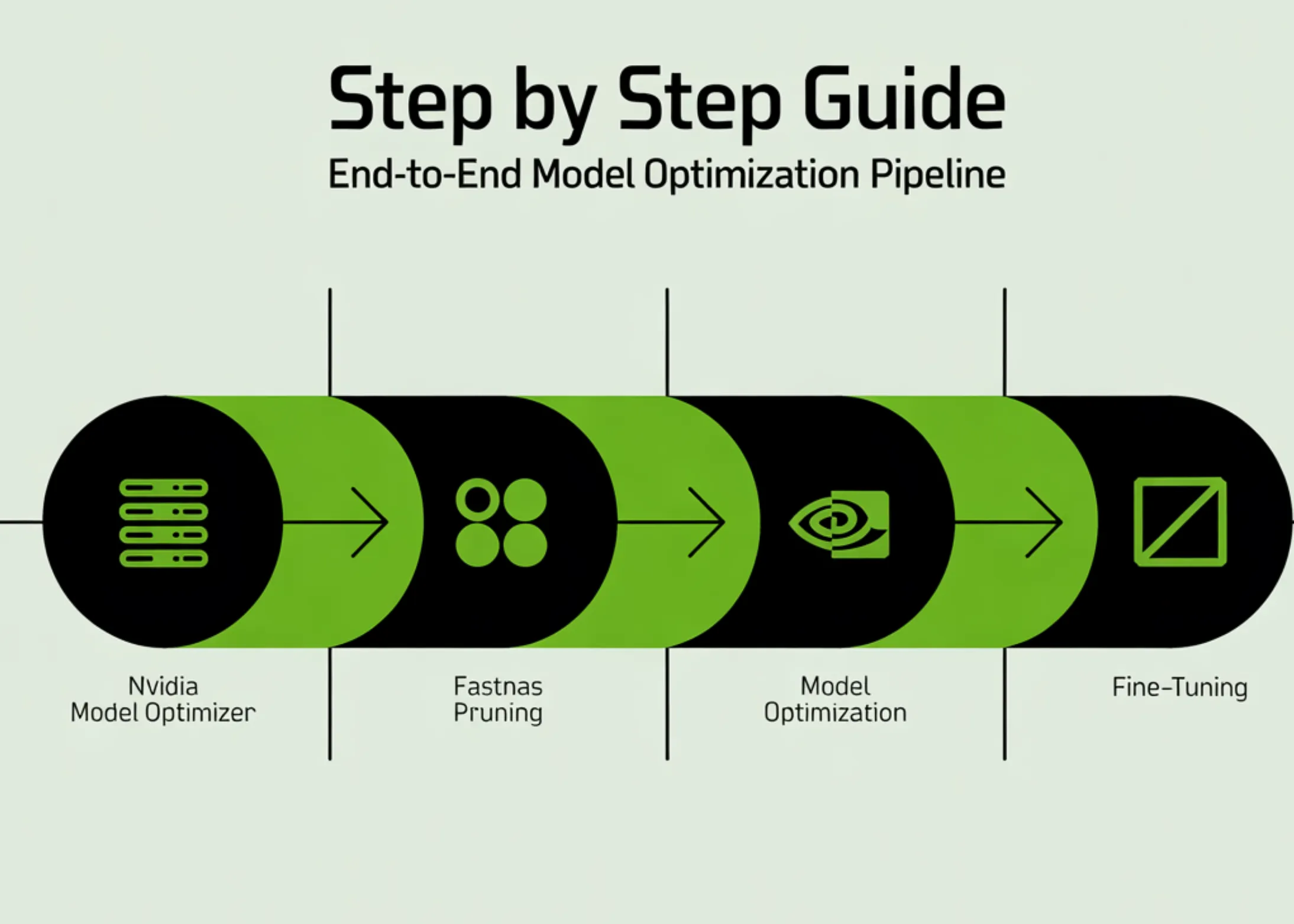

This tutorial demonstrates how to create a complete end-to-end optimization pipeline using NVIDIA Model Optimizer to train, prune, and fine-tune a deep learning model directly in Google Colab. We start by setting up the environment and preparing the CIFAR-10 dataset, then define a ResNet architecture and train it to establish a strong baseline. After that, we apply FastNAS pruning to systematically reduce the model's complexity under FLOPs constraints while preserving performance.

We also address real-world compatibility issues, restore the optimized subnet, and fine-tune it to recover accuracy. By the end, we have a fully functioning workflow that takes a model from training to deployment-ready optimization, all within a single streamlined setup.

We begin by installing the required dependencies and importing the necessary libraries. We initialize random seeds for reproducibility and configure the device to use a GPU if one is available. We also define the key runtime parameters (batch size, number of epochs, dataset subset sizes, and the FLOPs constraint) that control the overall experiment.
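The setup step described above can be sketched as follows. This is a minimal illustration, not the tutorial's actual code: the `RunConfig` class, the `set_seed` helper, and every default value are hypothetical names and numbers chosen for the example. NumPy and PyTorch are seeded only if they are installed, so the snippet also runs in a bare Python environment.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class RunConfig:
    """Runtime parameters for the experiment (all defaults are illustrative assumptions)."""
    seed: int = 123
    batch_size: int = 128
    baseline_epochs: int = 5       # epochs for the baseline training run
    finetune_epochs: int = 3       # epochs for fine-tuning the pruned subnet
    train_subset: int = 10_000     # number of training images to keep
    val_subset: int = 2_000        # number of validation images to keep
    flops_ratio: float = 0.6       # hypothetical target: at most 60% of baseline FLOPs


def set_seed(seed: int) -> str:
    """Seed the available random number generators and return the device name."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass  # NumPy not installed; nothing to seed
    try:
        import torch
    except ImportError:
        return "cpu"  # without PyTorch there is no GPU backend to select
    torch.manual_seed(seed)  # seeds the CPU generator and all CUDA devices
    return "cuda" if torch.cuda.is_available() else "cpu"


cfg = RunConfig()
device = set_seed(cfg.seed)
print(f"device={device}, batch_size={cfg.batch_size}, flops_ratio={cfg.flops_ratio}")
```

Keeping the parameters in one frozen dataclass makes it harder to change a value by accident halfway through a notebook session, which matters when the baseline and the pruned model are meant to be compared under identical settings.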

We build the data pipeline by preparing the CIFAR-10 training and validation sets with appropriate augmentations and normalization. To speed up experimentation, we work with reduced subsets of the dataset rather than the full data. We then create data loaders that handle batching and shuffling in a reproducible way.
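A data pipeline along these lines might look like the sketch below. It is an assumption-laden illustration rather than the tutorial's code: `subset_indices` and `build_loaders` are hypothetical helpers, the subset sizes and augmentations are example choices, and the normalization constants are the commonly quoted CIFAR-10 channel statistics. PyTorch and torchvision are imported inside `build_loaders` so that the index helper can be used on its own.

```python
import random


def subset_indices(dataset_size: int, subset_size: int, seed: int) -> list[int]:
    """Pick a reproducible random subset of sample indices (sorted, no repeats)."""
    rng = random.Random(seed)  # local generator, so global random state is untouched
    return sorted(rng.sample(range(dataset_size), min(subset_size, dataset_size)))


def build_loaders(root="./data", batch_size=128, train_subset=10_000,
                  val_subset=2_000, seed=123):
    """Return (train_loader, val_loader) over reduced CIFAR-10 subsets."""
    # Imported lazily so subset_indices stays usable without PyTorch installed.
    import torch
    from torch.utils.data import DataLoader, Subset
    from torchvision import datasets, transforms

    # Commonly quoted CIFAR-10 per-channel mean and standard deviation.
    mean, std = (0.4914, 0.4822, 0.4465), (0.2470, 0.2435, 0.2616)
    train_tf = transforms.Compose([
        transforms.RandomCrop(32, padding=4),   # augmentation: padded random crop
        transforms.RandomHorizontalFlip(),      # augmentation: random mirror
        transforms.ToTensor(),
        transforms.Normalize(mean, std),
    ])
    val_tf = transforms.Compose([transforms.ToTensor(), transforms.Normalize(mean, std)])

    train_full = datasets.CIFAR10(root, train=True, download=True, transform=train_tf)
    val_full = datasets.CIFAR10(root, train=False, download=True, transform=val_tf)
    train_set = Subset(train_full, subset_indices(len(train_full), train_subset, seed))
    val_set = Subset(val_full, subset_indices(len(val_full), val_subset, seed))

    # A seeded generator makes the shuffling order repeatable across runs.
    gen = torch.Generator().manual_seed(seed)
    train_loader = DataLoader(train_set, batch_size=batch_size, shuffle=True,
                              generator=gen, num_workers=2)
    val_loader = DataLoader(val_set, batch_size=batch_size, shuffle=False, num_workers=2)
    return train_loader, val_loader
```

Calling `train_loader, val_loader = build_loaders()` downloads CIFAR-10 on first use. Augmentation is applied only to the training set, so validation accuracy is measured on unmodified images.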

With this guide, readers can use NVIDIA Model Optimizer to train a baseline model, prune it under a FLOPs budget, and fine-tune the resulting subnet, producing a smaller model that is ready for deployment.

TII Unveils Falcon Perception: A New Transformer for Image Processing

Replaced Vector DBs with Google's Memory Agent for Obsidian Notes

Похожие статьи

Replaced Vector DBs with Google's Memory Agent for Obsidian Notes

I replaced vector databases with Google's memory agent for Obsidian notes, enhancing memory management.

Achieving Single-Digit Microsecond Latency for Financial Markets

NVIDIA achieved record single-digit microsecond latencies for LSTM models in financial markets.

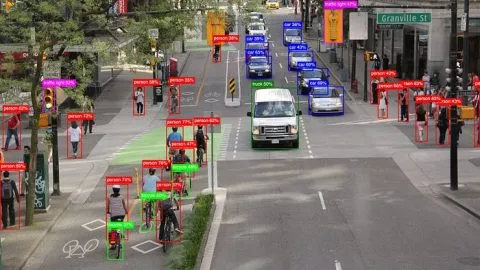

Exploring the Evolution of YOLO in Computer Vision

Explore the evolution of the YOLO model in computer vision and its key innovations.