How a Model 10,000× Smaller Can Outsmart ChatGPT

For the last decade, the entire AI industry has adhered to one unspoken convention: intelligence can only emerge at scale. We convinced ourselves that in order for models to truly mimic human reasoning, we needed larger and deeper networks. This led to stacking more transformer blocks, adding billions of parameters, and training across data centers that require megawatts of power. But does this race for bigger models blind us to a more efficient path? What if true intelligence is not related to the size of the model, but rather to how long it can reason? Can a tiny network, given the freedom to iterate on its own solution, outsmart a model thousands of times its size?

To understand why we need a new approach, we must first examine why current reasoning models such as GPT-4 and Claude still struggle with complex logic. These models are primarily trained on the Next-Token Prediction (NTP) objective: they process a prompt through their billions of parameters to predict the next token in a sequence. Even when they employ the “Chain-of-Thought” (CoT) method, they are still predicting one token at a time, which is not the same as thinking. This approach has two flaws.

The first is brittleness. Because the model generates its answer token by token, a single mistake early in the reasoning process can snowball into a completely different, and often incorrect, answer. The model cannot stop, backtrack, and correct its internal logic before responding. It must fully commit to the path it started on, often hallucinating confidently just to finish a sentence.

The second is that modern reasoning models rely on memorization rather than logical deduction. They appear to perform well on unseen tasks largely because they have likely encountered similar problems in their vast training data. When faced with a genuinely novel problem, such as those in the ARC-AGI benchmark, their massive parameter counts are of little help.
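The snowball effect behind the first flaw can be made concrete with a little arithmetic. The sketch below assumes, purely for illustration, that every generated step is correct with the same independent probability and that the model never backtracks; the function name and the 99% figure are hypothetical, not measurements of any real model.

```python
def chain_success_probability(per_step_accuracy: float, num_steps: int) -> float:
    """Chance that an entire chain is error-free.

    Assumes independent, identically likely errors and no ability to
    backtrack, which is a deliberate simplification.
    """
    return per_step_accuracy ** num_steps


# Illustrative numbers only: a hypothetical 99% per-step accuracy.
for steps in (10, 100, 1000):
    print(steps, chain_success_probability(0.99, steps))
```

Under these assumptions, a 100-step chain comes out fully correct only about 37% of the time, even though each individual step is right 99% of the time. Longer chains fare far worse.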

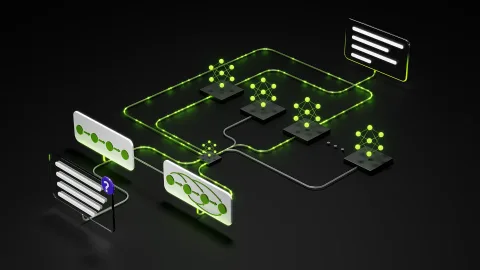

The Tiny Recursion Model (TRM) offers a new approach by turning the reasoning process into a compact, cyclical one. A traditional transformer makes a single feed-forward pass from input to output for each token it emits. TRM instead operates like a recursive machine: a single small MLP module is applied repeatedly to improve its own output. This allows it to outperform many far larger mainstream reasoning models on benchmarks such as ARC-AGI while having fewer than 7 million parameters. To understand how this network solves problems so efficiently, let’s explore its architecture from input to solution.

In standard LLMs, the only “state” is the KV cache of the conversation history. In contrast, TRM maintains three distinct information vectors that feed into each other: the Immutable Question (x), which is the original problem and is never updated; the Current Hypothesis (y_t), which is the model’s current “best guess” at the answer; and the Latent Reasoning (z_n), which holds the abstract “thoughts” or intermediate logic used to derive the answer. At the heart of TRM is a single, tiny neural network, often just two layers deep. The reasoning process is a nested loop with two distinct stages: Latent Reasoning and Answer Refinement.

In the first stage, the model only thinks. It takes the current state and runs a recursive inner loop to update its internal understanding of the problem. Once the latent reasoning loop is complete, the second stage projects these insights into the answer state, revising the current hypothesis. Repeating both stages creates a powerful feedback loop that lets the model refine its output over multiple iterations. TRM thus demonstrates that smaller models can be not only more efficient but also more intelligent when given the opportunity to think and to correct their answers.
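The nested loop described above can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the published implementation. The weights are random and untrained. The names `make_tiny_net`, `tiny_net`, `trm_forward`, `n_latent` and `n_refine` are hypothetical. Concatenating the three vectors, and blanking out the question during answer refinement, are choices made here only so that one shared network can serve both stages.

```python
import math
import random


def make_tiny_net(in_dim, hidden_dim, out_dim, seed=0):
    """Build a hypothetical two-layer MLP as two plain weight matrices."""
    rng = random.Random(seed)
    w1 = [[rng.gauss(0, 1 / math.sqrt(in_dim)) for _ in range(in_dim)]
          for _ in range(hidden_dim)]
    w2 = [[rng.gauss(0, 1 / math.sqrt(hidden_dim)) for _ in range(hidden_dim)]
          for _ in range(out_dim)]
    return w1, w2


def matvec(weights, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def tiny_net(params, vec):
    """Apply the two-layer MLP with tanh activations."""
    w1, w2 = params
    hidden = [math.tanh(h) for h in matvec(w1, vec)]
    return [math.tanh(o) for o in matvec(w2, hidden)]


def trm_forward(params, x, y, z, n_latent=6, n_refine=3):
    """Run the two-stage nested loop; x is read but never modified."""
    blank = [0.0] * len(x)  # assumption: the question slot is zeroed in stage 2
    for _ in range(n_refine):
        # Stage 1 (latent reasoning): update z from question, guess and thoughts.
        for _ in range(n_latent):
            z = tiny_net(params, x + y + z)
        # Stage 2 (answer refinement): update y from the guess and the thoughts.
        y = tiny_net(params, blank + y + z)
    return y, z


# Usage: 4-dimensional state vectors, so the network input has 3 * 4 = 12 entries.
params = make_tiny_net(in_dim=12, hidden_dim=8, out_dim=4)
question = [0.5, -0.2, 0.1, 0.9]
answer, thoughts = trm_forward(params, question, [0.0] * 4, [0.0] * 4)
print(answer)
```

Note that raising `n_latent` or `n_refine` gives the model more steps of computation without adding a single parameter, because the same `params` are reused on every pass.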
