Meta Unveils Muse Spark: A Multimodal Reasoning Model

Meta Superintelligence Labs has recently unveiled Muse Spark, the first model in the Muse family. Muse Spark is a natively multimodal reasoning model that supports tool use, visual chain of thought, and multi-agent orchestration.

When Meta describes Muse Spark as 'natively multimodal,' it means that the model was trained from the ground up to process and reason across both text and visual inputs simultaneously, rather than simply adding a vision module to a language model afterwards. Muse Spark is built to integrate visual information across domains and tools, achieving strong performance on visual STEM questions, entity recognition, and localization.

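On the ScreenSpot Pro benchmark, which tests screenshot localization, Muse Spark scores 72.2 without tools, well ahead of Claude Opus 4.6 Max at 57.7 and GPT-5.4 Xhigh at 39.0. With Python tools the three models are much closer: Muse Spark reaches 84.1, Claude Opus 4.6 Max 83.1, and GPT-5.4 Xhigh 85.4, which puts GPT-5.4 Xhigh slightly ahead in that setting.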
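To make the comparison concrete, the short Python sketch below works out the margins from the ScreenSpot Pro scores quoted above. The helper names `tool_gain` and `lead_over` are illustrative only and are not part of any benchmark tooling.

```python
# ScreenSpot Pro scores as quoted in the article: (without tools, with Python tools).
scores = {
    "Muse Spark": (72.2, 84.1),
    "Claude Opus 4.6 Max": (57.7, 83.1),
    "GPT-5.4 Xhigh": (39.0, 85.4),
}


def tool_gain(model):
    """Points a model gains when it is allowed to use Python tools."""
    base, with_tools = scores[model]
    return round(with_tools - base, 1)


def lead_over(model, other, with_tools=False):
    """Score difference between two models (positive means `model` is ahead)."""
    idx = 1 if with_tools else 0
    return round(scores[model][idx] - scores[other][idx], 1)


for name in scores:
    print(f"{name}: +{tool_gain(name)} points from Python tools")
```

On these numbers, Muse Spark's lead without tools is large, while the with-tools results sit within a few points of each other.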

Meta frames Muse Spark's progress along three scaling axes: pretraining, reinforcement learning, and test-time reasoning. According to Meta, capabilities improve predictably and measurably along each axis. Over the past nine months, the company has rebuilt its pretraining stack, which yielded substantial efficiency gains: the same capabilities can now be reached with much less compute than before.

Reinforcement learning then improves the model's capabilities using outcome-based feedback. Test-time reasoning lets Muse Spark 'think' before responding, and it gives rise to a phenomenon called thought compression: after an initial period of improvement, the model solves problems using significantly fewer tokens.

Another notable feature is Contemplating mode, in which multiple agents work in parallel and their solutions are refined and aggregated into a final output. According to Meta, this delivers superior performance at comparable latency.
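The general pattern of parallel agents followed by refinement and aggregation can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Meta's implementation: the agents are stub functions standing in for model calls, `refine` only normalises text, `aggregate` is a simple majority vote, and the name `contemplate` is hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Hypothetical agent type: takes a question, returns a draft answer.
Agent = Callable[[str], str]


def refine(draft: str) -> str:
    """Placeholder refinement step: normalise whitespace and case."""
    return " ".join(draft.split()).lower()


def aggregate(candidates: List[str]) -> str:
    """Majority vote; ties go to the earliest candidate in the list."""
    counts = Counter(candidates)
    best = max(counts.values())
    return next(c for c in candidates if counts[c] == best)


def contemplate(question: str, agents: List[Agent]) -> str:
    """Run all agents in parallel, then refine and aggregate their drafts."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        # pool.map returns results in the same order as `agents`.
        drafts = list(pool.map(lambda agent: agent(question), agents))
    return aggregate([refine(d) for d in drafts])


# Stub agents (assumptions for illustration only).
agents = [lambda q: "42", lambda q: " 42 ", lambda q: "41"]
print(contemplate("What is 6 * 7?", agents))
```

Because the agents run concurrently, wall-clock time in a setup like this is governed by the slowest agent plus the aggregation step rather than by the sum of all agents, which is one plausible reading of the "comparable latency" claim.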

On health benchmarks, Muse Spark achieves notable results, scoring 42.8 on HealthBench Hard compared to 14.8 for Claude Opus 4.6 Max. To enhance its health reasoning capabilities, Meta collaborated with over 1,000 physicians to curate training data that facilitates more accurate and comprehensive responses.

Optimizing Long Context LLM Inference with NVIDIA KVPress

Google AI Research Introduces PaperOrchestra for Automated Paper Writing

Related articles

Users report performance degradation of Anthropic's Claude models

Users report performance degradation of Claude models by Anthropic, sparking discussions about product quality.

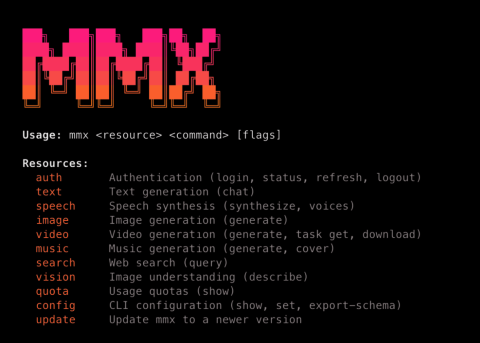

MiniMax Launches MMX-CLI: A Command-Line Interface for AI Agents

MiniMax has launched MMX-CLI, a new command-line interface for AI agents that simplifies access to generative capabilities.

Creating a Workflow for Microsoft VibeVoice with ASR and TTS

Exploring Microsoft VibeVoice: creating a workflow for ASR and TTS.