MiniMax M2.7 Enhances AI Workflows on NVIDIA Platforms

The release of MiniMax M2.7 builds on the popular MiniMax M2.5 model. It is designed for agentic tasks and other complex scenarios in fields such as reasoning, machine learning research workflows, software engineering, and office work.

The open weights of MiniMax M2.7 are now available through NVIDIA and across the open-source ecosystem. The MiniMax M2 series is a family of sparse mixture-of-experts (MoE) models designed for efficiency and capability. The MoE design keeps inference costs low while retaining the full capacity of a 230-billion-parameter model.

The model uses multi-head causal self-attention with Rotary Position Embeddings (RoPE) and Query-Key Root Mean Square Normalization (QK RMSNorm) for stable training at scale. A top-k expert routing mechanism activates only the highest-scoring experts for any given token, so per-token compute stays low despite the model’s large total parameter count.
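To make the routing idea concrete, here is a minimal sketch of top-k expert selection for a single token. It is illustrative only: the article does not describe how M2.7 scores or normalizes its router outputs, so the softmax over the selected logits, the random logits, and the function name `top_k_route` are all assumptions. Only the expert counts (256 experts, 8 active per token) come from the article.

```python
import math
import random

def top_k_route(logits, k):
    """Return (expert_indices, weights) for the k highest-scoring experts.

    Hypothetical helper: the real M2.7 router may score and normalize differently.
    """
    # Indices of the k largest router logits, highest first.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax over only the selected logits so the mixing weights sum to 1.
    peak = max(logits[i] for i in top)
    exps = [math.exp(logits[i] - peak) for i in top]
    total = sum(exps)
    return top, [e / total for e in exps]

NUM_EXPERTS = 256  # expert count quoted in the article
TOP_K = 8          # experts activated per token, per the article

random.seed(0)
# Stand-in for the router's output on one token (assumption: random values).
router_logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

experts, weights = top_k_route(router_logits, TOP_K)
print("selected experts:", experts)
print("mixing weights sum to:", round(sum(weights), 6))
```

Only the 8 selected experts would run their feed-forward computation for this token; the other 248 are skipped, which is where the compute savings come from.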

MiniMax M2.7 has 230 billion total parameters, of which 10 billion are active, for an activation rate of 4.3%. It consists of 62 layers with 256 local experts, 8 of which are activated per token, and supports an input context length of 200,000 tokens.
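The quoted activation rate can be checked with a short calculation. The sketch below uses only the figures stated above; the variable names are illustrative.

```python
# Figures quoted in the article.
total_params = 230e9   # total parameters
active_params = 10e9   # active parameters
num_experts = 256      # local experts
experts_per_token = 8  # experts activated per token

# Share of parameters that are active.
activation_rate = active_params / total_params
# Share of experts selected for each token.
expert_fraction = experts_per_token / num_experts

print(f"parameter activation rate: {activation_rate:.1%}")
print(f"expert fraction: {expert_fraction:.3%}")
```

The parameter activation rate (about 4.3%) comes out higher than the expert fraction (8 of 256), presumably because components such as attention layers and embeddings are used for every token regardless of routing. The article does not give a per-component breakdown.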

NVIDIA also introduced NemoClaw, an open-source reference stack that simplifies running always-on OpenClaw assistants with a single command. It installs a secure environment for running autonomous agents with endpoints or open models like M2.7. Developers can get started today, using this single-command setup to provision an environment with OpenClaw and OpenShell on NVIDIA's AI GPU platform.

To maximize performance for the MiniMax M2 series, NVIDIA collaborated with the open-source community to integrate high-performance kernels into vLLM and SGLang. These optimizations specifically target the architectural demands of large-scale MoE models, significantly improving inference performance.

Researchers from MIT, NVIDIA, and Zhejiang University Introduce TriAttention for AI Optimization

Liquid AI Releases LFM2.5-VL-450M: a Vision-Language Model with Multilingual Support

Related articles

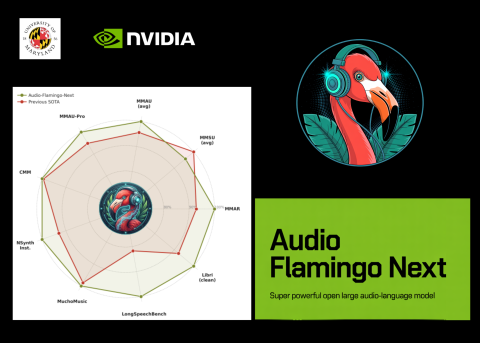

NVIDIA and University of Maryland Unveil Audio Flamingo Next

NVIDIA and University of Maryland unveiled Audio Flamingo Next, a powerful audio-language model for processing speech and sounds.

Users report performance degradation of Anthropic's Claude models

Users report performance degradation of Claude models by Anthropic, sparking discussions about product quality.

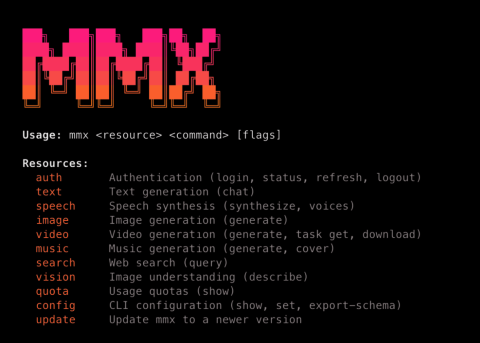

MiniMax Launches MMX-CLI: A Command-Line Interface for AI Agents

MiniMax has launched MMX-CLI, a new command-line interface for AI agents that simplifies access to generative capabilities.