Optimize AI Costs with Amazon Bedrock Projects

As organizations scale their AI workloads on Amazon Bedrock, understanding what drives spending becomes critical. Teams may need to perform chargebacks, investigate cost spikes, and guide optimization decisions, all of which require cost attribution at the workload level. With Amazon Bedrock Projects, you can attribute inference costs to specific workloads and analyze them in AWS Cost Explorer and AWS Data Exports.

A project on Amazon Bedrock is a logical boundary representing a workload, such as an application, environment, or experiment. To attribute the cost of a project, you attach resource tags and pass the project ID in your API calls. You can then activate the cost allocation tags in AWS Billing to filter, group, and analyze spend in AWS Cost Explorer and AWS Data Exports.

The tags you attach to projects become dimensions that you can filter and group by in your cost reports. Plan these dimensions before creating your first project, because they determine how you can slice spend later. A common approach is to tag by application, environment, team, and cost center.
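One way to keep a tagging strategy consistent is to define the required keys once and validate every project's tags against them before the project is created. The sketch below is a minimal illustration of that idea: the key names (`Application`, `Environment`, `Team`, `CostCenter`) and both helper functions are assumptions chosen for this example, not part of any AWS API.

```python
from typing import Dict, List

# Hypothetical tag keys mirroring the "application, environment, team,
# cost center" approach; rename them to match your own conventions.
REQUIRED_TAG_KEYS = ("Application", "Environment", "Team", "CostCenter")


def missing_tag_keys(tags: Dict[str, str]) -> List[str]:
    """Return the required keys that are absent or blank in `tags`."""
    return [key for key in REQUIRED_TAG_KEYS if not tags.get(key, "").strip()]


def build_project_tags(
    application: str, environment: str, team: str, cost_center: str
) -> Dict[str, str]:
    """Build a tag dictionary and reject it if any required value is blank."""
    tags = {
        "Application": application,
        "Environment": environment,
        "Team": team,
        "CostCenter": cost_center,
    }
    missing = missing_tag_keys(tags)
    if missing:
        raise ValueError(f"Missing required tag values: {', '.join(missing)}")
    return tags
```

Running a check like this before creating a project means every project carries the same dimensions, so cost reports can be grouped by any of them without gaps.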

With your tagging strategy defined, you are ready to create projects and start attributing costs. Each project has its own set of cost allocation tags that flow into your billing data. For example, you can create a project with the Projects API: install the required dependencies, then specify the tags when you create the project.

Once projects are created, you associate inference requests with a project by passing its project ID in your API calls, so that each request's cost is attributed to the correct workload. For project tags to appear in cost reports, you must also activate them as cost allocation tags in AWS Billing, which connects the project tags to the billing pipeline.
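The activation and reporting steps can be sketched as two request payloads: one that marks tag keys as active cost allocation tags, and one that asks Cost Explorer for spend grouped by a tag. The helper functions below are hypothetical and only build dictionaries; the parameter shapes follow the boto3 Cost Explorer client's `update_cost_allocation_tags_status` and `get_cost_and_usage` operations. The service name `"Amazon Bedrock"` used in the filter is an assumption, so check the value shown in your own billing data.

```python
from datetime import date
from typing import Any, Dict, List


def build_tag_activation_entries(tag_keys: List[str]) -> List[Dict[str, str]]:
    """Build entries that mark each tag key as an active cost allocation tag."""
    return [{"TagKey": key, "Status": "Active"} for key in tag_keys]


def build_cost_query(
    tag_key: str,
    start: date,
    end: date,
    service_name: str = "Amazon Bedrock",  # assumed service name; verify it
) -> Dict[str, Any]:
    """Build Cost Explorer parameters that group daily cost by one tag key."""
    if end <= start:
        raise ValueError("end must be later than start")
    return {
        # Start is inclusive and End is exclusive in Cost Explorer.
        "TimePeriod": {"Start": start.isoformat(), "End": end.isoformat()},
        "Granularity": "DAILY",
        "Metrics": ["UnblendedCost"],
        "GroupBy": [{"Type": "TAG", "Key": tag_key}],
        "Filter": {"Dimensions": {"Key": "SERVICE", "Values": [service_name]}},
    }
```

With boto3 installed and credentials configured, these payloads could be passed as `boto3.client("ce").update_cost_allocation_tags_status(CostAllocationTagsStatus=entries)` and `boto3.client("ce").get_cost_and_usage(**query)`. Keeping payload construction separate from the network call makes the query logic easy to review and test without an AWS account.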

Startup Arcee launches new language model Trinity Large Thinking

Google launched an offline AI dictation app called Google AI Edge Eloquent

Related articles

43% of AI-generated code changes need debugging in production

43% of AI-generated code changes require debugging in production, raising concerns about the efficacy of AI in development.

Spring AI SDK for Amazon Bedrock AgentCore is now available

Amazon Bedrock AgentCore introduces a new SDK for building AI agents.

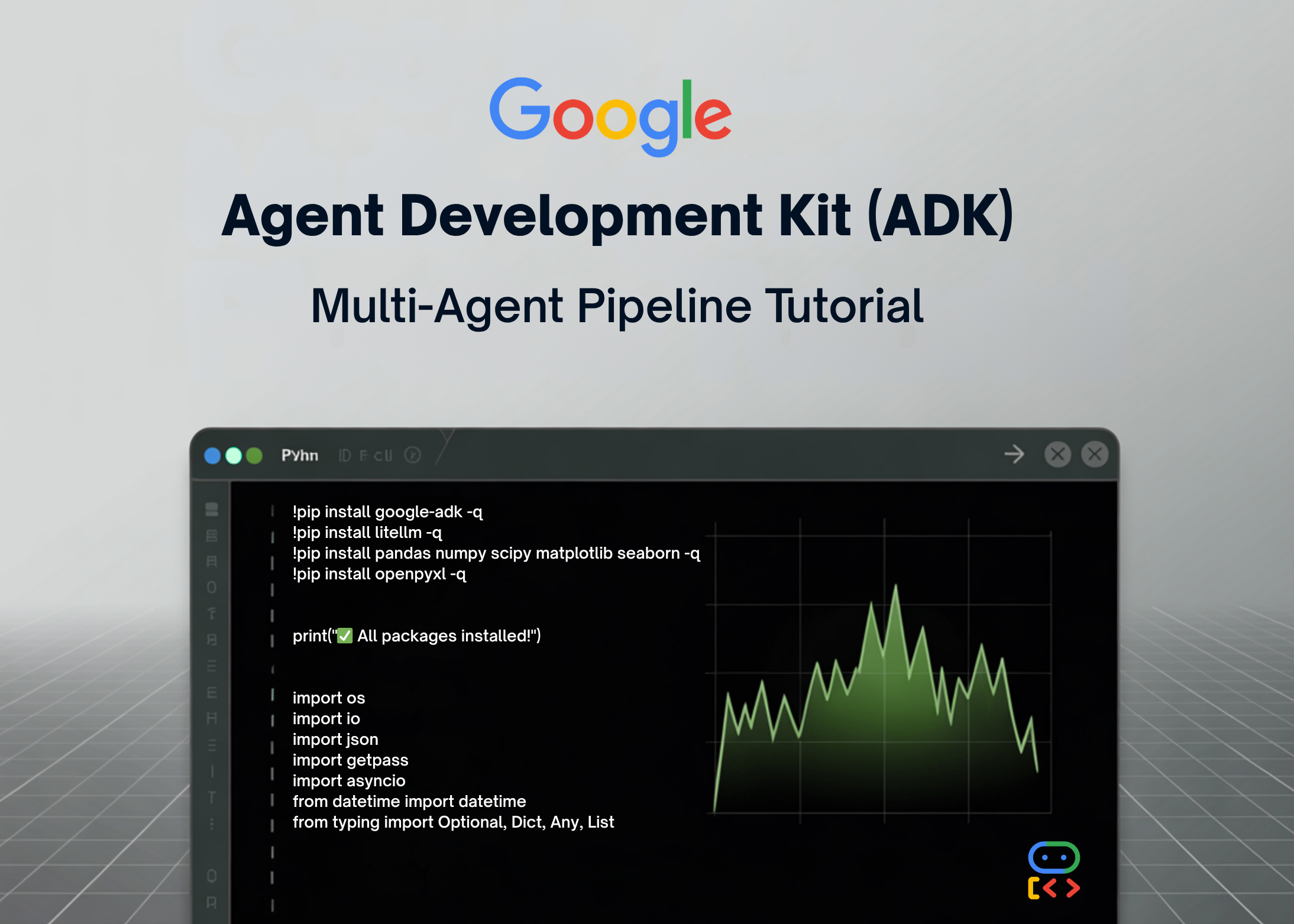

Building a Multi-Agent Data Analysis System with Google ADK

Creating a multi-agent data analysis system using Google ADK.