Qwen Team Introduces Qwen3.6-35B-A3B: A New Open AI Model

The open-source AI landscape has a new entry worth noting. The Qwen team at Alibaba has released Qwen3.6-35B-A3B, the first open-weight model from the Qwen3.6 generation, demonstrating that parameter efficiency is more critical than mere model size. With a total of 35 billion parameters but only 3 billion activated during inference, this model achieves performance comparable to dense models that are ten times its active size.

What is a Sparse MoE model and why does it matter? A Mixture of Experts (MoE) model does not utilize all its parameters on every forward pass. Instead, it routes each input token through a small subset of specialized sub-networks called 'experts.' The remaining parameters remain idle, allowing for a large total parameter count while keeping inference costs proportional only to the active parameter count.

Qwen3.6-35B-A3B is a causal language model with a vision encoder, trained through both pre-training and post-training stages. Its MoE layer contains 256 experts, with 8 routed experts and 1 shared expert activated per token. The architecture features an unusual hidden layout, comprising 10 blocks, each consisting of 3 instances of (Gated DeltaNet → MoE) followed by 1 instance of (Gated Attention → MoE). Across 40 layers, the Gated DeltaNet sublayers manage linear attention, a computationally cheaper alternative to standard self-attention.

The model supports a native context length of 262,144 tokens, extendable up to 1,010,000 tokens using YaRN (Yet another RoPE extensioN). A significant aspect is Agentic Coding, which shows serious results. On SWE-bench Verified, the canonical benchmark for real-world problem-solving, Qwen3.6-35B-A3B scores 73.4, surpassing Qwen3.5-35B-A3B (70.0) and Gemma4-31B (52.0).

The model also excels in multimodal understanding. It can handle images, documents, videos, and spatial reasoning tasks. On MMMU (Massive Multi-discipline Multimodal Understanding), Qwen3.6-35B-A3B scores 81.7, outperforming Claude-Sonnet-4.5 (79.6) and Gemma4-31B (80.4). On RealWorldQA, testing visual understanding in real-world photographic contexts, it achieves 85.3, significantly ahead of Qwen3.5-27B (83.7) and Claude-Sonnet-4.5 (70.3).

A key feature is the model's control over reasoning behavior. Qwen3.6 operates in thinking mode by default, generating reasoning content enclosed within

Related articles

OpenAI unveils GPT-Rosalind to accelerate life sciences research

OpenAI has introduced GPT-Rosalind, a model to accelerate life sciences research.

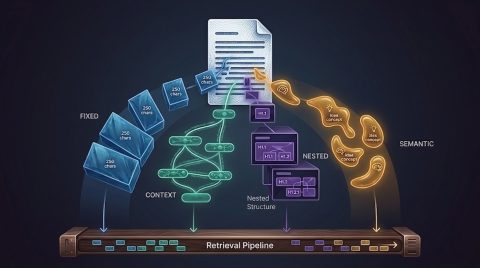

Error in RAG: How Incorrect Data Chunking Affects Outcomes

Incorrect data chunking can lead to system errors, reducing user trust.

Google launches new AI Mode for side-by-side web searching

Google has introduced a new AI Mode for side-by-side web searching.