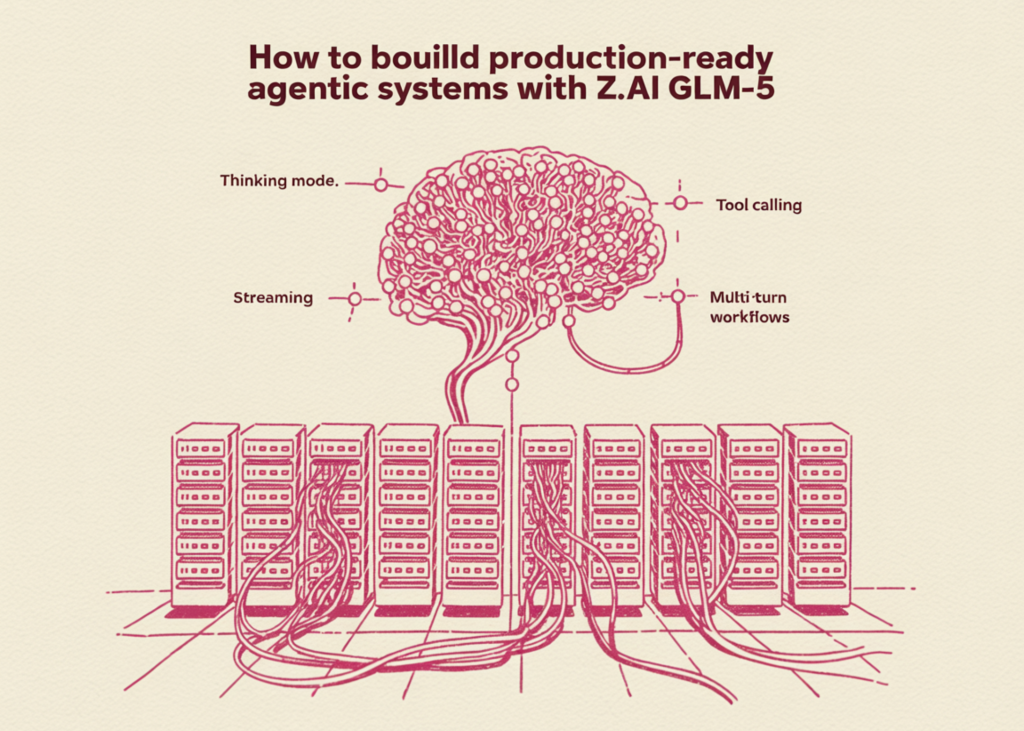

Build Production-Ready Agentic Systems with Z.AI GLM-5

In this tutorial, we explore the capabilities of Z.AI’s GLM-5 model and learn how to use it for real-world agentic applications. We start from the fundamentals by setting up the environment using the Z.AI SDK and its OpenAI-compatible interface, and then progressively move on to advanced features such as streaming responses, thinking mode for deeper reasoning, and multi-turn conversations.

As we continue, we integrate function calling and structured outputs, and eventually construct a fully functional multi-tool agent powered by GLM-5. Along the way, we examine each capability in isolation and see how Z.AI’s ecosystem lets us combine them into scalable, production-ready AI systems.

We begin by installing the Z.AI and OpenAI SDKs, then securely capture our API key through hidden terminal input using getpass. We initialize the ZaiClient and fire off our first basic chat completion to GLM-5, asking it to explain the Mixture-of-Experts architecture. We then explore streaming responses, watching tokens arrive in real time as GLM-5 generates a Python one-liner for prime checking.
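The setup and streaming steps above can be sketched roughly as follows. This is a minimal sketch, not the tutorial's own code: the package name `zai-sdk`, the import path `zai`, the model id `glm-5`, and the OpenAI-style chunk shape (`chunk.choices[0].delta.content`) are assumptions, and `collect_stream` and `run_live_demo` are hypothetical helper names.

```python
import getpass


def collect_stream(chunks, on_token=None):
    """Join the text deltas of an OpenAI-style streaming response.

    Assumes each chunk exposes chunk.choices[0].delta.content, which may be
    None or missing on some chunks.
    """
    parts = []
    for chunk in chunks:
        if not chunk.choices:
            continue  # some chunks may carry no choices (e.g. usage only)
        text = getattr(chunk.choices[0].delta, "content", None)
        if text:
            parts.append(text)
            if on_token is not None:
                on_token(text)  # e.g. print each token as it arrives
    return "".join(parts)


def run_live_demo():
    """Needs network access and a real key; not called automatically."""
    # Assumption: the SDK is installed with `pip install zai-sdk`.
    from zai import ZaiClient

    api_key = getpass.getpass("Z.AI API key: ")  # hidden terminal input
    client = ZaiClient(api_key=api_key)
    stream = client.chat.completions.create(
        model="glm-5",  # assumed model id
        messages=[{
            "role": "user",
            "content": "Write a Python one-liner that checks if n is prime.",
        }],
        stream=True,
    )
    return collect_stream(stream, on_token=lambda t: print(t, end="", flush=True))
```

Calling `run_live_demo()` prompts for the key and prints tokens as they arrive. Keeping the chunk handling in its own function means it can be exercised with fake chunks, without touching the network.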

Next, we enable GLM-5’s thinking mode, in which the model works through its internal reasoning before giving a final answer. The reasoning streams live through the reasoning_content field, and the final answer follows it. This is especially useful for math, logic, and complex coding tasks.
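One way to separate the streamed reasoning from the final answer is sketched below. The `reasoning_content` field comes from the text above; the `thinking={"type": "enabled"}` request parameter, the model id `glm-5`, and the helper names `split_reasoning` and `ask_with_thinking` are assumptions for illustration.

```python
def split_reasoning(chunks):
    """Split a streamed response into (reasoning, answer) strings.

    Assumes OpenAI-style chunks whose delta may carry `reasoning_content`
    (the model's thinking) and/or `content` (the final answer).
    """
    reasoning, answer = [], []
    for chunk in chunks:
        if not chunk.choices:
            continue
        delta = chunk.choices[0].delta
        thought = getattr(delta, "reasoning_content", None)
        text = getattr(delta, "content", None)
        if thought:
            reasoning.append(thought)
        if text:
            answer.append(text)
    return "".join(reasoning), "".join(answer)


def ask_with_thinking(client, question):
    """Needs a live client; the `thinking` parameter shape is an assumption."""
    stream = client.chat.completions.create(
        model="glm-5",                 # assumed model id
        messages=[{"role": "user", "content": question}],
        thinking={"type": "enabled"},  # assumed way to switch thinking mode on
        stream=True,
    )
    return split_reasoning(stream)
```

Returning the two strings separately makes it straightforward to log or hide the reasoning while showing only the answer to end users.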

Next, we build a multi-turn conversation where we ask about Python lists vs tuples, follow up on NamedTuples, and request a practical example with type hints, all while GLM-5 maintains full context across turns. We track how the conversation grows in message count and token usage with each successive exchange.
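The multi-turn pattern described above amounts to resending the whole message history on every call. The sketch below shows that bookkeeping under stated assumptions: `Conversation` and `zai_send` are hypothetical names, and the response shape (`choices[0].message.content`, `usage.total_tokens`) is assumed from the OpenAI-compatible interface.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Message = Dict[str, str]


@dataclass
class Conversation:
    """Keeps the full message history so every turn carries earlier context."""
    send: Callable[[List[Message]], Tuple[str, int]]  # returns (reply, tokens)
    messages: List[Message] = field(default_factory=list)
    total_tokens: int = 0  # sum of the per-call token counts

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        reply, tokens = self.send(list(self.messages))  # pass a copy
        self.messages.append({"role": "assistant", "content": reply})
        self.total_tokens += tokens
        return reply


def zai_send(client, model="glm-5"):
    """Build a `send` callable around a live client (assumed response shape)."""
    def send(messages):
        response = client.chat.completions.create(model=model, messages=messages)
        return response.choices[0].message.content, response.usage.total_tokens
    return send
```

With a live client, `conv = Conversation(send=zai_send(client))` followed by repeated `conv.ask(...)` calls reproduces the lists, tuples, and NamedTuples exchange, and `len(conv.messages)` and `conv.total_tokens` show how the conversation grows with each turn.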

Google DeepMind Develops LLM to Automate Game Theory Algorithms

Netflix Launches VOID: AI Model for Object Removal in Videos

Related articles

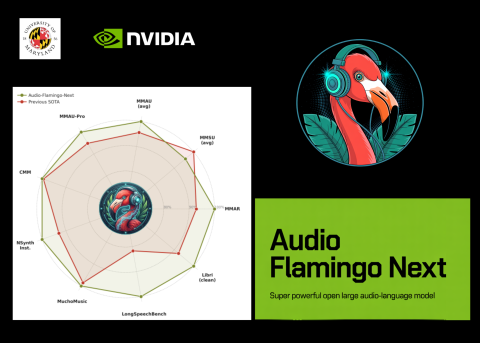

NVIDIA and University of Maryland Unveil Audio Flamingo Next

NVIDIA and University of Maryland unveiled Audio Flamingo Next, a powerful audio-language model for processing speech and sounds.

Users report performance degradation of Anthropic's Claude models

Users report performance degradation of Claude models by Anthropic, sparking discussions about product quality.

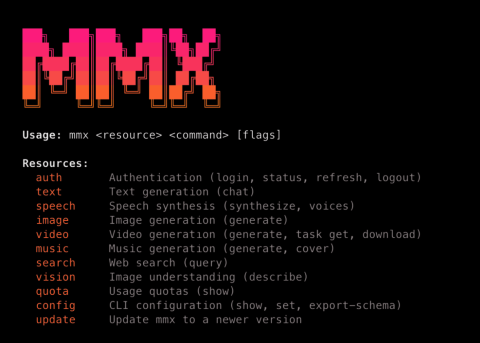

MiniMax Launches MMX-CLI: A Command-Line Interface for AI Agents

MiniMax has launched MMX-CLI, a new command-line interface for AI agents that simplifies access to generative capabilities.