Netflix Launches VOID: AI Model for Object Removal in Videos

Video editing has always had a dirty secret: removing an object from footage is easy; making the scene look like it was never there is brutally hard. Take out a person holding a guitar, and you’re left with a floating instrument that defies gravity. Hollywood VFX teams spend weeks fixing exactly this kind of problem. A team of researchers from Netflix and INSAIT, Sofia University ‘St. Kliment Ohridski,’ has released the VOID (Video Object and Interaction Deletion) model that can do it automatically.

VOID removes objects from videos along with all interactions they induce on the scene — not just secondary effects like shadows and reflections, but physical interactions like objects falling when a person is removed. Standard video inpainting models — the kind used in most editing workflows today — are trained to fill in the pixel region where an object was. They’re essentially very sophisticated background painters. What they don’t do is reason about causality: if I remove an actor who is holding a prop, what should happen to that prop?

Existing video object removal methods excel at inpainting content ‘behind’ the object and correcting appearance-level artifacts such as shadows and reflections. However, when the removed object has more significant interactions, such as collisions with other objects, current models fail to correct them and produce implausible results. VOID is built on top of CogVideoX and fine-tuned for video inpainting with interaction-aware mask conditioning. The key innovation is in how the model understands the scene — not just ‘what pixels should I fill?’ but ‘what is physically plausible after this object disappears?’

The canonical example from the research paper: if a person holding a guitar is removed, VOID also removes the person’s effect on the guitar — causing it to fall naturally. That’s not trivial. The model has to understand that the guitar was being supported by the person, and that removing the person means gravity takes over. And unlike prior work, VOID was evaluated head-to-head against real competitors. Experiments on both synthetic and real data show that the approach better preserves consistent scene dynamics after object removal compared to prior video object removal methods including ProPainter, DiffuEraser, Runway, MiniMax-Remover, ROSE, and Gen-Omnimatte.

VOID is built on CogVideoX-Fun-V1.5-5b-InP — a model from Alibaba PAI — and fine-tuned for video inpainting with interaction-aware quadmask conditioning. CogVideoX is a 3D Transformer-based video generation model. Think of it like a video version of Stable Diffusion — a diffusion model that operates over temporal sequences of frames rather than single images. The specific base model (CogVideoX-Fun-V1.5-5b-InP) is released by Alibaba PAI on Hugging Face, which is the checkpoint engineers will need to download separately before running VOID.

VOID uses two transformer checkpoints, trained sequentially. You can run inference with Pass 1 alone or chain both passes for higher temporal consistency. Pass 1 is the base inpainting model and is sufficient for most videos. Pass 2 serves a specific purpose: correcting a known failure mode. If the model detects object morphing — a known failure mode of smaller video diffusion models — an optional second pass re-runs inference using flow-warped noise derived from the first pass, stabilizing object shape along the newly synthesized trajectories.

Build Production-Ready Agentic Systems with Z.AI GLM-5

NVIDIA Highlights Robotics Breakthroughs During National Robotics Week

Related articles

Adobe launches Firefly AI Assistant to streamline Creative Cloud workflows

Adobe launches Firefly AI Assistant to streamline workflows in Creative Cloud.

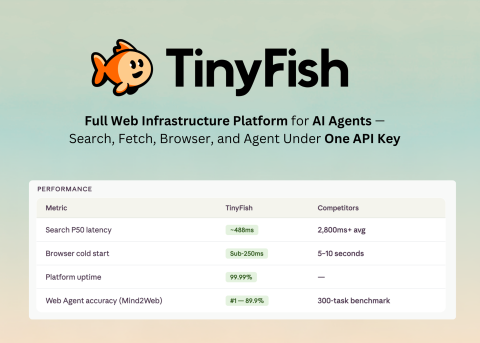

TinyFish AI Launches Unified Platform for AI Agents with One API Key

TinyFish AI has launched a platform for AI agents with four tools and a unified API.

Building Data Centers in Space for AI

Companies are building data centers in space, but the reality remains unclear.