Boost Data Center Performance with New Software Solution

To enhance data center efficiency, multiple storage devices are often pooled together over a network to allow many applications to share them. However, even with pooling, significant device capacity remains underutilized due to performance variability across devices. MIT researchers have developed a system that boosts the performance of storage devices by simultaneously addressing three major sources of variability. Their approach delivers significant speed improvements over traditional methods that tackle only one source of variability at a time.

The system employs a two-tier architecture: a central controller makes overarching decisions about which tasks each storage device performs, while a local controller on each machine quickly reroutes data if its device is struggling. This method, which adapts in real time to shifting workloads, does not require specialized hardware. When tested on realistic tasks such as AI model training and image compression, the system nearly doubled the performance delivered by traditional approaches. By intelligently balancing the workloads of multiple storage devices, the system can enhance overall data center efficiency.
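The split between a slow, pool-wide planner and a fast, per-device reaction can be illustrated with a minimal Python sketch. This is not the researchers' implementation: the `Device` fields, the proportional-share rule in `global_plan`, and the `max_queue` threshold in `local_route` are all assumptions chosen only to make the two tiers concrete.

```python
from dataclasses import dataclass

@dataclass
class Device:
    name: str
    capacity: float       # profiled throughput in arbitrary units (hypothetical)
    queue_depth: int = 0  # outstanding requests right now (hypothetical signal)

def global_plan(devices, total_load):
    """Central tier: split load in proportion to each device's profiled capacity."""
    total_capacity = sum(d.capacity for d in devices)
    return {d.name: total_load * d.capacity / total_capacity for d in devices}

def local_route(preferred, devices, max_queue=8):
    """Local tier: keep the planned device unless its queue is too deep,
    in which case send the request to the least-busy device instead."""
    if preferred.queue_depth <= max_queue:
        return preferred
    return min(devices, key=lambda d: d.queue_depth)

pool = [Device("ssd0", 100.0), Device("ssd1", 50.0), Device("ssd2", 50.0)]
plan = global_plan(pool, total_load=1000.0)  # slow path: runs occasionally

pool[0].queue_depth = 20                     # ssd0 suddenly becomes congested
target = local_route(pool[0], pool)          # fast path: runs per request
print(plan, target.name)
```

In this toy setup the planner gives the larger device half of the load, and the local tier steers a request away from it once its queue grows past the threshold, without waiting for the planner to run again.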

“There is a tendency to want to throw more resources at a problem to solve it, but that is not sustainable in many ways. We want to maximize the longevity of these very expensive and carbon-intensive resources,” said Gohar Chaudhry, an electrical engineering and computer science graduate student at MIT and lead author of a paper on this technique. “With our adaptive software solution, you can still extract a lot of performance from your existing devices before needing to discard them and buy new ones.”

Chaudhry is joined on the paper by Ankit Bhardwaj, an assistant professor at Tufts University; Zhenyuan Ruan, a PhD candidate; and senior author Adam Belay, an associate professor of EECS and a member of the MIT Computer Science and Artificial Intelligence Laboratory. The research will be presented at the USENIX Symposium on Networked Systems Design and Implementation.

To unlock this unused performance in solid-state drives (SSDs), the researchers developed Sandook, a software-based system that addresses three major forms of performance-hampering variability simultaneously. The name “Sandook” means “box” in Urdu, signifying “storage.” The first type of variability arises from differences in the age, wear, and capacity of SSDs purchased at different times from multiple vendors. The second comes from interference between read and write operations occurring on the same SSD. The third is garbage collection, a process that slows SSD operations and is triggered at random intervals that a data center operator cannot control.

To manage all three sources of variability, Sandook uses a two-tier structure. A global scheduler optimizes task distribution for the overall pool, while faster schedulers on each SSD react to urgent events and shift operations away from congested devices. The system reduces delays from read-write interference by rotating which SSDs an application can use for reads and writes, lowering the chance that both kinds of operation hit the same device at the same time. Sandook also profiles the typical performance of each SSD and uses this information to detect when garbage collection is likely slowing operations.
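Two of these mechanisms, rotating read and write roles and spotting likely garbage collection from a performance profile, can be sketched in a few lines of Python. The article does not describe Sandook's actual policies at this level of detail, so the round-robin rotation, the `num_writers` parameter, and the `slowdown` factor below are illustrative assumptions rather than the system's real logic.

```python
from statistics import median

def rotate_roles(ssds, step, num_writers=1):
    """Pick which SSDs take writes at this step; the rest serve reads.
    A simple round-robin rotation (an assumption, not Sandook's policy)."""
    n = len(ssds)
    start = (step * num_writers) % n
    writers = [ssds[(start + i) % n] for i in range(num_writers)]
    readers = [s for s in ssds if s not in writers]
    return readers, writers

def looks_like_gc(baseline_latencies_us, recent_latencies_us, slowdown=3.0):
    """Flag a device when its recent median latency is far above the
    median recorded in its profile (the 3x factor is hypothetical)."""
    return median(recent_latencies_us) > slowdown * median(baseline_latencies_us)

ssds = ["ssd0", "ssd1", "ssd2"]
for step in range(3):
    readers, writers = rotate_roles(ssds, step)
    print(step, "reads:", readers, "writes:", writers)

profile = [100, 110, 90]  # latencies in microseconds from profiling
print(looks_like_gc(profile, [400, 380, 420]))  # recent latencies well above profile
print(looks_like_gc(profile, [120, 130, 110]))  # recent latencies near profile
```

Keeping readers and writers on disjoint devices at each step is what avoids the interference, and comparing against a per-device baseline rather than a global one accounts for the fact that drives of different age and vendor have different normal latencies.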

During testing, Sandook was evaluated on a pool of 10 SSDs across four tasks: running a database, training a machine-learning model, compressing images, and storing user data. Compared with static methods, Sandook boosted the throughput of each application by 12 to 94 percent and improved overall SSD capacity utilization by 23 percent. The system enabled SSDs to achieve 95 percent of their theoretical maximum performance without the need for specialized hardware or application-specific updates.

Meta AI Introduces EUPE: A Compact Encoder for Mobile Vision Tasks

Rocket startup offers consulting-style product strategies at low cost

Related articles

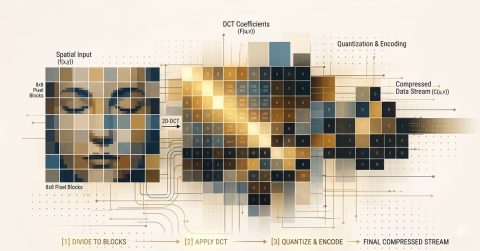

The Future of Data Compression: From Pixels to DNA

The future of data compression encompasses all types of information, from genomes to video, expanding the capabilities of digital technologies.

US-China AI performance gap closes, but responsibility gap widens

The Stanford University report indicates that while the US-China AI performance gap has closed, issues with responsibility and safety remain.

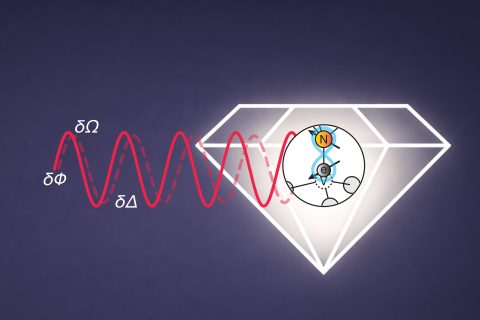

MIT develops multitasking quantum sensors for simultaneous measurements

MIT researchers have developed quantum sensors capable of measuring multiple physical quantities simultaneously.