Google DeepMind Introduces Gemini Robotics-ER 1.6 with Enhanced Reasoning

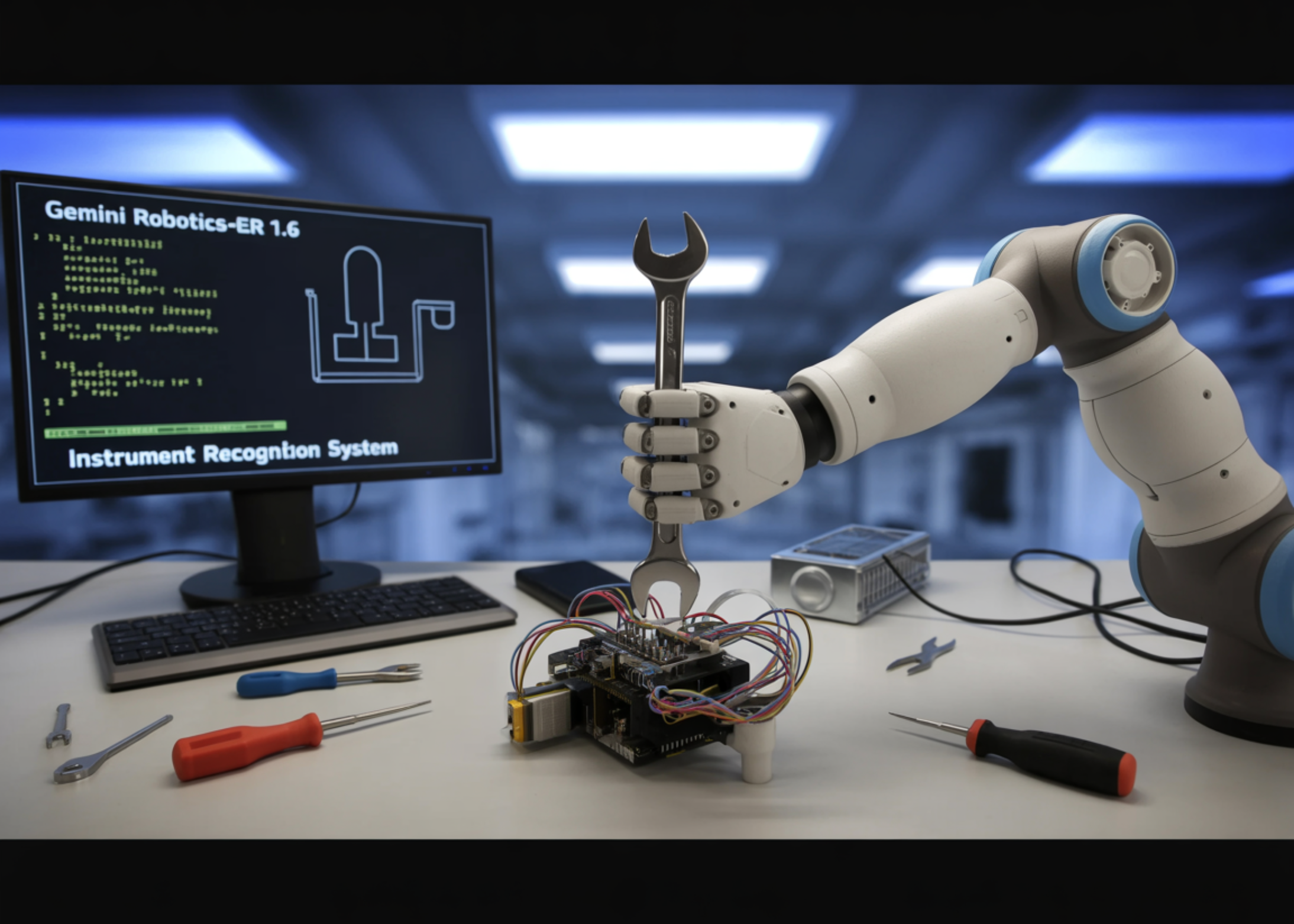

Google DeepMind has unveiled Gemini Robotics-ER 1.6, a significant upgrade to its embodied reasoning model, designed to serve as the 'cognitive brain' of robots operating in real-world environments. The model specializes in reasoning capabilities critical for robotics, including visual and spatial understanding, task planning, and success detection. It acts as a robot's high-level reasoning model and can carry out tasks by natively calling tools such as Google Search, vision-language-action models (VLAs), or any other user-defined third-party functions.

Architecturally, Google DeepMind takes a dual-model approach to robotics AI. Gemini Robotics 1.5 is the vision-language-action (VLA) model: it processes visual inputs and user prompts and translates them directly into physical motor commands. Gemini Robotics-ER, by contrast, is the embodied reasoning model: it specializes in understanding physical spaces, planning, and making logical decisions, but it does not directly control robotic limbs. Instead, it provides high-level guidance that helps the VLA model determine what to do next.
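
To make this division of labor concrete, here is a minimal Python sketch of the orchestration pattern: a reasoning layer emits tool calls, and a dispatcher routes them to a search tool or a VLA. This is not DeepMind's API. `ToolCall`, `ToolRegistry`, `plan_with_er`, `fake_vla`, and `fake_search` are hypothetical names, and the "plan" is hard-coded where a real system would parse function calls emitted by the reasoning model.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class ToolCall:
    """A function call as a reasoning model might emit it (hypothetical shape)."""
    name: str
    args: Dict[str, Any]


@dataclass
class ToolRegistry:
    """Maps tool names to Python callables: a VLA, a search client, or user code."""
    tools: Dict[str, Callable[..., Any]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def dispatch(self, call: ToolCall) -> Any:
        if call.name not in self.tools:
            raise KeyError(f"unknown tool: {call.name}")
        return self.tools[call.name](**call.args)


def fake_vla(instruction: str) -> List[str]:
    # Stand-in for a VLA such as Gemini Robotics 1.5: the only layer that
    # would turn an instruction into motor commands.
    return [f"motor_cmd({instruction!r})"]


def fake_search(query: str) -> str:
    # Stand-in for a web-search tool.
    return f"search results for {query!r}"


def plan_with_er(task: str) -> List[ToolCall]:
    # Stand-in for the embodied reasoning model: it plans and calls tools,
    # but never issues motor commands itself. Hard-coded for illustration.
    return [
        ToolCall("search", {"query": f"how to {task}"}),
        ToolCall("vla", {"instruction": f"pick up object for: {task}"}),
    ]


def run(task: str, registry: ToolRegistry) -> List[Any]:
    # Execute every planned call in order and collect the results.
    return [registry.dispatch(call) for call in plan_with_er(task)]


registry = ToolRegistry()
registry.register("search", fake_search)
registry.register("vla", fake_vla)
results = run("sort tools", registry)
```

Keeping the VLA behind the same dispatch interface as any other tool mirrors the article's point: the reasoning model decides *what* to do, and only the VLA decides *how* to move.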

Gemini Robotics-ER 1.6 shows significant improvement over both Gemini Robotics-ER 1.5 and Gemini 3.0 Flash, particularly in spatial and physical reasoning capabilities such as pointing, counting, and success detection. Beyond these gains, it adds a capability that did not exist in prior versions at all: instrument reading. Pointing, the model's ability to identify precise pixel-level locations in an image, is far more powerful than it sounds, because points can be used to express spatial reasoning, relational logic, motion reasoning, and constraint compliance.
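
A point is just a labeled coordinate, yet simple geometry over points already expresses relations between objects. The sketch below assumes a response that is a JSON list of `{"point": [y, x], "label": ...}` objects with coordinates normalized to the range 0 to 1000, similar to the format in Google's published Gemini examples; treat that format as an assumption and verify it against current documentation. The `RESPONSE` string is made up, and `to_pixels`, `parse_points`, and `is_left_of` are illustrative helpers.

```python
import json
from typing import Dict, List, Tuple

# Hypothetical model output: labeled points, [y, x], normalized to 0-1000.
RESPONSE = (
    '[{"point": [500, 250], "label": "hammer"},'
    ' {"point": [480, 750], "label": "pliers"}]'
)


def to_pixels(point: List[int], width: int, height: int) -> Tuple[int, int]:
    """Convert a normalized [y, x] point into integer (x, y) pixel coordinates."""
    y_norm, x_norm = point
    return round(x_norm / 1000 * width), round(y_norm / 1000 * height)


def parse_points(text: str, width: int, height: int) -> Dict[str, Tuple[int, int]]:
    # Assumes one point per label; counting would keep a list instead.
    return {
        item["label"]: to_pixels(item["point"], width, height)
        for item in json.loads(text)
    }


def is_left_of(a: Tuple[int, int], b: Tuple[int, int]) -> bool:
    # A relational check expressed purely with points (image x grows rightward).
    return a[0] < b[0]


points = parse_points(RESPONSE, width=640, height=480)
```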

In internal benchmarks, Gemini Robotics-ER 1.6 demonstrates a clear advantage over its predecessor. The model correctly identifies the number of hammers, scissors, paintbrushes, pliers, and garden tools in a scene and does not point to requested items that are not present in the image. In comparison, Gemini Robotics-ER 1.5 fails to identify the correct number of hammers or paintbrushes, misses scissors altogether, and hallucinates a wheelbarrow.
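
The comparison above boils down to four outcomes per object category: correct count, wrong count, missed entirely, or hallucinated (pointing at a requested item that is not in the scene). Here is a minimal scoring sketch, assuming the model's answer has already been reduced to one label per point. `score_counts` is a hypothetical helper, and the counts in the example are illustrative, not DeepMind's benchmark data.

```python
from collections import Counter
from typing import Dict, List


def score_counts(predicted: List[str], truth: Dict[str, int]) -> Dict[str, List[str]]:
    """Compare per-label point counts with ground truth.

    `truth` may include requested items that are absent from the scene
    (count 0); pointing at any of them counts as a hallucination.
    """
    pred = Counter(predicted)  # labels never pointed at read as 0
    present = {k: n for k, n in truth.items() if n > 0}
    return {
        "correct": sorted(k for k, n in present.items() if pred[k] == n),
        "wrong_count": sorted(
            k for k, n in present.items() if pred[k] > 0 and pred[k] != n
        ),
        "missed": sorted(k for k in present if pred[k] == 0),
        "hallucinated": sorted(k for k in pred if truth.get(k, 0) == 0),
    }


# Illustrative scene: the wheelbarrow is requested but not present.
truth = {"hammer": 2, "scissors": 1, "paintbrush": 3, "pliers": 1, "wheelbarrow": 0}
report = score_counts(
    ["hammer", "paintbrush", "paintbrush", "pliers", "wheelbarrow"], truth
)
```

In this made-up example the report mirrors the failure pattern described for the older model: wrong counts for hammers and paintbrushes, missed scissors, and a hallucinated wheelbarrow.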

Success detection and multi-view reasoning are also crucial in robotics. Knowing when a task is finished is just as important as knowing how to start it: success detection serves as a critical decision-making engine that lets an agent intelligently choose between retrying a failed attempt and progressing to the next stage of a plan. Gemini Robotics-ER 1.6 also advances multi-view reasoning, enabling it to better fuse information from multiple camera streams, even in occluded or dynamically changing environments.
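
The retry-or-advance decision can be sketched as a loop around a success check. Everything here is hypothetical: `execute` stands in for a VLA call, `is_done` for a success-detection query to the reasoning model, and `fuse_views` is a deliberately naive agreement rule across cameras (occluded views abstain), not how Gemini Robotics-ER 1.6 actually fuses camera streams.

```python
from typing import Callable, Dict, List, Optional, Sequence


def fuse_views(verdicts: Sequence[Optional[bool]]) -> bool:
    """Naive fusion: None means that camera's view is occluded. Declare success
    only if at least one camera sees the outcome and every such camera agrees."""
    visible = [v for v in verdicts if v is not None]
    return bool(visible) and all(visible)


def run_plan(
    steps: Sequence[str],
    execute: Callable[[str], None],
    is_done: Callable[[str], bool],
    max_retries: int = 2,
) -> List[str]:
    """Execute steps in order; retry a failed step, or abort once retries run out."""
    log: List[str] = []
    for step in steps:
        for attempt in range(1, max_retries + 2):
            execute(step)
            if is_done(step):
                log.append(f"{step}: success on attempt {attempt}")
                break  # progress to the next step of the plan
            log.append(f"{step}: attempt {attempt} failed")
        else:
            log.append(f"{step}: giving up")
            return log  # stop the plan instead of continuing blindly
    return log


attempts: Dict[str, int] = {}  # hypothetical world state: runs per step


def fake_execute(step: str) -> None:
    attempts[step] = attempts.get(step, 0) + 1


def fake_is_done(step: str) -> bool:
    # Pretend the first grasp fails: one camera is occluded, the other sees a miss.
    if step == "grasp cup" and attempts[step] == 1:
        return fuse_views([None, False])
    return fuse_views([True, None])


log = run_plan(["grasp cup", "place cup"], fake_execute, fake_is_done)
```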

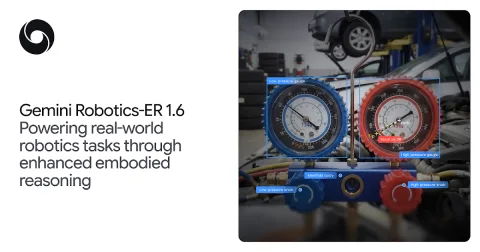

The genuinely new capability in Gemini Robotics-ER 1.6 is instrument reading: the ability to interpret analog gauges, pressure meters, sight glasses, and digital readouts in industrial settings. The task stems from facility-inspection needs, a critical focus area for Boston Dynamics, whose Spot robot can visit instruments throughout a facility and capture images of them for Gemini Robotics-ER 1.6 to interpret. Instrument reading requires complex visual reasoning: the model must precisely perceive a variety of elements, such as a needle, tick marks, and unit labels, and understand how they all relate to each other.
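
Once the needle and the scale endpoints have been located, turning a dial into a number is linear interpolation: value = v_min + (θ - θ_min) / (θ_max - θ_min) × (v_max - v_min). The sketch below assumes a linear scale and that the needle angle and the angles of the lowest and highest markings have already been extracted, for example from points the model returns. `DialScale`, `read_dial`, and the 0 to 10 bar gauge are hypothetical; non-linear or multi-turn dials would need a different mapping.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DialScale:
    """Angles in degrees, all measured in the same direction from one reference."""
    min_angle_deg: float  # needle angle at the lowest printed marking
    max_angle_deg: float  # needle angle at the highest printed marking
    min_value: float
    max_value: float
    unit: str


def read_dial(scale: DialScale, needle_angle_deg: float) -> float:
    """Map a needle angle to a value by linear interpolation along the scale."""
    span = scale.max_angle_deg - scale.min_angle_deg
    if span == 0:
        raise ValueError("scale must cover a non-zero angle")
    frac = (needle_angle_deg - scale.min_angle_deg) / span
    frac = min(max(frac, 0.0), 1.0)  # clamp to the printed scale
    return scale.min_value + frac * (scale.max_value - scale.min_value)


# Hypothetical pressure gauge: 0 to 10 bar over a 270-degree sweep.
PRESSURE = DialScale(-135.0, 135.0, 0.0, 10.0, "bar")
reading = read_dial(PRESSURE, 67.5)
print(f"{reading:.1f} {PRESSURE.unit}")  # prints "7.5 bar"
```

Clamping keeps a needle resting on its stop pin from producing values outside the printed range; a production reader might instead flag such cases for review.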
