Explore DenseNet Architecture and Its Implementation in PyTorch

When training a very deep neural network, one issue that may arise is the vanishing gradient problem, in which the weight updates during training slow down or even stop, preventing the model from improving. During backpropagation, the gradient for an early layer is computed by multiplying many derivative terms together, and when those terms are smaller than 1 the product can become extremely small. A very small gradient means very small weight updates, so the early layers learn slowly or not at all.
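To make the shrinking product concrete, here is a minimal sketch in plain Python. It assumes every layer contributes the same derivative term of 0.25 (the maximum slope of the sigmoid function) and ignores the weights entirely, so it is a simplification for illustration rather than a model of a real network. The function name `backprop_gradient_scale` is made up for this example.

```python
def backprop_gradient_scale(per_layer_derivative, num_layers):
    """Multiply one derivative term per layer, as the chain rule does.

    Simplifying assumption: every layer contributes the same term and
    the weights are ignored.
    """
    scale = 1.0
    for _ in range(num_layers):
        scale *= per_layer_derivative
    return scale


# 0.25 is the largest value the derivative of the sigmoid can take.
for depth in (5, 20, 50):
    print(depth, backprop_gradient_scale(0.25, depth))
```

Under these assumptions the scale is already below 0.001 after 5 layers and around 9e-13 after 20, which is why the earliest layers of a deep network can effectively stop learning.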

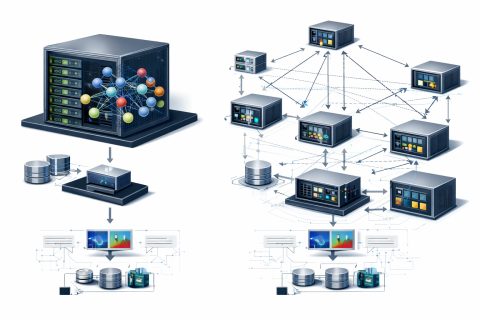

To address the vanishing gradient problem, shortcut paths can be used to let gradients flow more easily through a deep network. One of the most popular architectures built on this idea is ResNet, which uses skip connections that jump over several layers. DenseNet takes the idea further by using skip connections even more aggressively, which is intended to make it better than ResNet at handling the vanishing gradient problem.

The DenseNet architecture was originally proposed in the 2016 paper “Densely Connected Convolutional Networks”. One of its main goals is to address the vanishing gradient problem. DenseNet's advantage over ResNet comes from shortcut paths that branch out from each layer to every subsequent layer, allowing information to flow more directly between layers.

In a standard CNN, the number of connections equals the number of layers, because each layer feeds only the next one. DenseNet has significantly more: a network with L layers has L(L+1)/2 connections, so a 5-layer DenseNet has 5 + 4 + 3 + 2 + 1 = 15 connections, which lets later layers make direct use of information from all earlier ones. Instead of performing element-wise summation as in ResNet, DenseNet combines information through channel-wise concatenation, so the number of feature maps grows as the network deepens.
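The connection count and the effect of concatenation on channel counts can be sketched in a few lines of plain Python. The helper names below are hypothetical, and the values of 6 initial channels and 4 new feature maps per layer (the "growth rate" in the DenseNet paper) are illustrative assumptions, not figures taken from this article.

```python
def dense_connections(num_layers):
    """Connections in a densely connected stack of num_layers layers.

    Layer i receives the original input plus the outputs of all i - 1
    earlier layers, giving 1 + 2 + ... + num_layers in total.
    """
    return num_layers * (num_layers + 1) // 2


def input_channels_per_layer(initial_channels, growth_rate, num_layers):
    """Input channel count seen by each layer under concatenation.

    Every layer adds growth_rate new feature maps, and they are
    concatenated onto everything produced before.
    """
    return [initial_channels + i * growth_rate for i in range(num_layers)]


print(dense_connections(5))               # 15, matching the example above
print(input_channels_per_layer(6, 4, 4))  # [6, 10, 14, 18]
```

With element-wise summation, as in ResNet, the channel count would stay fixed across layers. With concatenation it grows linearly with depth.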

Despite these complex connections, DenseNet is much more efficient than a traditional CNN in terms of the number of parameters. For instance, a DenseNet with 4 convolutional layers has a total of 1728 parameters, while a comparable traditional CNN would need 7632. The difference comes from feature reuse: because each layer has access to all earlier feature maps, it only has to produce a small number of new ones.
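The article does not state the configuration behind these two figures. As a sketch, the numbers can be reproduced by assuming 3×3 kernels, no bias terms, 6 input channels, and 4 new feature maps per layer. These values are assumptions chosen because they match the quoted totals, and the function names are made up for this example.

```python
def conv_weights(in_channels, out_channels, kernel_size=3):
    """Weights in one convolution layer, without bias (assumption)."""
    return in_channels * out_channels * kernel_size * kernel_size


def dense_stack_params(initial_channels, growth_rate, num_layers):
    """Dense stack: each layer sees all channels so far, outputs growth_rate."""
    total, in_ch = 0, initial_channels
    for _ in range(num_layers):
        total += conv_weights(in_ch, growth_rate)
        in_ch += growth_rate  # new maps are concatenated onto the input
    return total


def plain_stack_params(initial_channels, growth_rate, num_layers):
    """Plain stack reaching the same widths: each layer outputs every channel."""
    total, in_ch = 0, initial_channels
    for _ in range(num_layers):
        out_ch = in_ch + growth_rate
        total += conv_weights(in_ch, out_ch)
        in_ch = out_ch
    return total


print(dense_stack_params(6, 4, 4))  # 1728
print(plain_stack_params(6, 4, 4))  # 7632
```

In the dense stack, every layer only learns 4 new feature maps and reuses the rest. The plain stack has to regenerate all 10, 14, 18, and 22 output channels from scratch, which is where the extra parameters go.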
