Unlock Insights from Video with Amazon Bedrock Models

Video content is now ubiquitous, spanning security surveillance, media production, social platforms, and enterprise communications. However, extracting meaningful insights from large volumes of video remains a significant challenge. Organizations require solutions that can comprehend not only what is depicted in a video but also the context, narrative, and underlying meaning of the content. This article explores how Amazon Bedrock's multimodal models enable scalable video understanding through three distinct architectural approaches. Each approach is tailored for different use cases and cost-performance trade-offs.

Traditional video analysis methods rely on manual review or basic computer vision techniques that detect predefined patterns. While functional, these methods face considerable limitations: time-consuming manual reviews, limited flexibility, context blindness, and integration complexities with modern applications. The advent of multimodal foundation models on Amazon Bedrock shifts this paradigm, allowing models to process both visual and textual information simultaneously. This capability enables them to understand scenes, generate natural language descriptions, answer questions about video content, and detect nuanced events that would be challenging to define programmatically.

Understanding video content is inherently complex, combining visual, auditory, and temporal information that must be analyzed together for meaningful insights. Different use cases, such as media scene analysis, ad break detection, IP camera tracking, or social media moderation, require distinct workflows with varying cost, accuracy, and latency trade-offs. This solution provides three distinct workflows, each utilizing different video extraction methods optimized for specific scenarios.

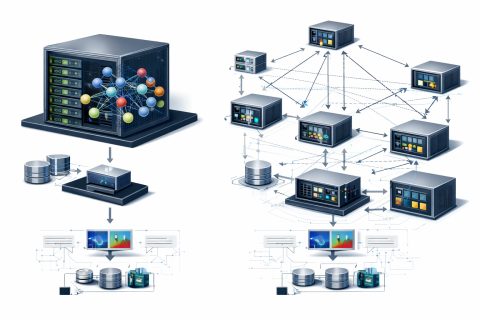

The frame-based approach samples image frames at fixed intervals, removes similar or redundant frames, and applies image understanding foundation models to extract visual information at the frame level. Audio transcription is performed separately using Amazon Transcribe. This workflow is ideal for security and surveillance, quality assurance, and compliance monitoring. The architecture employs AWS Step Functions to orchestrate the entire pipeline, including intelligent frame deduplication, which significantly reduces processing costs by eliminating redundant frames.

The shot-based workflow segments video into short clips (shots) and applies video understanding foundation models to each segment. This approach captures temporal context within each shot while maintaining flexibility to process longer videos. By generating both semantic labels and embeddings for each shot, this method enables efficient video search and retrieval while balancing accuracy and flexibility. The architecture groups shots into batches for parallel processing in subsequent steps, enhancing throughput and managing AWS Lambda concurrency limits.

Thus, Amazon Bedrock's multimodal models represent a powerful tool for addressing video analysis tasks, allowing organizations to extract valuable insights and automate content analysis processes.

NVIDIA Advances Autonomous Networks with Agentic AI and Reasoning Models

Build Reliable AI Agents with Amazon Bedrock

Related articles

Meta Researchers Introduce Hyperagents for Self-Improving AI

Meta researchers have introduced hyperagents that enhance AI for non-coding tasks.

OpenAI updates its Agents SDK to help enterprises build safer solutions

OpenAI has updated its Agents SDK, adding new features for businesses.

Optimizing GPU Usage for Language Models and Reducing Costs

Optimizing GPU usage for language models reduces costs and increases efficiency.