Build Reliable AI Agents with Amazon Bedrock

Amazon Bedrock introduces AgentCore Evaluations, a fully managed service for assessing AI agent performance across the entire development lifecycle. In this post, we discuss how the service measures agent accuracy across multiple quality dimensions. We explain the two evaluation approaches for development and production, and share practical guidance for building agents that can be confidently deployed.

With Amazon Bedrock AgentCore Evaluations, developers can assess how effective their AI agents are, which supports optimization and better user interaction quality. This makes the service a useful tool for teams building high-quality, reliable AI solutions.

We also discuss how to interpret evaluation results correctly and how to use them to improve models. This helps both when developing new agents and when refining existing ones, leading to more effective and safer AI systems.

Related articles

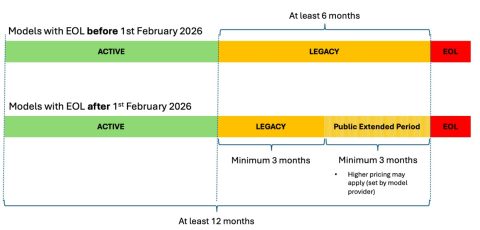

Understanding Amazon Bedrock Model Lifecycle

Learn about the Amazon Bedrock model lifecycle and migration strategies.

Reducing Checkpoint Costs with Python and NVIDIA nvCOMP

Compressing checkpoints with Python and NVIDIA nvCOMP reduces costs.

New technique speeds up AI model training while reducing size

Researchers developed the CompreSSM method, which speeds up AI training and reduces its size.