Maximize AI Infrastructure Throughput with GPU Workload Consolidation

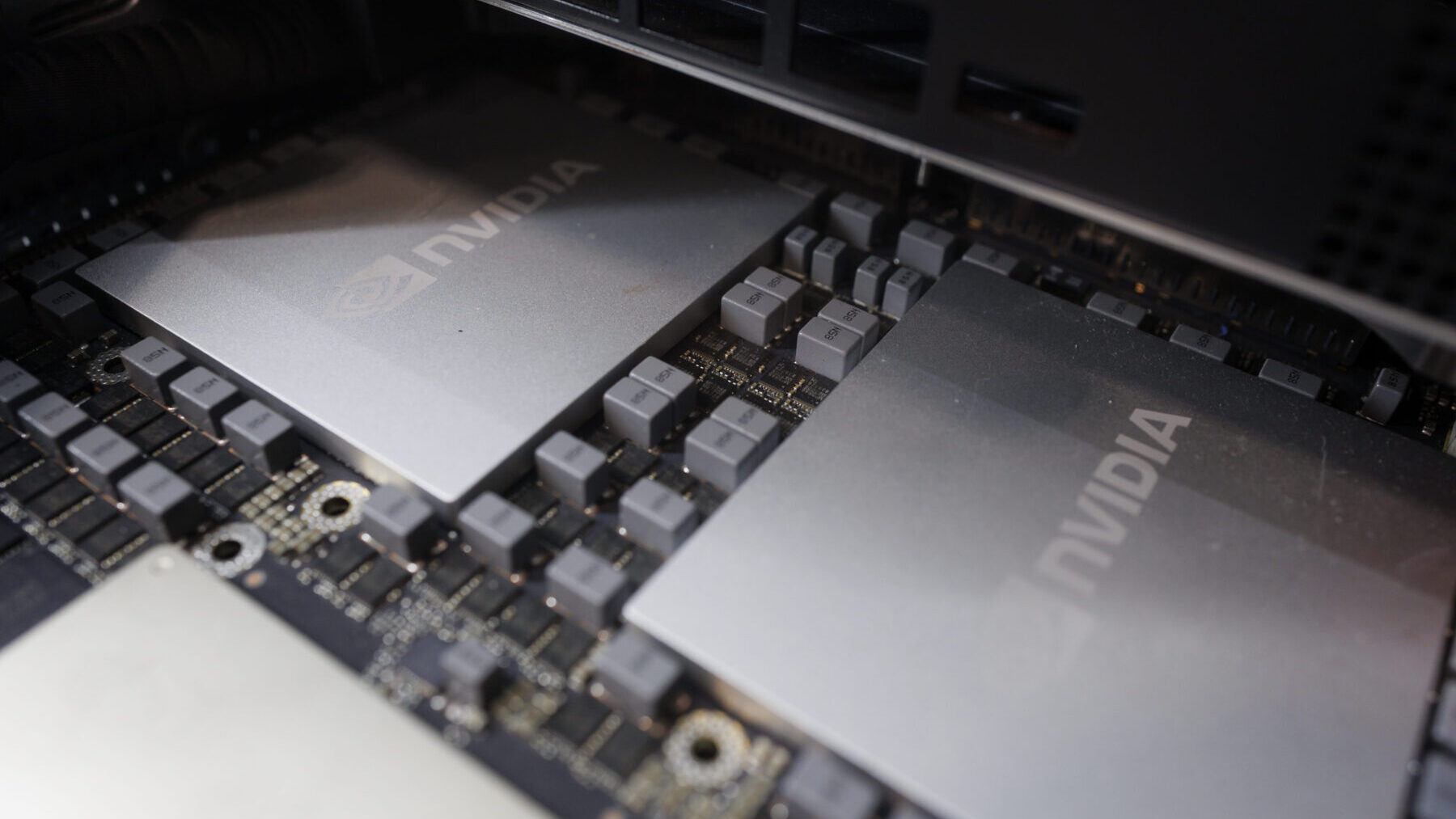

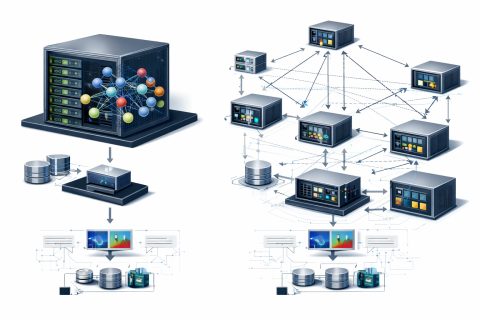

In production Kubernetes environments, a mismatch between what a model needs and the size of the GPU it runs on wastes capacity. Lightweight automatic speech recognition (ASR) or text-to-speech (TTS) models may require only 10 GB of VRAM yet occupy an entire GPU in standard Kubernetes deployments. Because the scheduler binds each model to one or more whole GPUs and cannot easily share a device across models, expensive compute often sits underutilized.

Addressing this issue is not just about cost reduction. It is about increasing cluster density so the same hardware can serve more concurrent users. This guide details how to implement and benchmark two GPU partitioning strategies, NVIDIA Multi-Instance GPU (MIG) and time-slicing, to make fuller use of available compute.

Using a production-grade voice AI pipeline as our testbed, we demonstrate how to consolidate models to maximize infrastructure ROI while maintaining over 99% reliability and strict latency guarantees.

By default, the NVIDIA Device Plugin for Kubernetes exposes GPUs as integer resources: a pod requests nvidia.com/gpu: 1, and the scheduler binds it to a whole physical device.
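To make that default concrete, here is a minimal sketch that builds such a Pod manifest in Python with only the standard library. The helper `gpu_pod_manifest` and the image `example.com/asr:latest` are hypothetical placeholders; only the `nvidia.com/gpu` resource name comes from the device plugin. kubectl accepts JSON manifests as well as YAML, so the printed output can be applied directly.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a minimal Pod manifest that requests whole GPUs.

    The device plugin advertises GPUs as the extended resource
    "nvidia.com/gpu". Extended resources are whole integers set under
    limits; Kubernetes defaults the request to the same value.
    """
    if not isinstance(gpus, int) or gpus < 1:
        raise ValueError("this helper only requests a positive whole number of GPUs")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # The scheduler binds this pod to one entire physical GPU.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ]
        },
    }


if __name__ == "__main__":
    # Hypothetical ASR pod; the image reference is a placeholder.
    manifest = gpu_pod_manifest("asr", "example.com/asr:latest")
    print(json.dumps(manifest, indent=2))
```

Even if the ASR container only touches 10 GB of VRAM, nothing else can be scheduled onto that GPU while the pod runs.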

Large language models (LLMs) like NVIDIA Nemotron, Llama 3, or Qwen 7B/8B require dedicated compute to maintain low time to first token and high batch throughput. However, support models in a generative AI pipeline, such as embedding models, ASR, TTS, or guardrails, often use only a fraction of a card. Giving each of these lightweight models a dedicated GPU leaves most of each card idle and bloats the cluster, requiring more nodes to run the same number of pods.
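The cost of one-model-per-GPU placement can be estimated with simple bin packing. The sketch below assigns models to GPUs by VRAM footprint using first-fit decreasing; it is an illustration of the arithmetic, not how the Kubernetes scheduler places pods. The model names, footprints, and 80 GB capacity are hypothetical placeholders, and compute contention between co-located models is ignored.

```python
from typing import Dict, List


def pack_first_fit_decreasing(models: Dict[str, float], gpu_mem_gb: float) -> List[List[str]]:
    """Assign models to GPUs by VRAM only, using first-fit decreasing.

    Returns one list of model names per GPU. Compute contention is ignored.
    """
    gpus: List[List[str]] = []
    free: List[float] = []  # remaining VRAM per GPU, parallel to `gpus`
    for name, need in sorted(models.items(), key=lambda kv: kv[1], reverse=True):
        if need > gpu_mem_gb:
            raise ValueError(f"{name} needs {need} GB, more than a single GPU offers")
        for i, remaining in enumerate(free):
            if need <= remaining:
                gpus[i].append(name)
                free[i] -= need
                break
        else:
            # No existing GPU has room, so open a new one.
            gpus.append([name])
            free.append(gpu_mem_gb - need)
    return gpus


if __name__ == "__main__":
    # Hypothetical support-model footprints in GB.
    support = {"guardrail": 16, "asr": 10, "tts": 10, "embed": 6}
    packed = pack_first_fit_decreasing(support, gpu_mem_gb=80)
    print(f"dedicated: {len(support)} GPUs, consolidated: {len(packed)} GPU(s)")
    print(packed)
```

With these placeholder footprints, all four models fit on one 80 GB card instead of four. In practice the packing is bounded by compute and latency headroom as well as memory, which is what the partitioning strategies below have to manage.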

To solve this, we must break the 1:1 relationship between pods and GPUs. We evaluated two primary GPU partitioning strategies supported by the NVIDIA GPU Operator: software-based time-slicing and hardware-based MIG. Time-slicing lets multiple NVIDIA CUDA processes share a GPU by interleaving their execution over time, much as a CPU scheduler shares a core among processes.
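With the GPU Operator, time-slicing is enabled through a device-plugin configuration (stored in a ConfigMap) that tells the plugin to advertise each physical GPU as several `nvidia.com/gpu` replicas. The sketch below builds that configuration as a Python dict. The `version` / `sharing` / `timeSlicing` layout follows the device plugin's documented format, but confirm the field names against the plugin version you deploy; the replica count is an arbitrary example, and `advertised_gpus` is a hypothetical helper.

```python
import json


def time_slicing_config(replicas: int, resource: str = "nvidia.com/gpu") -> dict:
    """Device-plugin sharing config that advertises each GPU as `replicas` slots."""
    if not isinstance(replicas, int) or replicas < 1:
        raise ValueError("replicas must be a positive integer")
    return {
        "version": "v1",
        "sharing": {
            "timeSlicing": {
                "resources": [{"name": resource, "replicas": replicas}],
            }
        },
    }


def advertised_gpus(physical_gpus: int, replicas: int) -> int:
    """Hypothetical helper: nvidia.com/gpu capacity a node reports after time-slicing.

    Assumes the resource keeps its original name (no renaming to a
    ".shared" suffix).
    """
    return physical_gpus * replicas


if __name__ == "__main__":
    # Example value only: let up to 4 pods share each physical GPU.
    print(json.dumps(time_slicing_config(4), indent=2))
    print(advertised_gpus(physical_gpus=8, replicas=4))
```

Replicas are scheduling slots, not memory partitions: every pod sharing a time-sliced GPU can see and allocate the full device memory, so memory budgets must be enforced by the workloads themselves.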

In addition to time-slicing, NVIDIA Multi-Process Service (MPS) offers an alternative software-based approach that lets multiple processes run on a GPU concurrently rather than in turns. However, neither software approach provides hardware isolation: co-located workloads still contend for the same compute, memory bandwidth, and cache, which limits isolation in production. MIG, by contrast, physically partitions the GPU into separate instances, each with its own dedicated compute, memory, and cache, providing strict quality of service.
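Unlike time-slicing, MIG requires choosing a profile whose dedicated memory covers the model. The sketch below picks the smallest fitting GPU-instance profile and derives the extended-resource name the device plugin uses under the `mixed` MIG strategy (`nvidia.com/mig-<profile>`). The profile table is an assumed, illustrative subset for an 80 GB A100; list the profiles your hardware actually supports with `nvidia-smi mig -lgip`. Usable memory is slightly below the nominal figure in each profile name, so leave headroom when sizing a model.

```python
from typing import Dict, Tuple

# ASSUMPTION: illustrative subset of GPU-instance profiles for an 80 GB A100,
# mapped to (nominal memory in GB, max instances per GPU).
# Confirm on real hardware with `nvidia-smi mig -lgip`.
A100_80GB_PROFILES: Dict[str, Tuple[int, int]] = {
    "1g.10gb": (10, 7),
    "2g.20gb": (20, 3),
    "3g.40gb": (40, 2),
    "4g.40gb": (40, 1),
    "7g.80gb": (80, 1),
}


def smallest_profile(need_gb: float, profiles: Dict[str, Tuple[int, int]] = A100_80GB_PROFILES) -> str:
    """Return the profile with the least memory (then fewest compute slices) that fits."""
    fitting = [
        # Sort key: nominal memory, then the compute-slice count before "g.".
        (mem_gb, int(name.split("g.")[0]), name)
        for name, (mem_gb, _max_instances) in profiles.items()
        if mem_gb >= need_gb
    ]
    if not fitting:
        raise ValueError(f"no single MIG profile offers {need_gb} GB")
    return min(fitting)[2]


def mig_resource_name(profile: str) -> str:
    """Extended-resource name exposed under the device plugin's mixed MIG strategy."""
    return f"nvidia.com/mig-{profile}"


if __name__ == "__main__":
    for need in (10, 16, 30):  # hypothetical model footprints in GB
        profile = smallest_profile(need)
        copies = A100_80GB_PROFILES[profile][1]
        print(f"{need} GB -> {mig_resource_name(profile)} (up to {copies} per GPU)")
```

A pod then requests, for example, `nvidia.com/mig-1g.10gb: 1` instead of `nvidia.com/gpu: 1`, and receives an isolated slice rather than a whole card.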

Consolidating support models such as ASR and TTS is a practical way to raise hardware utilization while keeping the pipeline responsive end to end. Our hypothesis is that placing ASR and TTS workloads on a single GPU preserves their latency and jitter while freeing GPUs for additional LLM instances.
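To test this hypothesis, the same requests can be replayed against a dedicated and a consolidated deployment and their latency distributions compared. The sketch below computes p50, p99, and jitter from per-request latencies with Python's `statistics` module. Treating jitter as the sample standard deviation is an assumption here, as is the `p99_regression` helper; neither is necessarily the metric definition used in the benchmark.

```python
import statistics
from typing import Dict, Sequence


def latency_summary(samples_ms: Sequence[float]) -> Dict[str, float]:
    """p50, p99, and jitter (ASSUMED: sample standard deviation) in milliseconds."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two latency samples")
    # n=100 yields 99 cut points; index 98 is the 99th percentile.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {
        "p50": statistics.median(samples_ms),
        "p99": cuts[98],
        "jitter": statistics.stdev(samples_ms),
    }


def p99_regression(dedicated_ms: Sequence[float], consolidated_ms: Sequence[float]) -> float:
    """Hypothetical helper: relative p99 change after consolidation (0.05 means +5%)."""
    base = latency_summary(dedicated_ms)["p99"]
    after = latency_summary(consolidated_ms)["p99"]
    return (after - base) / base
```

Comparing tail latency and jitter, not just averages, matters here because co-located models mostly show interference in the tail.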
