Accelerate Token Production in AI Factories with NVIDIA Mission Control

In today's AI factory, performance is not a theoretical concern; it is an economic, competitive, and existential one. A 1% drop in usable GPU time can mean millions of tokens lost per hour. Minutes of congestion can cascade into hours of recovery, and rack-level power oversubscription can strand power and reduce tokens per watt, silently eroding factory output at scale. As AI factories scale to thousands of GPUs running diverse mission-critical workloads, the cost of unpredictable congestion, power constraints, long-tail latency, and limited visibility rises sharply. Operations teams and administrators need more than dashboards; they need flexibility and foresight.
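To make the scale of a 1% loss concrete, here is a back-of-the-envelope sketch. The fleet size and per-GPU throughput are illustrative assumptions, not figures from NVIDIA, and the function name `tokens_lost_per_hour` is hypothetical.

```python
def tokens_lost_per_hour(num_gpus: int,
                         tokens_per_gpu_per_sec: float,
                         lost_fraction: float) -> float:
    """Tokens not produced in one hour when a fraction of GPU time is unusable."""
    if not 0.0 <= lost_fraction <= 1.0:
        raise ValueError("lost_fraction must be between 0 and 1")
    seconds_per_hour = 3600
    return num_gpus * tokens_per_gpu_per_sec * seconds_per_hour * lost_fraction


# Assumed (hypothetical) factory: 1,000 GPUs, 500 tokens/s each, 1% of GPU time lost.
lost = tokens_lost_per_hour(num_gpus=1_000,
                            tokens_per_gpu_per_sec=500.0,
                            lost_fraction=0.01)
print(f"{lost:,.0f} tokens lost per hour")
```

Under these assumptions the loss is about 18 million tokens per hour, and it grows linearly with fleet size and per-GPU throughput.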

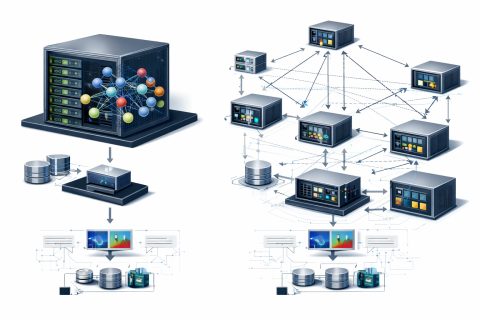

NVIDIA launched NVIDIA Mission Control as an integrated software stack for AI factories built on NVIDIA reference architectures, codifying NVIDIA best practices in a unified control plane. Mission Control 3.0 builds on that foundation with architectural flexibility, multi-org isolation, intelligent power orchestration, and predictive AIOps that detects operational anomalies and helps maximize token production. Version 3.0 also introduces a layered, API-driven architecture built on modular services, improving on the previously tightly coupled stacks, which required synchronized releases and complex validation across hardware platforms.

New components such as automated network management and the domain power service, which provides a new management plane for power optimizations, extend the Mission Control stack by bringing additional modular services into the single control plane. Combining open components with a modular design enables rapid support for the latest NVIDIA hardware and lets OEM system providers and independent software vendors (ISVs) integrate Mission Control capabilities directly into their own ecosystems. As a result, enterprises have more flexibility and choice in their software stacks, making it easier to customize solutions to their unique business and technology challenges.

One technological challenge many organizations face is supporting multi-org isolation within a centralized AI factory. As AI factories evolve from research and experimentation into production-grade, mission-critical environments, infrastructure shared across multiple teams requires strong organizational isolation and secure multi-tenancy. The enhanced Mission Control control plane transforms the AI factory management stack into a software-defined, virtualized architecture: Mission Control services are decoupled from physical management nodes and deployed on Kernel-based Virtual Machine (KVM) platforms using NVIDIA-provided automation.

Power management in previous iterations of Mission Control helped organizations responsibly manage complex power considerations, but it was reactive: jobs were scheduled first, and power policies were enforced afterward. While this was a major step toward balancing power and performance, more dynamic solutions were needed to manage power at scale, especially across mixed Slurm and Kubernetes environments. Mission Control 3.0 addresses this by incorporating the domain power service directly into the stack, so that power becomes a first-class scheduling primitive that helps organizations optimize token production in line with their power policies.
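The difference between reactive enforcement and power as a scheduling input can be illustrated with a minimal sketch. This is not the Mission Control or domain power service API; the `Rack`, `Job`, and `place_job` names, the kilowatt figures, and the best-fit policy are all assumptions chosen for illustration. The scheduler checks a rack's remaining power headroom before placing a job, rather than placing first and capping afterward.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rack:
    name: str
    power_budget_kw: float      # provisioned power for the rack (assumed value)
    allocated_kw: float = 0.0   # power already promised to placed jobs

    @property
    def headroom_kw(self) -> float:
        return self.power_budget_kw - self.allocated_kw


@dataclass
class Job:
    name: str
    estimated_kw: float         # estimated peak draw of the job (assumed value)


def place_job(job: Job, racks: list[Rack]) -> Optional[Rack]:
    """Place the job on the rack with the least headroom that still fits it.

    Best fit is one possible policy; it tends to leave larger contiguous
    headroom on other racks, which limits stranded power.
    """
    candidates = [r for r in racks if r.headroom_kw >= job.estimated_kw]
    if not candidates:
        return None  # no rack can host the job within its power budget
    best = min(candidates, key=lambda r: r.headroom_kw)
    best.allocated_kw += job.estimated_kw
    return best


# Hypothetical racks: rack-a has 20 kW of headroom, rack-b has 80 kW.
racks = [Rack("rack-a", 120.0, 100.0), Rack("rack-b", 120.0, 40.0)]
first = place_job(Job("train-1", 30.0), racks)   # fits only on rack-b
second = place_job(Job("train-2", 60.0), racks)  # no rack has 60 kW left
print(first.name if first else "queued", second.name if second else "queued")
```

In this sketch a job that does not fit stays queued instead of being started and throttled later, which is the behavioral shift the paragraph above describes. A production scheduler would also weigh GPU availability, topology, and priority.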
