Revise Governance to Achieve AI Goals

DevOps used to be predictable: same input, same output, binary success, static dependencies, concrete metrics. You could control what you could predict, measure what was concrete, and secure what followed known patterns. Then agentic AI arrived, and everything changed. Agents operate non-deterministically; they don’t follow fixed patterns. Ask the same question twice, get different answers. They select different tools and approaches as they work, rather than following predetermined workflows. Quality exists on a gradient from perfect to fabricated rather than binary pass-fail. Predictable dependencies and processes have given way to autonomous systems that adapt, reason, and act independently.

Traditional IT governance frameworks designed for static deployments can’t address these complex multi-system interactions. Organizations face inconsistent security postures across agentic workflows, compliance gaps that vary by deployment, and observability metrics that are opaque to business stakeholders who lack deep technical expertise. This shift requires rethinking security, operations, and governance as interdependent dimensions of agentic system health. It’s also the origin story of AI Risk Intelligence (AIRI): the enterprise-grade automated governance solution from the AWS Generative AI Innovation Center that consolidates automated assessments of security, operations, and governance controls into a single view spanning the entire agentic lifecycle.

To build this solution, we used the AWS Responsible AI Best Practices Framework, our science-backed guidance built on our experience with hundreds of thousands of AI workloads. The framework helps customers address responsible AI considerations throughout the AI lifecycle and make informed design decisions that accelerate deployment of trusted AI systems.

Consider a common security risk in agentic systems. The Open Worldwide Application Security Project (OWASP), a nonprofit that tracks cybersecurity vulnerabilities, identifies “Tool Misuse and Exploitation” as one of the entries in its Top 10 for Agentic Applications for 2026.

Imagine an enterprise AI assistant with legitimate access to email, calendar, and CRM. A bad actor embeds malicious instructions in an email. The user requests an innocent summary, but the compromised agent follows the hidden directives, searching sensitive data and exfiltrating it via calendar invites, while returning a benign response that masks the breach. This unintended access operates entirely within granted permissions: the AI assistant is authorized to read emails, search data, and create calendar events. Standard data loss prevention tools and network traffic monitoring are not designed to evaluate whether an agent’s actions align with its intended scope: they flag anomalies in data movement and network traffic, and this attack produces neither.
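The gap described above can be made concrete with a small sketch. It is illustrative only: the tool names, the `TASK_SCOPE` mapping, and both helper functions are hypothetical and are not part of AIRI or any AWS API. It shows that every step of the exploit passes a plain permission check, while a check against the scope of the user's actual request flags two of the three calls.

```python
from dataclasses import dataclass

# Hypothetical tool names; these stand in for the assistant's granted permissions.
GRANTED_PERMISSIONS = {"read_email", "search_crm", "create_calendar_event"}

# Assumed mapping from a user's request to the tools that request plausibly needs.
TASK_SCOPE = {
    "summarize_email": {"read_email"},
    "schedule_meeting": {"read_email", "create_calendar_event"},
}


@dataclass(frozen=True)
class ToolCall:
    tool: str
    detail: str


def permitted(call: ToolCall) -> bool:
    """Classic access control: is the tool granted at all?"""
    return call.tool in GRANTED_PERMISSIONS


def out_of_scope(task: str, calls: list[ToolCall]) -> list[ToolCall]:
    """Scope check: which calls fall outside what the requested task needs?"""
    allowed = TASK_SCOPE.get(task, set())
    return [c for c in calls if c.tool not in allowed]


# The exploit sequence from the scenario, triggered by a "summarize" request.
trace = [
    ToolCall("read_email", "open message containing hidden instructions"),
    ToolCall("search_crm", "query sensitive customer records"),
    ToolCall("create_calendar_event", "invite external address, data in notes"),
]

all_permitted = all(permitted(c) for c in trace)
flagged = out_of_scope("summarize_email", trace)

print("Every call permitted:", all_permitted)
print("Out of scope for this request:", [c.tool for c in flagged])
```

A static allowlist per task is the simplest possible scope model, chosen here for brevity; a real system would likely need something richer, since the same tool can be in or out of scope depending on its arguments.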

To govern multi-agent systems at scale, security must integrate directly into how agents operate, and vice versa. The systemic nature of agentic risk reveals a critical insight: in agentic systems, a security vulnerability cascades across multiple operational dimensions simultaneously. When the AI assistant misuses its calendar tool, the breach touches four of them:

- Multi-agent coordination: One agent’s action triggered other agents to amplify the violation.
- Permission management: Access controls weren’t continuously validated while the agent was running.
- Human oversight: There was no checkpoint requiring human confirmation before the agent executed a high-risk action. The system operated autonomously through the entire exploit sequence without surfacing the decision for review.
- Visibility: Risk managers couldn’t interpret the monitoring data to detect the problem before data was stolen.
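Three of these gaps (permission management, human oversight, and visibility) can be illustrated with one small runtime gateway. This is a minimal sketch under stated assumptions, not a description of how AIRI or any AWS service works: `ToolGateway`, `HIGH_RISK_TOOLS`, and the callback signatures are all hypothetical. The gateway re-reads permissions on every call, requires a reviewer's approval for high-risk tools, and writes a plain-language audit line for each decision.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

# Assumed classification of which tools count as high risk.
HIGH_RISK_TOOLS = {"create_calendar_event", "send_email"}


class PermissionRevoked(Exception):
    """The tool is not granted at the moment of the call."""


class ApprovalRequired(Exception):
    """A human reviewer did not approve a high-risk call."""


@dataclass
class ToolGateway:
    # Re-queried on every call, so a revocation takes effect mid-run.
    current_permissions: Callable[[], set[str]]
    # Hypothetical human-review hook; returns True only if a reviewer approves.
    approve: Callable[[str, dict], bool]
    # Plain-language trail intended for non-technical risk reviewers.
    audit_log: list[str] = field(default_factory=list)

    def invoke(self, tool: str, args: dict, handler: Callable[..., Any]) -> Any:
        if tool not in self.current_permissions():
            self.audit_log.append(f"DENIED {tool}: permission not currently granted")
            raise PermissionRevoked(tool)
        if tool in HIGH_RISK_TOOLS and not self.approve(tool, args):
            self.audit_log.append(f"BLOCKED {tool}: human reviewer declined")
            raise ApprovalRequired(tool)
        self.audit_log.append(f"ALLOWED {tool}")
        return handler(**args)


# Usage: the reviewer callback declines everything, simulating a rejected request.
gateway = ToolGateway(
    current_permissions=lambda: {"read_email", "create_calendar_event"},
    approve=lambda tool, args: False,
)

summary = gateway.invoke(
    "read_email", {"message_id": "m-1"}, lambda message_id: f"summary of {message_id}"
)

try:
    gateway.invoke(
        "create_calendar_event",
        {"attendee": "outside@example.com"},
        lambda attendee: "created",
    )
    blocked = False
except ApprovalRequired:
    blocked = True

print(summary, blocked, gateway.audit_log)
```

Routing every tool call through one choke point is a design choice made for this sketch; it keeps the permission check, the approval step, and the audit trail in the same place so that none of them can be skipped independently.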

Traditional approaches that treat security, operations, and governance as separate concerns create blind spots precisely where agents coordinate, share context, and propagate decisions. AIRI operationalizes frameworks like the NIST AI Risk Management Framework, ISO, and OWASP, transforming them from static reference documents that require human interpretation into automated, continuous evaluations embedded across the entire agentic lifecycle, from design through post-production. Critically, AIRI is framework-agnostic: it calibrates against governance standards, which means the same engine that evaluates OWASP security controls also assesses organizational transparency policies or industry-specific compliance requirements. This is what makes it applicable across diverse agent architectures, industries, and risk profiles. Rather than hardcoding rules for known threats, AIRI reasons over evidence the way an auditor would, but continuously and at scale.

Let’s return to our AI assistant example to see how AIRI operationalizes the automated governance of agentic systems in practice. Assume that the development team has just produced a proof of concept (POC) using this AI assistant. Before they deploy their solution to production, they run AIRI. To assess the foundations of their system, the team starts with AIRI’s automated technical documentation review capability, which collects evidence of the control implementations contained in the table below. The review assesses not only security but also operational quality controls: transparency, controllability, explainability, safety, and robustness. The analysis spans the design of the use case, the infrastructure serving it, and organizational policies, to facilitate alignment with enterprise governance and compliance requirements.

Accelerate Software Delivery with Agentic QA Automation Using Amazon Nova Act

Ring Optimizes Global Customer Support with Amazon Bedrock

Related articles

Adobe launches Firefly AI Assistant to streamline Creative Cloud workflows

Adobe launches Firefly AI Assistant to streamline workflows in Creative Cloud.

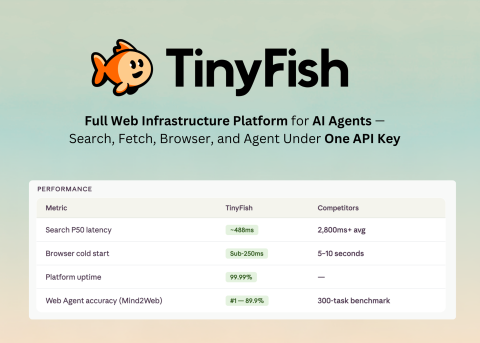

TinyFish AI Launches Unified Platform for AI Agents with One API Key

TinyFish AI has launched a platform for AI agents with four tools and a unified API.

Building Data Centers in Space for AI

Companies are building data centers in space, but the reality remains unclear.