Building Document Intelligence Pipelines with LangExtract and OpenAI

This tutorial explores how to use Google's LangExtract library to transform unstructured text into structured, machine-readable information. We begin by installing the required dependencies and securely configuring our OpenAI API key to leverage powerful language models for extraction tasks. We will also build a reusable extraction pipeline that enables us to process a range of document types, including contracts, meeting notes, product announcements, and operational logs.

Through carefully designed prompts and example annotations, we demonstrate how LangExtract can identify entities, actions, deadlines, risks, and other structured attributes while grounding them to their exact source spans. We also visualize the extracted information and organize it into tabular datasets, enabling downstream analytics, automation workflows, and decision-making systems.

We install the required libraries, including LangExtract, Pandas, and IPython, so that our Colab environment is ready for structured extraction tasks. We securely request the OpenAI API key from the user and store it as an environment variable for safe access during runtime. We then import the core libraries needed to run LangExtract, display results, and handle structured outputs.

We define the core utility functions that power the entire extraction pipeline. We create a reusable run_extraction function that sends text to the LangExtract engine and generates both JSONL and HTML outputs. We also define helper functions to convert the extraction results into tabular rows and preview them interactively in the notebook.

For extracting information from contracts, we use specific prompts that set rules for extracting risk information. We create example data that helps LangExtract understand how to extract the necessary classes of information, such as parties, obligations, deadlines, and payment terms.

Optimizing Long Context LLM Inference with NVIDIA KVPress

Google AI Research Introduces PaperOrchestra for Automated Paper Writing

Related articles

Qwen Team Introduces Qwen3.6-35B-A3B: A New Open AI Model

The Qwen team has introduced a new AI model, Qwen3.6-35B-A3B, with innovative capabilities.

OpenAI unveils GPT-Rosalind to accelerate life sciences research

OpenAI has introduced GPT-Rosalind, a model to accelerate life sciences research.

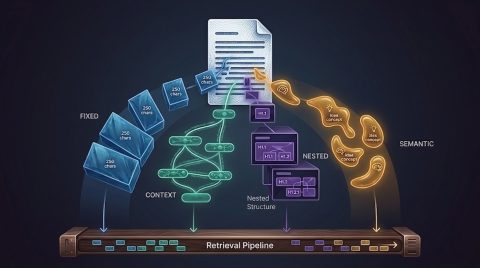

Error in RAG: How Incorrect Data Chunking Affects Outcomes

Incorrect data chunking can lead to system errors, reducing user trust.