Creating a Context Layer to Enhance LLM System Performance

This article covers building a context layer, which LLM systems need in order to work reliably. Many AI tutorials stop at retrieval or prompting, but real systems need an additional layer that manages memory, compression, re-ranking, and token limits.

RAG (Retrieval-Augmented Generation) systems run into trouble once a conversation goes beyond a few turns. The main problem lies not in retrieval but in what actually enters the context window. A context layer controls what information reaches the model, how much of it, and in what order.

The author shares their experience of building a RAG system that worked flawlessly until conversation history was added. At that point, failures began: relevant documents were lost, and the model started forgetting information. These issues arise not from retrieval failure but from a lack of control over what enters the context window.
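The failure mode can be reproduced with a toy example. The sketch below is not the author's code: `naive_prompt` is a hypothetical helper, the whitespace word count is a crude stand-in for a real tokenizer, and truncating from the end is only one of several ways a system might enforce its limit. It shows how a retrieved document that fits comfortably in a short chat gets cut once history grows.

```python
def naive_prompt(history: list[str], documents: list[str],
                 question: str, max_tokens: int) -> str:
    """Concatenate everything, then cut at the token limit (hypothetical helper).

    Tokens are approximated as whitespace-separated words; a real system
    would use the model's tokenizer.
    """
    parts = history + documents + [question]
    words = " ".join(parts).split()
    return " ".join(words[:max_tokens])


# Ten earlier turns of five words each (50 "tokens" of history).
history = [f"turn {i}: earlier small talk" for i in range(10)]
documents = ["REFUND_POLICY: refunds are accepted within 30 days"]
question = "What is the refund window?"

# With two turns of history, everything fits under the limit.
short_chat = naive_prompt(history[:2], documents, question, max_tokens=40)

# With ten turns, history alone fills the window and the document is cut.
long_chat = naive_prompt(history, documents, question, max_tokens=40)

print("REFUND_POLICY" in short_chat)
print("REFUND_POLICY" in long_chat)
```

Retrieval returned the right document in both cases; only the assembly step decided whether the model ever saw it.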

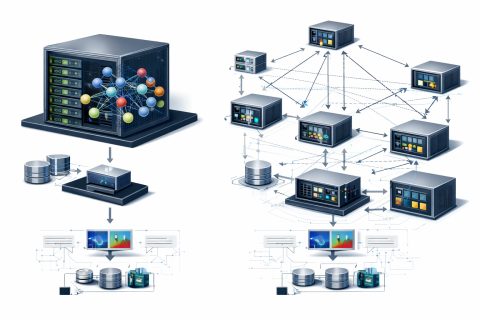

To address these problems, it is essential to introduce an architectural step between information retrieval and prompt creation. This step involves making decisions about what the model actually sees, how much information it receives, and in what order. This is referred to as context engineering.
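A minimal sketch of such a step might look like the following. Everything here is an assumption made for illustration rather than the article's implementation: the `Candidate` type, the `build_context` function, the 30% history share, the word-count tokenizer, and the ordering (system prompt, documents, recent history, question). The point is only that the three decisions (what, how much, in what order) become explicit and testable.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    score: float  # relevance from a retriever or re-ranker (assumed to exist)


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def build_context(system: str, history: list[str], candidates: list[Candidate],
                  question: str, budget: int, history_share: float = 0.3) -> list[str]:
    """Assemble prompt parts under a token budget (illustrative sketch)."""
    # The system prompt and the question are always included.
    remaining = budget - count_tokens(system) - count_tokens(question)
    if remaining < 0:
        raise ValueError("budget too small for system prompt and question")

    # How much: cap history at a share of the remaining budget,
    # keeping the most recent turns first.
    history_budget = int(remaining * history_share)
    kept_history: list[str] = []
    used = 0
    for turn in reversed(history):
        cost = count_tokens(turn)
        if used + cost > history_budget:
            break
        kept_history.append(turn)
        used += cost
    kept_history.reverse()  # restore chronological order

    # What: fill the rest with the highest-scoring documents that still fit.
    doc_budget = remaining - used
    kept_docs: list[str] = []
    for cand in sorted(candidates, key=lambda c: c.score, reverse=True):
        cost = count_tokens(cand.text)
        if cost <= doc_budget:
            kept_docs.append(cand.text)
            doc_budget -= cost

    # In what order: one possible layout, chosen here as an assumption.
    return [system, *kept_docs, *kept_history, question]


history = [f"turn {i}: earlier small talk" for i in range(10)]
candidates = [
    Candidate("CONTACT: email support for help", 0.2),
    Candidate("REFUND_POLICY: refunds are accepted within 30 days", 0.9),
]
context = build_context("Answer from the documents.", history, candidates,
                        "What is the refund window?", budget=40)
print("\n".join(context))
```

With the same 40-token limit that would be filled by ten turns of history alone, the highest-scoring document now survives and only the most recent turn is kept. A production version would swap in a real tokenizer and could summarize dropped turns instead of discarding them.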

The architecture described in the article suits multi-turn chatbots, RAG systems with large knowledge bases, and AI agents that need to stay coherent across many steps. For single-turn queries over a small knowledge base, however, it may be more than is needed.
