How Vision-Language-Action (VLA) Models Work for Robots

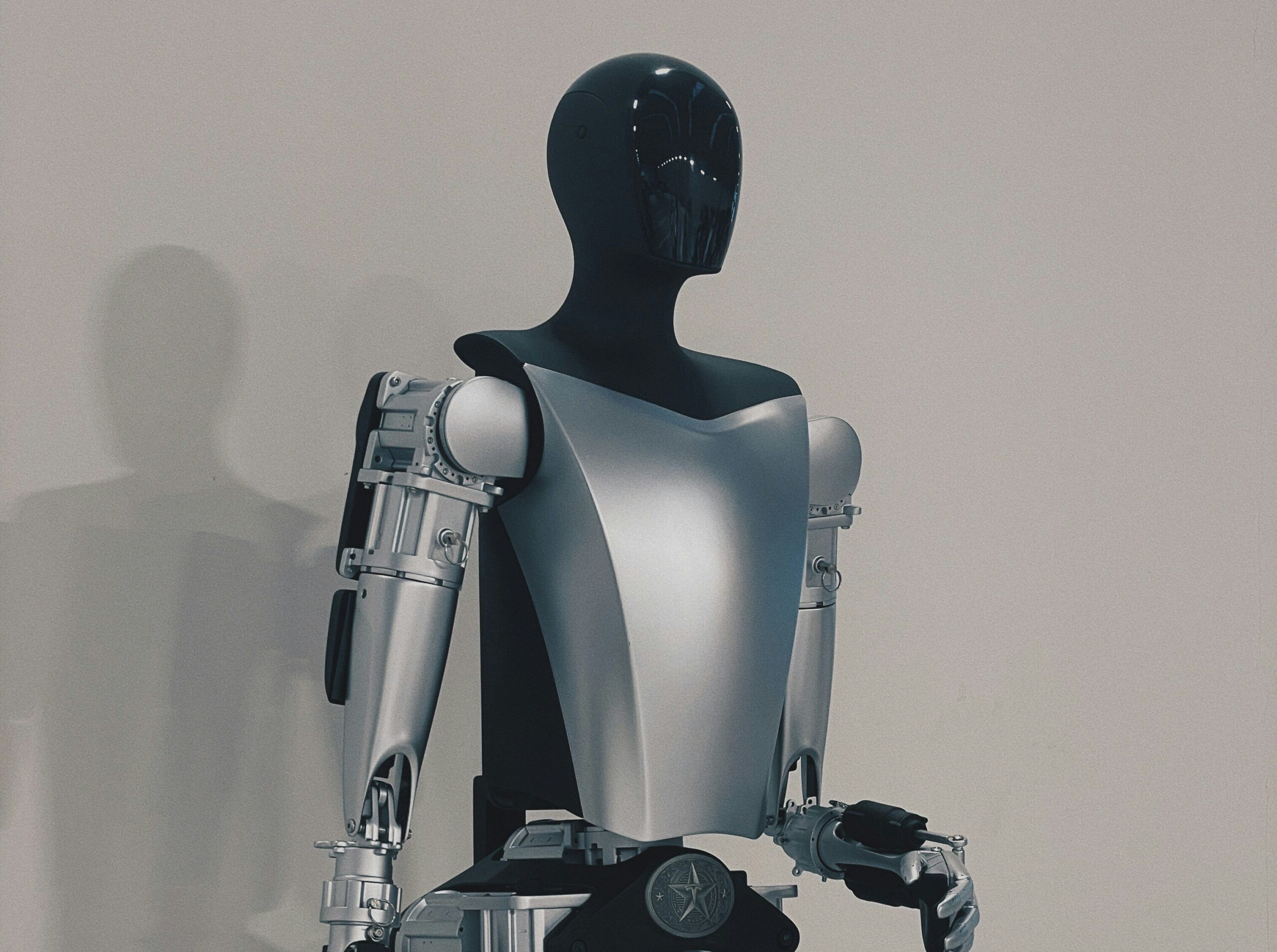

Vision-Language-Action (VLA) models combine visual perception, language understanding, and action generation in a single model, so a robot can interpret its environment and act in it. They help machines distinguish objects such as raisins, green peppers, and salt shakers, and perform complex tasks such as folding t-shirts. At the core of a VLA model is a transformer, which serves as the vision-language encoder and provides the contextual understanding that the robot's actions build on.

A key aspect of VLA models is representation learning: the model learns compact internal features from the data it collects, and the robot's actions are optimized on top of those features. Training includes imitation learning, where the robot learns from demonstrations provided by humans, and policy optimization, which refines the result into an adaptive and effective control strategy.
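The imitation-learning step can be illustrated with a minimal behavior-cloning sketch. It is not how any production VLA model is trained: the `expert_policy` function is a hypothetical stand-in for a human demonstrator, the observation and action are single numbers, and the policy is a line fitted by gradient descent. The point is only that imitation learning reduces to supervised regression on (observation, action) pairs.

```python
import random


def expert_policy(obs):
    """Hypothetical expert, standing in for a human demonstrator."""
    return 2.0 * obs - 1.0


def collect_demonstrations(n, seed=0):
    """Record (observation, action) pairs from the expert."""
    rng = random.Random(seed)
    demos = []
    for _ in range(n):
        obs = rng.uniform(-1.0, 1.0)
        demos.append((obs, expert_policy(obs)))
    return demos


def behavior_cloning(demos, lr=0.1, epochs=500):
    """Fit action = w * obs + b by minimizing mean squared error."""
    w, b = 0.0, 0.0
    n = len(demos)
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for obs, act in demos:
            err = (w * obs + b) - act  # imitation error on this demo
            grad_w += 2.0 * err * obs / n
            grad_b += 2.0 * err / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


demos = collect_demonstrations(200)
w, b = behavior_cloning(demos)
print(f"learned policy: action = {w:.3f} * obs + {b:.3f}")
```

The learned coefficients should land close to the expert's (2.0 and -1.0). A real system would replace the scalar observation with camera images and a language instruction, and the line with a transformer, but the training signal has the same shape.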

VLA development also relies on latent representations, which some researchers regard as foundational to intelligence. A latent representation lets a robot predict the consequences of its actions, for example “if I drop the glass, it will break.” Representation learning is therefore becoming increasingly important for building more complex and autonomous systems.
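Predicting the consequences of an action can be sketched as a learned forward model. The example below is a toy under stated assumptions: `true_dynamics` is a hypothetical environment, the state is a single number rather than a learned latent vector, and the model is linear. The robot fits the model from observed transitions, then uses it to evaluate candidate actions before committing to one.

```python
import random


def true_dynamics(state, action):
    """Hypothetical environment; the robot never sees this formula."""
    return 0.9 * state + 0.5 * action


def collect_transitions(n, seed=0):
    """Gather (state, action, next_state) triples by acting randomly."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        s = rng.uniform(-1.0, 1.0)
        u = rng.uniform(-1.0, 1.0)
        data.append((s, u, true_dynamics(s, u)))
    return data


def fit_forward_model(data, lr=0.5, epochs=300):
    """Fit next_state = a * state + c * action by gradient descent."""
    a, c = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        grad_a = 0.0
        grad_c = 0.0
        for s, u, s_next in data:
            err = (a * s + c * u) - s_next  # prediction error
            grad_a += 2.0 * err * s / n
            grad_c += 2.0 * err * u / n
        a -= lr * grad_a
        c -= lr * grad_c
    return a, c


def choose_action(model, state, goal, candidates):
    """Pick the candidate whose predicted outcome is closest to the goal."""
    a, c = model
    return min(candidates, key=lambda u: abs((a * state + c * u) - goal))


model = fit_forward_model(collect_transitions(200))
best = choose_action(model, state=0.2, goal=0.5,
                     candidates=[-1.0, -0.5, 0.0, 0.5, 1.0])
print(f"model: a={model[0]:.3f}, c={model[1]:.3f}, chosen action: {best}")
```

Because the outcome of each candidate is predicted rather than tried, a bad action (the dropped glass) can be rejected without being executed.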

Imitation learning also plays a vital role in developing efficient locomotion for robots. Work from Google DeepMind and the DeepMimic project, for instance, demonstrated that learning from expert demonstrations can significantly improve the efficiency of learned movements. Training on demonstrations helps robots adapt more quickly and improve their skills in complex environments.

Teleoperation is also widely used to train modern humanoids, improving the accuracy and fluidity of their movements. Teleoperation is not a shortcoming; it is essential for collecting training data and forming effective control policies. Given examples of correct actions from human operators, robots can learn complex tasks more quickly and perform them better.
