Physical Intelligence unveils a universal robot brain capable of learning

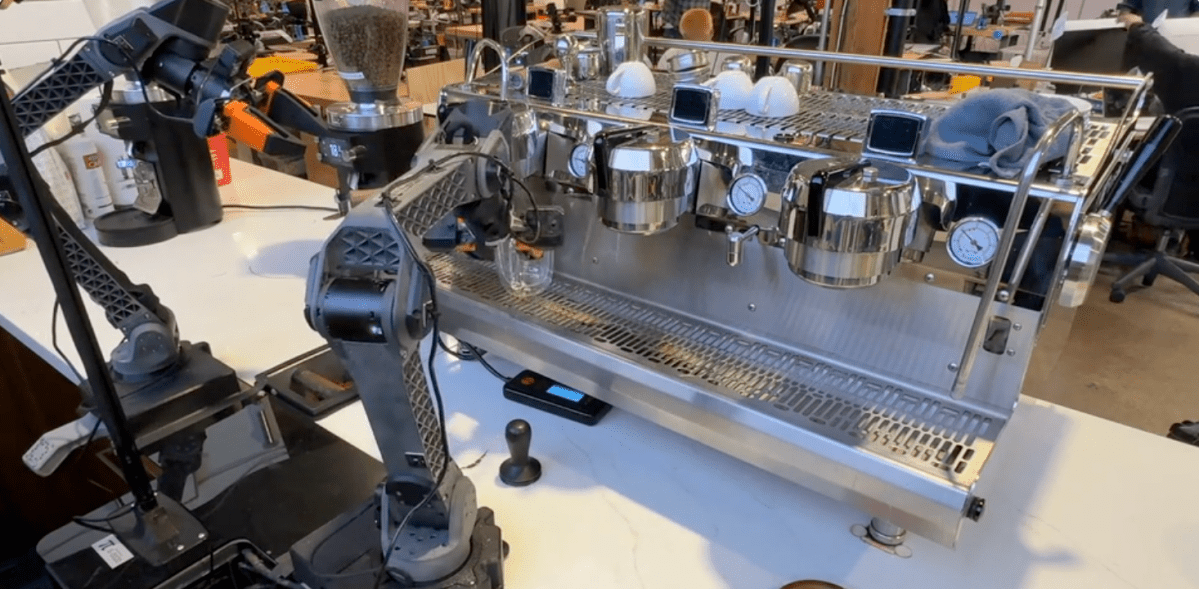

Physical Intelligence, a two-year-old robotics startup based in San Francisco, has published new research showing that its latest model can direct robots to perform tasks they were never explicitly trained on. This capability, the company's researchers say, caught them off guard. The new model, named π0.7, represents an early but significant step toward a general-purpose robot brain that can tackle unfamiliar tasks and be coached through them in plain language.

If the findings hold up, they suggest that robotic AI may be nearing an inflection point similar to the one seen with large language models, where capabilities begin to compound in ways that exceed what the training data alone would predict. The core claim in the paper concerns compositional generalization: the ability to combine skills learned in different contexts to solve problems the model has never encountered.

Until now, the standard approach to robot training has been essentially rote memorization: collect data on specific tasks, train a specialized model, and repeat for every new task. π0.7, according to Physical Intelligence, breaks that pattern. Sergey Levine, a co-founder of the company and a professor at UC Berkeley, noted that once the model crosses the threshold from performing only the tasks it was trained on to remixing skills in new ways, its capabilities begin to scale more than linearly with the amount of data.

One of the most striking demonstrations involves an air fryer that the model had essentially never seen in training. The research team found only two relevant episodes in the entire training dataset. Nevertheless, the model managed to synthesize those fragments into a functional understanding of how the appliance works. Without any coaching, the model made a passable attempt at using the air fryer to cook a sweet potato. With step-by-step verbal instructions, it successfully completed the task.

This coaching capability is significant because it suggests that robots could be deployed in new environments and improved in real time without additional data collection or model retraining. However, the researchers are cautious about the model's limitations and acknowledge that their own shortcomings in prompt engineering held down initial success rates. After they refined their task explanations, success rates improved dramatically.

The model is not yet capable of executing complex multi-step tasks autonomously from a single high-level command. The team also acknowledged that robotics lacks standardized benchmarks, which makes external validation of their claims difficult. Instead, they measured π0.7 against their previous specialized models and found that the generalist model matched the specialists' performance across various complex tasks.

What may be most notable about this research is not any single demonstration but the degree to which the results surprised the researchers, who typically know precisely what is in the training data. Levine highlighted the asymmetry between language models and robots, arguing that the distinction between an impressive demo and a system that genuinely generalizes is crucial.
