Build the AI Grid with NVIDIA: Orchestrate Intelligence Everywhere

AI-native services are revealing a new bottleneck in AI infrastructure: as millions of users, agents, and devices demand access to intelligence, the challenge is shifting from peak training throughput to delivering deterministic inference at scale. At GTC 2026, NVIDIA announced that telcos and distributed cloud providers are transforming their networks into AI grids, embedding accelerated computing across a mesh of regional nodes, central offices, and edge locations to meet the needs of AI-native services.

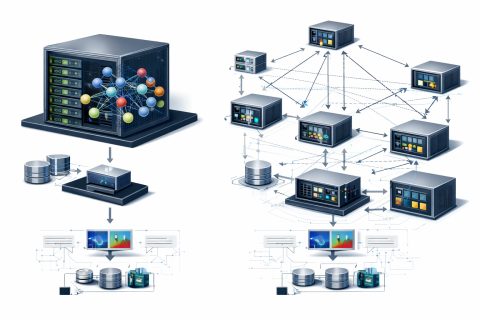

AI grids enable real-time, multi-modal, and hyper-personalized AI experiences at scale by running inference across distributed, workload-, resource-, and KPI-aware AI infrastructure. The NVIDIA AI Grid reference design provides a unified framework for building geographically distributed, interconnected, and orchestrated AI infrastructure. A key aspect of this design is the AI grid control plane, which turns siloed clusters and regions into a single programmable platform.

Intelligent workload placement is crucial for applications where latency, bandwidth, personalization, or sovereignty become first-order design constraints. For instance, latency-sensitive applications such as physical AI (robots, sensors), conversational agents, and augmented reality must optimize for both latency and jitter. AI grids not only accelerate classical edge applications but also unlock a new set of AI-native services built around real-time generation and personalization.

One example is voice services. Human-grade voice AI is extremely sensitive to end-to-end latency: once it exceeds 500 ms, conversations feel noticeably laggy. Keeping time-to-first-token at the client within that threshold therefore becomes a hard SLO. AI grids help meet it by placing inference at regional nodes, which cuts network round-trip time and reduces queuing latency.
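To make the placement idea concrete, here is a minimal sketch of a latency-budget check. It is not the NVIDIA AI grid control plane or any of its APIs; the `Node` class, the `place` function, the three-term latency model (round trip plus queuing plus time to produce the first token), and all node names and numbers are hypothetical values chosen for illustration. Only the 500 ms target comes from the text.

```python
from dataclasses import dataclass
from typing import List, Optional

TTFT_BUDGET_MS = 500.0  # the 500 ms target mentioned in the article


@dataclass(frozen=True)
class Node:
    """A candidate inference location (hypothetical model, illustrative only)."""
    name: str
    rtt_ms: float      # network round trip between client and node
    queue_ms: float    # expected wait before the request is scheduled
    prefill_ms: float  # model time needed to emit the first token

    @property
    def ttft_ms(self) -> float:
        # Assumed additive estimate of time-to-first-token at the client.
        return self.rtt_ms + self.queue_ms + self.prefill_ms


def place(nodes: List[Node], budget_ms: float = TTFT_BUDGET_MS) -> Optional[Node]:
    """Return the node with the lowest estimated TTFT within budget, else None."""
    eligible = [n for n in nodes if n.ttft_ms <= budget_ms]
    return min(eligible, key=lambda n: n.ttft_ms, default=None)


# Made-up numbers: the central site is farther away and more heavily queued.
nodes = [
    Node("central-cloud", rtt_ms=120.0, queue_ms=260.0, prefill_ms=180.0),
    Node("regional-edge-a", rtt_ms=15.0, queue_ms=40.0, prefill_ms=180.0),
    Node("regional-edge-b", rtt_ms=25.0, queue_ms=90.0, prefill_ms=180.0),
]

choice = place(nodes)
print(choice.name if choice else "no node meets the budget")
```

In this toy setup the model time is identical everywhere, so the regional nodes win purely on shorter round trips and shorter queues, which are the two terms the article says regional placement reduces. A real scheduler would also weigh factors such as capacity, cost, and data sovereignty.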

A benchmark from Comcast shows that an AI grid deployment keeps end-to-end latency for voice interactions within the 500 ms target even as concurrent sessions spike, because inference runs on regional edge nodes. Throughput also improves under increased load as four edge nodes absorb demand in parallel, reaching 42,362 tokens per second at burst, an 80.9% gain over baseline.
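The baseline throughput is not stated, but it can be backed out from the two reported figures. The sketch below assumes the 80.9% gain is a relative increase over the baseline's tokens per second; the variable names are illustrative, and the result is an implied estimate rather than a published number.

```python
BURST_TOKENS_PER_S = 42_362  # reported burst throughput across four edge nodes
GAIN = 0.809                 # reported 80.9% gain over baseline

# If burst = baseline * (1 + gain), then baseline = burst / (1 + gain).
implied_baseline = BURST_TOKENS_PER_S / (1 + GAIN)

print(f"implied baseline: about {implied_baseline:,.0f} tokens/s")
```

Under that assumption the baseline works out to roughly 23,400 tokens per second.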
